Here is an interview with Elissa Fink, VP Marketing of that new wonderful software called Tableau that makes data visualization so nice and easy to learn and work with.

Ajay- Describe your career journey from high school to over 20 plus years in marketing. What are the various trends that you have seen come and go in marketing.

Elissa- I studied literature and linguistics in college and didn’t discover analytics until my first job selling advertising for the Wall Street Journal. Oddly enough, the study of linguistics is not that far from decision analytics: they both are about taking a structured view of information and trying to see and understand common patterns. At the Journal, I was completely captivated analyzing and comparing readership data. At the same time, the idea of using computers in marketing was becoming more common. I knew that the intersection of technology and marketing was going to radically change things – how we understand consumers, how we market and sell products, and how we engage with customers. So from that point on, I’ve always been focused on technology and marketing, whether it’s working as a marketer at technology companies or applying technology to marketing problems for other types of companies. There have been so many interesting trends. Taking a long view, a key trend I’ve noticed is how marketers work to understand, influence and motivate consumer behavior. We’ve moved marketing from where it was primarily unpredictable, qualitative and aimed at talking to mass audiences, where the advertising agency was king. Now it’s a discipline that is more data-driven, quantitative and aimed at conversations with individuals, where the best analytics wins. As with any trend, the pendulum swings far too much to either side causing backlashes but overall, I think we are in a great place now. We are using data-driven analytics to understand consumer behavior. But pure analytics is not the be-all, end-all; good marketing has to rely on understanding human emotions, intuition and gut feel – consumers are far from rational so taking only a rational or analytical view of them will never explain everything we need to know.

Ajay- Do you think technology companies are still predominantly dominated by men . How have you seen diversity evolve over the years. What initiatives has Tableau taken for both hiring and retaining great talent.

Elissa- The thing I love about the technology industry is that its key success metrics – inventing new products that rapidly gain mass adoption in pursuit of making profit – are fairly objective. There’s little subjective nature to the counting of dollars collected selling a product and dollars spent building a product. So if a female can deliver a better product and bigger profits faster and better, then that female is going to get the resources, jobs, power and authority to do exactly that. That’s not to say that the technology industry is gender-blind, race-blind, etc. It isn’t – technology is far from perfect. For example, the industry doesn’t have enough diversity in positions of power. But I think overall, in comparison to a lot of other industries, it’s pretty darn good at giving people with great ideas the opportunities to realize their visions regardless of their backgrounds or characteristics.

At Tableau, we are very serious about bringing in and developing talented people – they are the key to our growth and success. Hiring is our #1 initiative so we’ve spent a lot of time and energy both on finding great candidates and on making Tableau a place that they want to work. This includes things like special recruiting events, employee referral programs, a flexible work environment, fun social events, and the rewards of working for a start-up. Probably our biggest advantage is the company itself – working with people you respect on amazing, cutting-edge products that delight customers and are changing the world is all too rare in the industry but a reality at Tableau. One of our senior software developers put it best when he wrote “The emphasis is on working smarter rather than longer: family and friends are why we work, not the other way around. Tableau is all about happy, energized employees executing at the highest level and delivering a highly usable, high quality, useful product to our customers.” People who want to be at a place like that should check out our openings at http://www.tableausoftware.com/jobs.

Ajay- What are most notable features in tableau’s latest edition. What are the principal software that competes with Tableau Software products and how would you say Tableau compares with them.

Elissa- Tableau 6.1 will be out in July and we are really excited about it for 3 reasons.

First, we’re introducing our mobile business intelligence capabilities. Our customers can have Tableau anywhere they need it. When someone creates an interactive dashboard or analytical application with Tableau and it’s viewed on a mobile device, an iPad in particular, the viewer will have a native, touch-optimized experience. No trying to get your fingertips to act like a mouse. And the author didn’t have to create anything special for the iPad; she just creates her analytics the usual way in Tableau. Tableau knows the dashboard is being viewed on an iPad and presents an optimized experience.

Second, we’ve take our in-memory analytics engine up yet another level. Speed and performance are faster and now people can update data incrementally rapidly. Introduced in 6.0, our data engine makes any data fast in just a few clicks. We don’t run out of memory like other applications. So if I build an incredible dashboard on my 8-gig RAM PC and you try to use it on your 2-gig RAM laptop, no problem.

And, third, we’re introducing more features for the international markets – including French and German versions of Tableau Desktop along with more international mapping options. It’s because we are constantly innovating particularly around user experience that we can compete so well in the market despite our relatively small size. Gartner’s seminal research study about the Business Intelligence market reported a massive market shift earlier this year: for the first time, the ease-of-use of a business intelligence platform was more important than depth of functionality. In other words, functionality that lots of people can actually use is more important than having sophisticated functionality that only specialists can use. Since we focus so heavily on making easy-to-use products that help people rapidly see and understand their data, this is good news for our customers and for us.

Ajay- Cloud computing is the next big thing with everyone having a cloud version of their software. So how would you run Cloud versions of Tableau Server (say deploying it on an Amazon Ec2 or a private cloud)

Elissa- In addition to the usual benefits espoused about Cloud computing, the thing I love best is that it makes data and information more easily accessible to more people. Easy accessibility and scalability are completely aligned with Tableau’s mission. Our free product Tableau Public and our product for commercial websites Tableau Digital are two Cloud-based products that deliver data and interactive analytics anywhere. People often talk about large business intelligence deployments as having thousands of users. With Tableau Public and Tableau Digital, we literally have millions of users. We’re serving up tens of thousands of visualizations simultaneously – talk about accessibility and scalability! We have lots of customers connecting to databases in the Cloud and running Tableau Server in the Cloud. It’s actually not complex to set up. In fact, we focus a lot of resources on making installation and deployment easy and fast, whether it’s in the cloud, on premise or what have you. We don’t want people to have spend weeks or months on massive roll-out projects. We want it to be minutes, hours, maybe a day or 2. With the Cloud, we see that people can get started and get results faster and easier than ever before. And that’s what we’re about.

Ajay- Describe some of the latest awards that Tableau has been wining. Also how is Tableau helping universities help address the shortage of Business Intelligence and Big Data professionals.

Elissa-Tableau has been very fortunate. Lately, we’ve been acknowledged by both Gartner and IDC as the fastest growing business intelligence software vendor in the world. In addition, our customers and Tableau have won multiple distinctions including InfoWorld Technology Leadership awards, Inc 500, Deloitte Fast 500, SQL Server Magazine Editors’ Choice and Community Choice awards, Data Hero awards, CODiEs, American Business Awards among others. One area we’re very passionate about is academia, participating with professors, students and universities to help build a new generation of professionals who understand how to use data. Data analysis should not be exclusively for specialists. Everyone should be able to see and understand data, whatever their background. We come from academic roots, having been spun out of a Stanford research project. Consequently, we strongly believe in supporting universities worldwide and offer 2 academic programs. The first is Tableau For Teaching, where any professor can request free term-length licenses of Tableau for academic instruction during his or her courses. And, we offer a low-cost Student Edition of Tableau so that students can choose to use Tableau in any of their courses at any time.

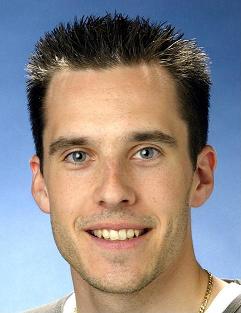

Elissa Fink, VP Marketing,Tableau Software

Elissa Fink is Tableau Software’s Vice President of Marketing. With 20+ years helping companies improve their marketing operations through applied data analysis, Elissa has held executive positions in marketing, business strategy, product management, and product development. Prior to Tableau, Elissa was EVP Marketing at IXI Corporation, now owned by Equifax. She has also served in executive positions at Tele Atlas (acquired by TomTom), TopTier Software (acquired by SAP), and Nielsen/Claritas. Elissa also sold national advertising for the Wall Street Journal. She’s a frequent speaker and has spoken at conferences including the DMA, the NCDM, Location Intelligence, the AIR National Forum and others. Elissa is a graduate of Santa Clara University and holds an MBA in Marketing and Decision Systems from the University of Southern California.

Elissa first discovered Tableau late one afternoon at her previous company. Three hours later, she was still “at play” with her data. “After just a few minutes using the product, I was getting answers to questions that were taking my company’s programmers weeks to create. It was instantly obvious that Tableau was on a special mission with something unique to offer the world. I just had to be a part of it.”

To know more – read at http://www.tableausoftware.com/

and existing data viz at http://www.tableausoftware.com/learn/gallery

Storm seasons: measuring and tracking key indicators

|

What’s happening with local real estate prices?

|

How are sales opportunities shaping up?

|

Identify your best performing products

|

|

|

|

|

|

|

|

|

Applying user-defined parameters to provide context

|

Not all tech companies are rocket ships

|

What’s really driving the economy?

|

Considering factors and industry influencers

|

The complete orbit along the inside, or around a fixed circle

|

How early do you have to be at the airport?

|

What happens if sales grow but so does customer churn?

|

What are the trends for new retail locations?

|

How have student choices changed?

|

Do patients who disclose their HIV status recover better?

|

Closer look at where gas prices swing in areas of the U.S.

|

U.S. Census data shows more women of greater age

|

Where do students come from and how does it affect their grades?

|

Tracking customer service effectiveness

|

Comparing national and local test scores

|

What factors correlate with high overall satisfaction ratings?

|

|

|

Fund inflows largely outweighed outflows well after the bubble

|

Which programs are competing for federal stimulus dollars?

|

Oil prices and volatility

|

A classic candlestick chart

|

How do oil, gold and CPI relate to the GDP growth rate?

|