Here is an interview with one of the younger researchers and rock stars of the R Project, John Myles White, co-author of Machine Learning for Hackers.

Ajay- What inspired you guys to write Machine Learning for Hackers? What has been the public response to the book? Are you planning to write a second edition or another book?

John- We decided to write Machine Learning for Hackers because there were so many people interested in learning more about Machine Learning who found the standard textbooks a little difficult to understand, either because they lacked the mathematical background expected of readers or because it wasn’t clear how to translate the mathematical definitions in those books into usable programs. Most Machine Learning books are written for audiences who will not only be using Machine Learning techniques in their applied work, but also actively inventing new Machine Learning algorithms. The amount of information needed to do both can be daunting, because, as one friend pointed out, it’s similar to insisting that everyone learn how to build a compiler before they can start to program. For most people, it’s better to let them try out programming and get a taste for it before you teach them about the nuts and bolts of compiler design. If they like programming, they can delve into the details later.

We once said that Machine Learning for Hackers is supposed to be a chemistry set for Machine Learning and I still think that’s the right description: it’s meant to get readers excited about Machine Learning and hopefully expose them to enough ideas and tools that they can start to explore on their own more effectively. It’s like a warmup for standard academic books like Bishop’s.

The public response to the book has been phenomenal. It’s been amazing to see how many people have bought the book and how many people have told us they found it helpful. Even friends with substantial expertise in statistics have said they’ve found a few nuggets of new information in the book, especially regarding text analysis and social network analysis — topics that Drew and I spend a lot of time thinking about, but are not thoroughly covered in standard statistics and Machine Learning undergraduate curricula.

I hope we write a second edition. It was our first book and we learned a ton about how to write at length from the experience. I’m about to announce later this week that I’m writing a second book, which will be a very short eBook for O’Reilly. Stay tuned for details.

Ajay- What are the key things that a potential reader can learn from this book?

John- We cover most of the nuts and bolts of introductory statistics in our book: summary statistics, regression and classification using linear and logistic regression, PCA and k-Nearest Neighbors. We also cover topics that are less well known, but are just as important: density plots vs. histograms, regularization, cross-validation, MDS, social network analysis and SVMs. I hope a reader walks away from the book having a feel for what different basic algorithms do and why they work for some problems and not others. I also hope we do just a little to shift a future generation of modeling culture towards regularization and cross-validation.

Ajay- Describe your journey as a science student up to your Ph.D. What are your current research interests, and what initiatives have you undertaken with them?

John- As an undergraduate I studied math and neuroscience. I then took some time off and came back to do a Ph.D. in psychology, focusing on mathematical modeling of both the brain and behavior. There’s a rich tradition of machine learning and statistics in psychology, so I got increasingly interested in ML methods during my years as a grad student. I’m about to finish my Ph.D. this year. My research interests all fall under one heading: decision theory. I want to understand both how people make decisions (which is what psychology teaches us) and how they should make decisions (which is what statistics and ML teach us). My thesis is focused on how people make decisions when there are both short-term and long-term consequences to be considered. For non-psychologists, the classic example is probably the explore-exploit dilemma. I’ve been working to import more of the main ideas from stats and ML into psychology for modeling how real people handle that trade-off. For psychologists, the classic example is the Marshmallow experiment. Most of my research work has focused on the latter: what makes us patient and how can we measure patience?

Ajay- How can academia and the private sector solve the shortage of trained data scientists (assuming there is one)?

John- There’s definitely a shortage of trained data scientists: most companies are finding it difficult to hire someone with the real chops needed to do useful work with Big Data. The skill set required to be useful at a company like Facebook or Twitter is much more advanced than many people realize, so I think it will be some time until there are undergraduates coming out with the right stuff. But there’s huge demand, so I’m sure the market will clear sooner or later.

The changes that are required in academia to prepare students for this kind of work are pretty numerous, but the most obvious required change is that quantitative people need to be learning how to program properly, which is rare in academia, even in many CS departments. Writing one-off programs that no one will ever have to reuse and that only work on toy data sets doesn’t prepare you for working with huge amounts of messy data that exhibit shifting patterns. If you need to learn how to program seriously before you can do useful work, you’re not very valuable to companies who need employees that can hit the ground running. The companies that have done best in building up data teams, like LinkedIn, have learned to train people as they come in since the proper training isn’t typically available outside those companies.

Of course, on the flipside, the people who do know how to program well need to start learning more about theory and need to start to have a better grasp of basic mathematical models like linear and logistic regressions. Lots of CS students seem not to enjoy their theory classes, but theory really does prepare you for thinking about what you can learn from data. You may not use automata theory if you work at Foursquare, but you will need to be able to reason carefully and analytically. Doing math is just like lifting weights: if you’re not good at it right now, you just need to dig in and get yourself in shape.

About-

John Myles White is a Ph.D. student in the Princeton Psychology Department, where he studies human decision-making both theoretically and experimentally. Along with the political scientist Drew Conway, he is the author of a book published by O’Reilly Media entitled “Machine Learning for Hackers”, which is meant to introduce experienced programmers to the machine learning toolkit. He is also working with Mark Hansen on a book for laypeople about exploratory data analysis. John is the lead maintainer for several R packages, including ProjectTemplate and log4r.

(TIL he has played in several rock bands!)

—–

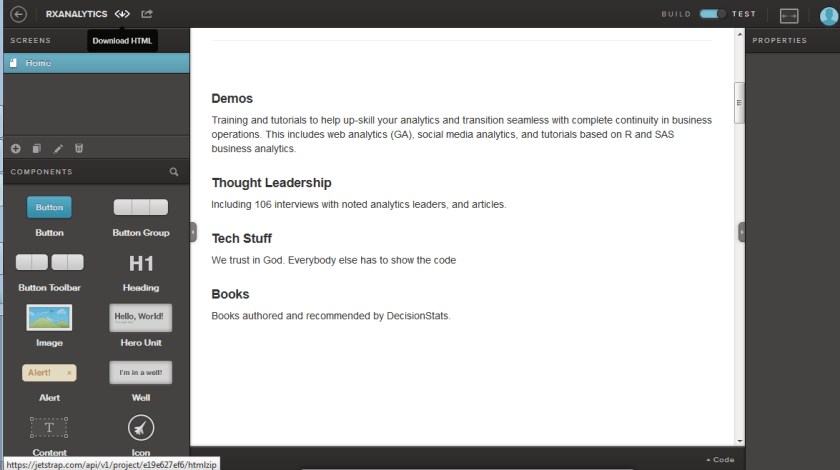

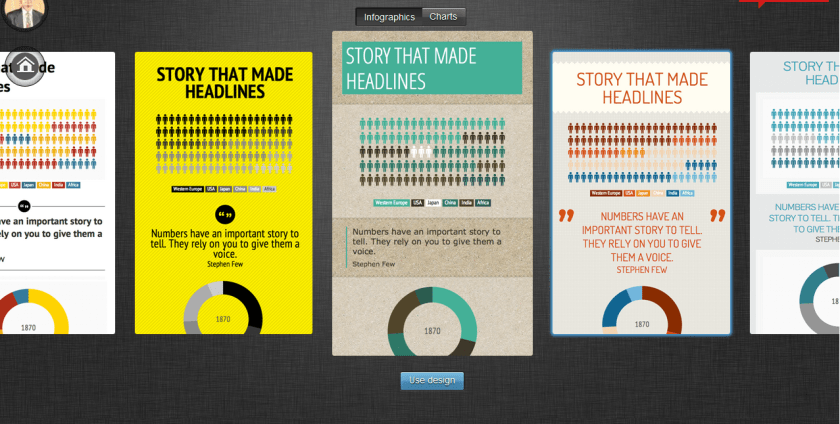

Choose New Infographic or New Chart?

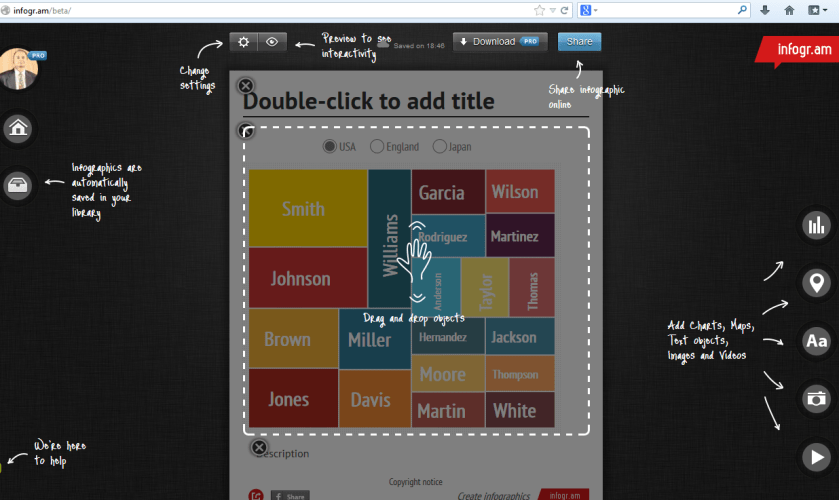

Choose New Infographic or New Chart? Create using options-you can edit the table, figures, text, colors etc

Create using options-you can edit the table, figures, text, colors etc