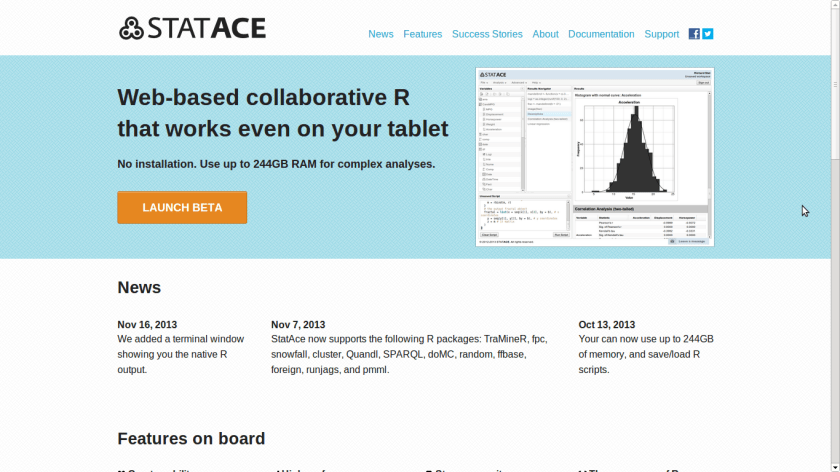

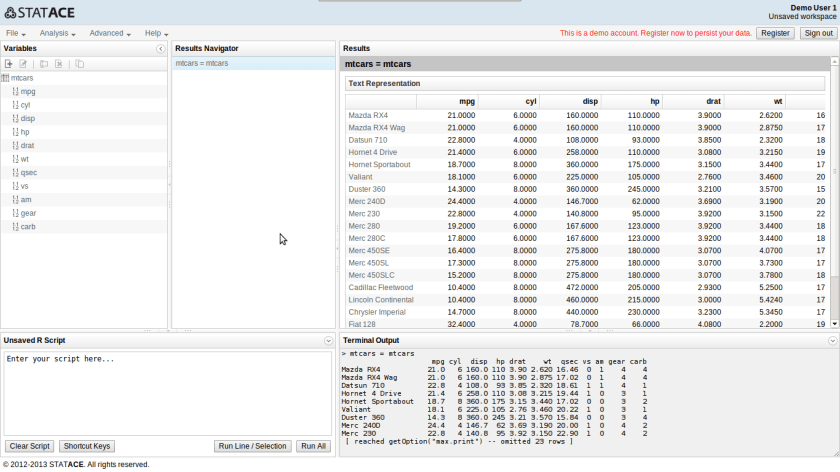

Here is an interview with Christian Mladenov, CEO of StatAce, a hot startup in cloud-based data science and statistical computing.

Ajay Ohri (AO)- What is the difference between using R through StatAce and using R through RStudio on an RStudio server hosted on Amazon EC2?

Christian Mladenov (CM)- There are a few ways in which I think StatAce is better:

-

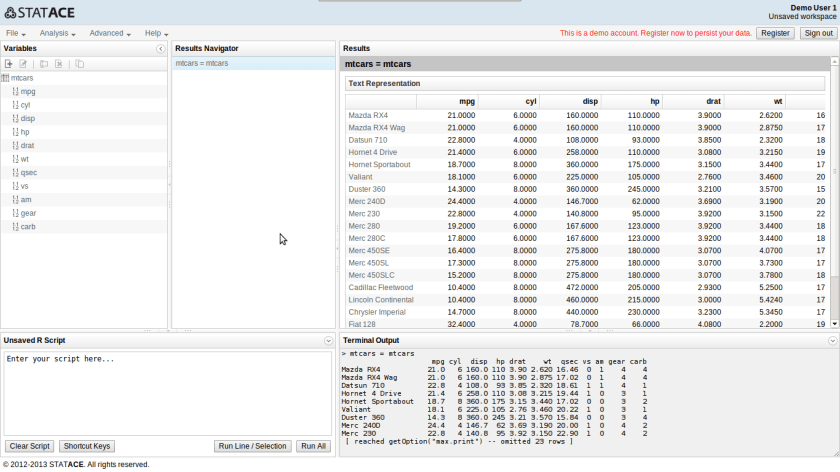

You do not need the technical skills to set up a server. You can instead start straight away at the click of a button.

-

You can save the full results for later reference. With an RStudio server you need to manually save and organize the text output and the graphics.

-

We are aiming to develop a visual interface for all the standard stuff. Then you will not need to know R at all.

-

We are developing features for collaboration, so that you can access and track changes to data, scripts and results in a team. With an RStudio server, you manage commits yourself, and Git is not suitable for large data files.

AO- How do you aim to differentiate yourself from other providers of R-based software, including Revolution, RStudio, Rapporter and even Oracle R Enterprise?

CM- We aim to build a scalable, collaborative and easy-to-use environment. Pretty much everything else in the R ecosystem is lacking one, if not two, of these. Most of the GUIs lack a visual way of doing the standard analyses. The ones that have it (e.g. Deducer) have rather poor usability. Collaboration tools are hardly built in. RStudio has Git integration, but you need to set it up yourself, and you cannot really track large source data in Git.

Revolution Analytics have great technology, but you need to know R and you need to know how to maintain servers for large-scale work. It is not very collaborative and can become quite expensive.

Rapporter is great for generating reports, but it is not very interactive – editing templates is a bit cumbersome if you just need to run a few commands. I think it wants to be the place to go to after you have finalized the development of the R code, so that you can share it. Right now, I also do not see the scalability.

With Oracle R Enterprise you again need to know R. It is targeted at large enterprises and I imagine it is quite expensive, considering it only works with Oracle’s database. For that you need an IT team.

AO- How do you see the space for using R on a cloud?

CM- I think this is an area that has not received enough quality attention – there are some great efforts (e.g. ElasticR), but they are targeted at experienced R users. I see a few factors that facilitate the migration to the cloud:

- Statisticians collaborate more and more, which means they need to have a place to share data, scripts and results.

- The number of devices people use is increasing, and now frequently includes a tablet. Having things accessible through the web gives more freedom.

- More and more data lives on servers. This is both because it is generated there (e.g. click streams) and because it is too big to fit on a user’s PC (e.g. raw DNA data). Using it where it already is prevents slow download/upload.

- Centralizing data, scripts and results improves compliance (everybody knows where it is), reproducibility and reliability (it is easily backed up).

For me, bringing R to the cloud is a great opportunity.

AO- What are some of the key technical challenges you currently face and are seeking to solve for R-based cloud solutions?

CM- Our main challenge is CPU use, since cloud servers typically have multiple slow cores and R is mostly single-threaded. We have yet to fully address that and are actively following the projects that aim to improve R’s interpreter – pqR, Renjin, Riposte, etc. One option is to move to bare metal servers, but then we will lose a lot of flexibility.

Another challenge is multi-server processing. This is also a work in progress, and we do not yet have a stable solution.

AO- What are some of the advantages and disadvantages of being a Europe-based tech startup vis-à-vis a San Francisco-based tech startup?

CM- In Eastern Europe at least, you can live quite cheaply, therefore you can better focus on the product and the customers. In the US you need to spend a lot of time courting investors.

Eastern Europe also has a lot of technical talent – it is not that difficult or expensive to hire experienced engineers.

The disadvantages are many, and I think they outweigh the advantages:

- Capital is scarce, especially after the seed stage. This means startups either have to focus on profit, which limits their ability to execute a grander vision, or they need to move to the US, which wastes a lot of time and resources.

- There is limited access to customers, partners, mentors and advisors. Most of the startup innovation happens in the US and its users prefer to deal with local companies.

- The environment in Europe is not as supportive in terms of events, media coverage, and even social acceptance. In many countries founders are viewed with a bit of suspicion, and failure frequently means the end to one’s credibility.

AO- What advice would you give to aspiring data scientists?

CM- Use open-source. R, Julia, Octave and the others are seeing a level of innovation that the commercial solutions just cannot match. They are very flexible and versatile, and if you need something specific, you should learn some Python and do it yourself.

Keep things reproducible, or at some point you will get lost. This includes a version control system.

Be active in the community. While books are great, sharing and seeking advice will improve your skills much faster.

Focus more on “why” you do something and “what” you want to achieve. Only then get technical about “how” you want to do it. Use a good IDE that facilitates your work and allows you to do the simple things fast. You know, like StatAce 🙂

AO- Describe your career journey from student to CEO.

CM- During my bachelor's studies I worked as a software developer and customer intelligence analyst. This gave me a lot of perspective on software and data.

After graduating I got a job where I coordinated processes and led projects. This is where I discovered the importance of listening to customers, planning well in advance, and having good data to base decisions on.

During my master's studies, it was my statistics-heavy thesis that made me think “why is there not a place where I can easily use the power of R on a machine with a lot of RAM?” This is when the idea for StatAce was born.

About StatAce-

Bulgarian StatAce is the winner of betapitch | global, which was held in Berlin on 6 July. The team, driven by the lack of software for low student budgets, came up with the idea of building “Google docs for professional statisticians” and eventually took home the first prize of the startup competition.
