Maybe the guys in Anonymous or WikiLeaks can now use visual cryptography over Snapchat to fool the NSA or CIA.

Personally, I think a browser with inbuilt backdoors to Tor relays and data transfer by BitTorrent could be a worthy project too.

Quit the bullshit, Google: you are as evil as the Russian Communist Empire.

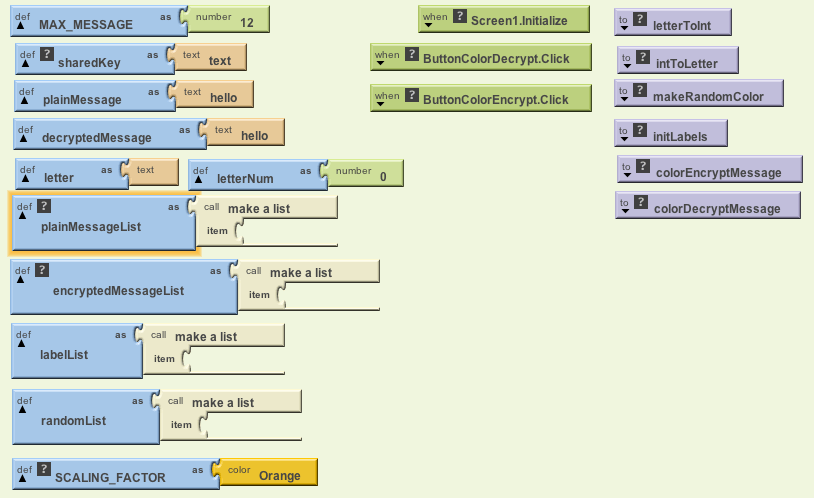

I was just reading through my weekly to-read list and came across this interesting method. It is called the Play Color Cipher:

Each character (capital and small letters, numbers 0-9, and keyboard symbols) in the plain text is substituted with a color block from the available 18 decillion colors in the world [11][12][13], and at the receiving end the cipher text block (in color) is decrypted into a plain text block. According to the paper, this overcomes problems like the meet-in-the-middle attack, the birthday attack, and brute-force attacks [1].

The paper also says the plain text shrinks by a factor of 4 when it is encrypted into cipher text, without any loss of content. The cipher text occupies very little buffer space, so transmission through the channel is fast and the cost of transmission comes down.
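To make the substitution idea concrete, here is a minimal toy sketch of a character-to-color mapping. It is not the algorithm from the paper: the key-seeded table, the `ALPHABET` constant, and the function names `build_color_table`, `encrypt`, and `decrypt` are all assumptions made up for illustration, and Python's `random` module is not cryptographically secure. It also makes no attempt at the size reduction the paper describes.

```python
import random
import string

# Hypothetical alphabet for this sketch: letters, digits, keyboard symbols, space.
ALPHABET = string.ascii_letters + string.digits + string.punctuation + " "

def build_color_table(key, alphabet=ALPHABET):
    """Map each character to a distinct 24-bit RGB color picked by a keyed PRNG."""
    rng = random.Random(key)  # illustration only: NOT a secure generator
    codes = rng.sample(range(256 ** 3), len(alphabet))  # distinct colors
    return {
        ch: ((c >> 16) & 0xFF, (c >> 8) & 0xFF, c & 0xFF)
        for ch, c in zip(alphabet, codes)
    }

def encrypt(plaintext, table):
    """Replace every character with its color block (an RGB triple)."""
    return [table[ch] for ch in plaintext]

def decrypt(colors, table):
    """Invert the table and turn color blocks back into characters."""
    inverse = {rgb: ch for ch, rgb in table.items()}
    return "".join(inverse[rgb] for rgb in colors)

if __name__ == "__main__":
    table = build_color_table(key=2024)
    blocks = encrypt("Play Color Cipher", table)
    print(blocks[:3])              # first three color blocks
    print(decrypt(blocks, table))  # round-trips to the original text
```

Both sides need the same key to rebuild the same table, so in this sketch the key plays the role of the shared secret.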

Reference:

http://www.ijcaonline.org/journal/number28/pxc387832.pdf

Visual cryptography is indeed an interesting topic:

Visual cryptography, an emerging cryptography technology, uses the characteristics of human vision to decrypt encrypted images. It needs neither cryptography knowledge nor complex computation. For security concerns, it also ensures that hackers cannot perceive any clues about a secret image from individual cover images. Since Naor and Shamir proposed the basic model of visual cryptography, researchers have published many related studies.

Visual cryptography (VC) schemes hide the secret image into two or more images which are called shares. The secret image can be recovered simply by stacking the shares together without any complex computation involved. The shares are very safe because separately they reveal nothing about the secret image.

Visual cryptography provides one of the secure ways to transfer images on the Internet. The advantage of visual cryptography is that it exploits human eyes to decrypt secret images.

ESPECIALLY SEE THIS AND THIS

http://cacr.uwaterloo.ca/~dstinson/VCS-flag.html

and

http://cacr.uwaterloo.ca/~dstinson/VCS-pi.html

Even more fun: visual cryptography using a series of bar codes, leaving the man in the middle guessing how many sub-images there are and which, if any, is the real message.

References:

Color Visual Cryptography Scheme Using Meaningful Shares

http://csis.bits-pilani.ac.in/faculty/murali/netsec-10/seminar/refs/muralikrishna4.pdf

Visual cryptography for color images

http://csis.bits-pilani.ac.in/faculty/murali/netsec-10/seminar/refs/muralikrishna3.pdf

Other Resources

- http://users.telenet.be/d.rijmenants/en/visualcrypto.htm

- Visual Crypto – One-time Image: create two secure images from one, by Robert Hansen

- Visual Crypto Java Applet at the University of Regensburg

- Visual Cryptography Kit: software to create image layers

- On-line Visual Crypto Applet by Leemon Baird

- Extended Visual Cryptography (pdf) by Mizuho Nakajima and Yasushi Yamaguchi

- Visual Cryptography Paper by Moni Naor and Adi Shamir

- Visual Crypto Talk (pdf) by Frederik Vercauteren ESAT Leuven

- http://cacr.uwaterloo.ca/~dstinson/visual.html

- The University of Salerno web page on visual cryptography

- Visual Crypto Page by Doug Stinson

- Constructions and Bounds for Visual Cryptography. Lecture Notes in Computer Science 1099 (1996), 416-428 (23rd International Colloquium on Automata, Languages and Programming).

- Visual Cryptography for General Access Structures. Information and Computation 129 (1996), 86-106 (this paper is an expanded and revised version of the conference paper).

- On the Contrast in Visual Cryptography Schemes. Journal of Cryptology 12 (1999), 261-289.

- Extended Schemes for Visual Cryptography. Theoretical Computer Science 250 (2001), 143-161.

- Threshold Visual Cryptography Schemes With Specified Whiteness Levels of Reconstructed Pixels. Designs, Codes and Cryptography 25 (2002), 15-61.

- Contrast Optimal Threshold Visual Cryptography Schemes. SIAM J. on Discrete Math. 16 (2003), 224-261.

- “Visual Cryptography: Seeing is Believing”

- Example: face http://cacr.uwaterloo.ca/~dstinson/VCS-happyface.html

- flag http://cacr.uwaterloo.ca/~dstinson/VCS-flag.html

- pi http://cacr.uwaterloo.ca/~dstinson/VCS-pi.html

- Simple implementation of the visual cryptography scheme based on Moni Naor and Adi Shamir, Visual Cryptography, EUROCRYPT 1994, pp. 1–12. This technique allows visual information like pictures to be encrypted so that decryption can be done visually. The code outputs two files. Try printing them on two separate transparencies and putting them one on top of the other to see the hidden message. http://algorito.com/algorithm/visual-cryptography

Visual Cryptography

- Moni Naor and Adi Shamir, Visual Cryptography, Eurocrypt 94. Postscript, gzipped Postscript

- Moni Naor and Adi Shamir, Visual Cryptography II, Cambridge Workshop on Protocols, 1996. Postscript, gzipped Postscript

- Moni Naor and Benny Pinkas, Visual Authentication, Crypto 97. Postscript, gzipped Postscript

—

Ajay: I think a combination of sharing and color ciphers would do more to secure Internet communication than existing algorithms. It also levels the playing field between computationally rich players and creative coders.