Here is an interview with Dr Ingo Mierswa , CEO of Rapid -I and Dr Simon Fischer, Head R&D. Rapid-I makes the very popular software Rapid Miner – perhaps one of the earliest leading open source software in business analytics and business intelligence. It is quite easy to use, deploy and with it’s extensions and innovations (including compatibility with R )has continued to grow tremendously through the years.

In an extensive interview Ingo and Simon talk about algorithms marketplace, extensions , big data analytics, hadoop, mobile computing and use of the graphical user interface in analytics.

Special Thanks to Nadja from Rapid I communication team for helping coordinate this interview.( Statuary Blogging Disclosure- Rapid I is a marketing partner with Decisionstats as per the terms in https://decisionstats.com/privacy-3/)

Ajay- Describe your background in science. What are the key lessons that you have learnt while as scientific researcher and what advice would you give to new students today.

Ingo: My time as researcher really was a great experience which has influenced me a lot. I have worked at the AI lab of Prof. Dr. Katharina Morik, one of the persons who brought machine learning and data mining to Europe. Katharina always believed in what we are doing, encouraged us and gave us the space for trying out new things. Funnily enough, I never managed to use my own scientific results in any real-life project so far but I consider this as a quite common gap between science and the “real world”. At Rapid-I, however, we are still heavily connected to the scientific world and try to combine the best of both worlds: solving existing problems with leading-edge technologies.

Simon: In fact, during my academic career I have not worked in the field of data mining at all. I worked on a field some of my colleagues would probably even consider boring, and that is theoretical computer science. To be precise, my research was in the intersection of game theory and network theory. During that time, I have learnt a lot of exciting things, none of which had any business use. Still, I consider that a very valuable experience. When we at Rapid-I hire people coming to us right after graduating, I don’t care whether they know the latest technology with a fancy three-letter acronym – that will be forgotten more quickly than it came. What matters is the way you approach new problems and challenges. And that is also my recommendation to new students: work on whatever you like, as long as you are passionate about it and it brings you forward.

Ajay- How is the Rapid Miner Extensions marketplace moving along. Do you think there is a scope for people to say create algorithms in a platform like R , and then offer that algorithm as an app for sale just like iTunes or Android apps.

Simon: Well, of course it is not going to be exactly like iTunes or Android apps are, because of the more business-orientated character. But in fact there is a scope for that, yes. We have talked to several developers, e.g., at our user conference RCOMM, and several people would be interested in such an opportunity. Companies using data mining software need supported software packages, not just something they downloaded from some anonymous server, and that is only possible through a platform like the new Marketplace. Besides that, the marketplace will not only host commercial extensions. It is also meant to be a platform for all the developers that want to publish their extensions to a broader community and make them accessible in a comfortable way. Of course they could just place them on their personal Web pages, but who would find them there? From the Marketplace, they are installable with a single click.

Ingo: What I like most about the new Rapid-I Marketplace is the fact that people can now get something back for their efforts. Developing a new algorithm is a lot of work, in some cases even more that developing a nice app for your mobile phone. It is completely accepted that people buy apps from a store for a couple of Dollars and I foresee the same for sharing and selling algorithms instead of apps. Right now, people can already share algorithms and extensions for free, one of the next versions will also support selling of those contributions. Let’s see what’s happening next, maybe we will add the option to sell complete RapidMiner workflows or even some data pools…

Ajay- What are the recent features in Rapid Miner that support cloud computing, mobile computing and tablets. How do you think the landscape for Big Data (over 1 Tb ) is changing and how is Rapid Miner adapting to it.

Simon: These are areas we are very active in. For instance, we have an In-Database-Mining Extension that allows the user to run their modelling algorithms directly inside the database, without ever loading the data into memory. Using analytic databases like Vectorwise or Infobright, this technology can really boost performance. Our data mining server, RapidAnalytics, already offers functionality to send analysis processes into the cloud. In addition to that, we are currently preparing a research project dealing with data mining in the cloud. A second project is targeted towards the other aspect you mention: the use of mobile devices. This is certainly a growing market, of course not for designing and running analyses, but for inspecting reports and results. But even that is tricky: When you have a large screen you can display fancy and comprehensive interactive dashboards with drill downs and the like. On a mobile device, that does not work, so you must bring your reports and visualizations very much to the point. And this is precisely what data mining can do – and what is hard to do for classical BI.

Ingo: Then there is Radoop, which you may have heard of. It uses the Apache Hadoop framework for large-scale distributed computing to execute RapidMiner processes in the cloud. Radoop has been presented at this year’s RCOMM and people are really excited about the combination of RapidMiner with Hadoop and the scalability this brings.

Ajay- Describe the Rapid Miner analytics certification program and what steps are you taking to partner with academic universities.

Ingo: The Rapid-I Certification Program was created to recognize professional users of RapidMiner or RapidAnalytics. The idea is that certified users have demonstrated a deep understanding of the data analysis software solutions provided by Rapid-I and how they are used in data analysis projects. Taking part in the Rapid-I Certification Program offers a lot of benefits for IT professionals as well as for employers: professionals can demonstrate their skills and employers can make sure that they hire qualified professionals. We started our certification program only about 6 months ago and until now about 100 professionals have been certified so far.

Simon: During our annual user conference, the RCOMM, we have plenty of opportunities to talk to people from academia. We’re also present at other conferences, e.g. at ECML/PKDD, and we are sponsoring data mining challenges and grants. We maintain strong ties with several universities all over Europe and the world, which is something that I would not want to miss. We are also cooperating with institutes like the ITB in Dublin during their training programmes, e.g. by giving lectures, etc. Also, we are leading or participating in several national or EU-funded research projects, so we are still close to academia. And we offer an academic discount on all our products 🙂

Ajay- Describe the global efforts in making Rapid Miner a truly international software including spread of developers, clients and employees.

Simon: Our clients already are very international. We have a partner network in America, Asia, and Australia, and, while I am responding to these questions, we have a training course in the US. Developers working on the core of RapidMiner and RapidAnalytics, however, are likely to stay in Germany for the foreseeable future. We need specialists for that, and it would be pointless to spread the development team over the globe. That is also owed to the agile philosophy that we are following.

Ingo: Simon is right, Rapid-I already is acting on an international level. Rapid-I now has more than 300 customers from 39 countries in the world which is a great result for a young company like ours. We are of course very strong in Germany and also the rest of Europe, but also concentrate on more countries by means of our very successful partner network. Rapid-I continues to build this partner network and to recruit dynamic and knowledgeable partners and in the future. However, extending and acting globally is definitely part of our strategic roadmap.

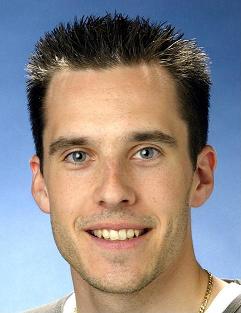

Dr. Ingo Mierswa is working as Chief Executive Officer (CEO) of Rapid-I. He has several years of experience in project management, human resources management, consulting, and leadership including eight years of coordinating and leading the multi-national RapidMiner developer team with about 30 developers and contributors world-wide. He wrote his Phd titled “Non-Convex and Multi-Objective Optimization for Numerical Feature Engineering and Data Mining” at the University of Dortmund under the supervision of Prof. Morik.

Dr. Simon Fischer is heading the research & development at Rapid-I. His interests include game theory and networks, the theory of evolutionary algorithms (e.g. on the Ising model), and theoretical and practical aspects of data mining. He wrote his PhD in Aachen where he worked in the project “Design and Analysis of Self-Regulating Protocols for Spectrum Assignment” within the excellence cluster UMIC. Before, he was working on the vtraffic project within the DFG Programme 1126 “Algorithms for large and complex networks”.

http://rapid-i.com/content/view/181/190/ tells you more on the various types of Rapid Miner licensing for enterprise, individual and developer versions.

(Note from Ajay- to receive an early edition invite to Radoop, click here http://radoop.eu/z1sxe)