I recently found an interesting example of a website that makes a lot of money and yet is much more efficient than any free or non-profit site. It is called Ecosia.

If you want to see a website that balances its administrative costs and has a transparent way of making the world better, this is a great example.

You search with Ecosia.

Key facts about the park:

- World’s largest tropical forest reserve (38,867 square kilometers, or about the size of Switzerland)

- Home to about 14% of all amphibian species and roughly 54% of all bird species in the Amazon – not to mention large populations of at least eight threatened species, including the jaguar

- Includes part of the Guiana Shield containing 25% of world’s remaining tropical rainforests – 80 to 90% of which are still pristine

- Holds the last major unpolluted water reserves in the Neotropics, containing approximately 20% of all of the Earth’s water

- One of the last tropical regions on Earth vastly unaltered by humans

- Significant contributor to climatic regulation via heat absorption and carbon storage

http://ecosia.org/statistics.php

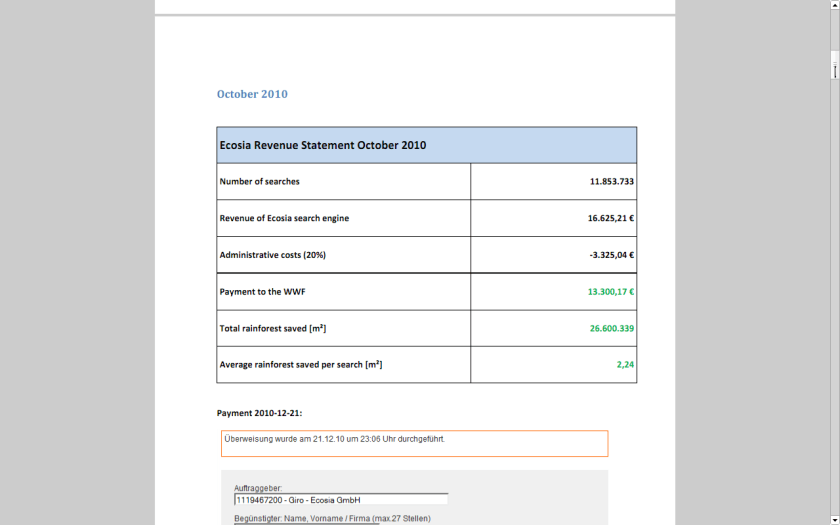

They claim to have donated 141,529.42 EUR!

http://static.ecosia.org/files/donations.pdf

Well, suppose you are the web admin of a very popular website like Wikipedia.

One way to meet server costs is to say openly: hey, I need to balance my costs, so I need some money.

The other way is to use online advertising.

I started mine with Google AdSense.

Cost per mille (CPM, the price per 1,000 impressions) gives you a very low return compared to contacting an ad sponsor directly.

But it's a great data experiment, as you can monitor:

- which companies are likely to be advertised on your site (assume Google knows more about its algorithms than you do)

- which formats (banner, text, or Flash) have what kind of conversion rates

- what the expected payoff rates are from various keywords or companies (business intelligence software, predictive analytics software, and statistical computing software are similar but have different expected returns, if you remember your economics class)

Now, based on the above data, you know the minimum baseline to expect from a private advertiser compared with a public, crowd-sourced search engine one (like Google or Bing).

Let's say you have 100,000 page views monthly, and assume one out of every 1,000 page views leads to a click. Say the advertiser pays you $1 for every click (that is, per 1,000 impressions).

Then your expected revenue is $100. But if your clicks are priced at $2.50 each and your click-through rate is now 3 out of 1,000 impressions (both very moderate increases that can be achieved by basic placement optimization of ad type, graphics, etc.), your new revenue is $750.
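If you want to play with these numbers yourself, here is a minimal Python sketch of the arithmetic above. The function and parameter names are my own, made up for illustration, and the model is deliberately simple: revenue is just page views times click-through rate times the price per click.

```python
def monthly_ad_revenue(page_views: int,
                       clicks_per_1000_views: float,
                       pay_per_click: float) -> float:
    """Expected monthly revenue under a simple pay-per-click model."""
    # Number of clicks expected from the month's page views.
    clicks = page_views * clicks_per_1000_views / 1000
    # Each click earns a fixed amount.
    return clicks * pay_per_click


# Baseline: 1 click per 1,000 views at $1 per click.
baseline = monthly_ad_revenue(100_000, 1, 1.00)

# After basic optimization: 3 clicks per 1,000 views at $2.50 per click.
optimized = monthly_ad_revenue(100_000, 3, 2.50)

print(f"baseline:  ${baseline:.2f}")
print(f"optimized: ${optimized:.2f}")
```

Swap in your own traffic and click figures to see how sensitive the result is to each of the three inputs.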

Be a good Samaritan: you decide to share some of this with your audience, say 4 Amazon books per month (or 1 free Amazon book per week). That gives you a cost of $200 and leaves you with some $550.

Wait! It doesn't end there. Adam Smith's invisible hand moves on.

You say, hmm, let me put $100 toward an annual paper-writing contest of $1,000, donate $200 to One Laptop per Child (or to Amazon rain forests, or to Haiti, etc.), pay $100 for your upgraded server hosting, and put $350 into online advertising, say $200 for search engines and $150 for Facebook.

Woah!

Month 1 should see more people visiting you for the first time. If you have a good return rate (returning visitors as a percentage) and a low bounce rate (visits of less than 5 seconds), your traffic should see at least a 20% jump in new arrivals and 5-10% in long-term arrivals. Ignoring bounces, within three months you will have one of the following:

1) An interesting case study on the statistics of online and social media advertising, tangible motivations for increasing community response, and some good data for study

2) Hopefully, better cost management of your server expenses

3) Very hopefully, a positive cash flow

You could even set a percentage and share the monthly (or better, annual) figures with your readers and advertisers.

Go ahead, change the world!

The key paradigms here are:

- sharing your traffic and revenue openly with everyone

- donating to a suitable cause

- helping increase awareness of the suitable cause

- basing allocations on fixed percentages rather than absolute numbers, to ensure your site and cause are sustained for years.
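To make the fixed-percentage idea concrete, here is a rough Python sketch. The category names and the shares are hypothetical, picked only for illustration and not taken from my own numbers above. The point is that the split scales with whatever the site earns in a given month.

```python
# Hypothetical shares of monthly revenue (illustrative only).
SHARES = {
    "reader giveaways": 0.25,
    "donation to a cause": 0.25,
    "server hosting": 0.15,
    "advertising": 0.35,
}


def allocate(revenue: float, shares: dict[str, float]) -> dict[str, float]:
    """Split revenue by fixed percentages instead of absolute amounts."""
    total = sum(shares.values())
    # Guard against shares that do not add up to 100%.
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"shares must sum to 1.0, got {total}")
    return {name: round(revenue * share, 2) for name, share in shares.items()}


# The same percentages work for a lean month and a good month.
for revenue in (100.0, 750.0):
    print(revenue, allocate(revenue, SHARES))
```

With absolute amounts, a bad month could leave nothing for hosting. With percentages, every line item shrinks or grows together.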

