I noticed the brouhaha over Google’s privacy policy. I am afraid that social networks capture much more private information than search engines do. Even if Google integrates my browser history, my social network, my emails, and my search engine keywords, I am still okay. All they are going to do is sell me better ads (rather than just flood me with ads hoping to get a click). Of course Microsoft should take it one step further and capture data from my desktop as well for better ads; that would really complete the curve. In any case, with the Patriot Act, most information is available to the Government anyway.

But it does make sense to have an easier-to-understand privacy policy, and one of my disappointments is the complete lack of visual appeal in such notices. Make things as simple as possible, but no simpler, as Al-E said.

Privacy activists forget that ads run on models built on AGGREGATED data, and most models are scored automatically. Unless you do something really weird or fake, chances are the data pertaining to you gets automatically collected, algorithmically aggregated, then modeled and scored, and an ad corresponding to your score or segment is shown to you. Probably no human eyes see the raw data (but big G can clarify that).
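To make the collect, aggregate, score, and show-an-ad loop concrete, here is a minimal sketch in Python. Everything in it is made up for illustration (the events, the topic names, the `ADS` table, and the "biggest count wins" scoring rule); it is not how Google or any real ad system works, only the general shape of the idea.

```python
from collections import defaultdict

# Hypothetical raw events: (user id, topic of the page or query).
EVENTS = [
    ("u1", "sports"), ("u1", "sports"), ("u1", "travel"),
    ("u2", "cooking"), ("u2", "travel"), ("u2", "cooking"),
]

# Hypothetical mapping from segment to ad.
ADS = {"sports": "running shoes", "cooking": "chef's knife", "travel": "cheap flights"}


def aggregate(events):
    """Collapse raw events into per-user topic counts."""
    counts = defaultdict(lambda: defaultdict(int))
    for user, topic in events:
        counts[user][topic] += 1
    return counts


def segment(topic_counts):
    """Score a user: the most frequent topic wins (ties broken alphabetically)."""
    return max(sorted(topic_counts), key=topic_counts.get)


def pick_ad(user, counts):
    """Map the user's segment to an ad, with a generic fallback."""
    return ADS.get(segment(counts[user]), "generic ad")


counts = aggregate(EVENTS)
for user in sorted(counts):
    print(user, segment(counts[user]), pick_ad(user, counts))
```

Note that once `aggregate` has run, nothing downstream touches the raw events again, which is the point of the paragraph above.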

(I also noticed Google gets a lot of free advice from bloggers. Hey, if you were really good at giving advice to Google, they WILL hire you!)

On to another approach to privacy, one that is tool-based rather than legalese-based.

I noticed tools like DNSCrypt increase internet security, so that all my integrated data goes straight to people I am okay with having it (ad sellers, not governments!).

Unfortunately it is Mac-only, and I will wait for Windows or X based tools for a better review. I noticed some lag in updating these tools, so I can only guess that the boys of Baltimore have been there, so it is best used for home users alone.

Maybe they can find a Chrome extension for DNS dummies.

http://www.opendns.com/technology/dnscrypt/

Why DNSCrypt is so significant

In the same way the SSL turns HTTP web traffic into HTTPS encrypted Web traffic, DNSCrypt turns regular DNS traffic into encrypted DNS traffic that is secure from eavesdropping and man-in-the-middle attacks. It doesn’t require any changes to domain names or how they work, it simply provides a method for securely encrypting communication between our customers and our DNS servers in our data centers. We know that claims alone don’t work in the security world, however, so we’ve opened up the source to our DNSCrypt code base and it’s available on GitHub.

DNSCrypt has the potential to be the most impactful advancement in Internet security since SSL, significantly improving every single Internet user’s online security and privacy.
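To see why "regular DNS traffic" is easy to eavesdrop on, here is a small sketch that builds an ordinary DNS query packet by hand, following the standard wire format (RFC 1035). It sends nothing over the network and does not implement DNSCrypt itself; the function name `build_dns_query` and the query ID are my own choices for illustration.

```python
import struct


def build_dns_query(hostname: str, query_id: int = 0x1234) -> bytes:
    """Build a plain (unencrypted) DNS query for an A record."""
    # Header: ID, flags (0x0100 = recursion desired), 1 question, no other records.
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    # QNAME: each label is prefixed by its length; a zero byte ends the name.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    # QTYPE 1 = A record, QCLASS 1 = Internet.
    question = qname + struct.pack("!HH", 1, 1)
    return header + question


packet = build_dns_query("example.com")
print(packet)  # the hostname labels are readable in the raw bytes
```

Anyone sitting between you and the resolver can read the site name straight out of those bytes, which is the gap that encrypting the DNS link is meant to close.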

and

http://dnscurve.org/crypto.html

The DNSCurve project adds link-level public-key protection to DNS packets. This page discusses the cryptographic tools used in DNSCurve.

Elliptic-curve cryptography

DNSCurve uses elliptic-curve cryptography, not RSA.

RSA is somewhat older than elliptic-curve cryptography: RSA was introduced in 1977, while elliptic-curve cryptography was introduced in 1985. However, RSA has shown many more weaknesses than elliptic-curve cryptography. RSA’s effective security level was dramatically reduced by the linear sieve in the late 1970s, by the quadratic sieve and ECM in the 1980s, and by the number-field sieve in the 1990s. For comparison, a few attacks have been developed against some rare elliptic curves having special algebraic structures, and the amount of computer power available to attackers has predictably increased, but typical elliptic curves require just as much computer power to break today as they required twenty years ago.

IEEE P1363 standardized elliptic-curve cryptography in the late 1990s, including a stringent list of security criteria for elliptic curves. NIST used the IEEE P1363 criteria to select fifteen specific elliptic curves at five different security levels. In 2005, NSA issued a new “Suite B” standard, recommending the NIST elliptic curves (at two specific security levels) for all public-key cryptography and withdrawing previous recommendations of RSA.

Some specific types of elliptic-curve cryptography are patented, but DNSCurve does not use any of those types of elliptic-curve cryptography.
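For the curious, here is a toy sketch of the arithmetic behind elliptic-curve key exchange. It uses a tiny textbook curve, y^2 = x^3 + 2x + 2 over the integers mod 17 with base point (5, 1), which is far too small to be secure and is not the curve DNSCurve uses. The secret values 3 and 10 are arbitrary picks for the demo.

```python
P_MOD = 17   # toy prime field (assumption for illustration only)
A = 2        # curve coefficient a in y^2 = x^3 + a*x + b (b = 2 is not needed below)
G = (5, 1)   # base point on the toy curve


def add(p1, p2):
    """Add two curve points; None stands for the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # a point plus its mirror image is the identity
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD) % P_MOD
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD
    x3 = (slope * slope - x1 - x2) % P_MOD
    y3 = (slope * (x1 - x3) - y1) % P_MOD
    return (x3, y3)


def scalar_mult(k, point):
    """Compute k * point with double-and-add."""
    result = None
    while k > 0:
        if k & 1:
            result = add(result, point)
        point = add(point, point)
        k >>= 1
    return result


# Each side keeps a secret number and publishes secret * G.
alice_secret, bob_secret = 3, 10
alice_public = scalar_mult(alice_secret, G)
bob_public = scalar_mult(bob_secret, G)

# Each side combines its own secret with the other's public point.
alice_shared = scalar_mult(alice_secret, bob_public)
bob_shared = scalar_mult(bob_secret, alice_public)
print(alice_shared == bob_shared)
```

Both sides land on the same point without ever sending their secrets, and recovering a secret from a public point is the hard problem that real (much larger) curves rely on.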

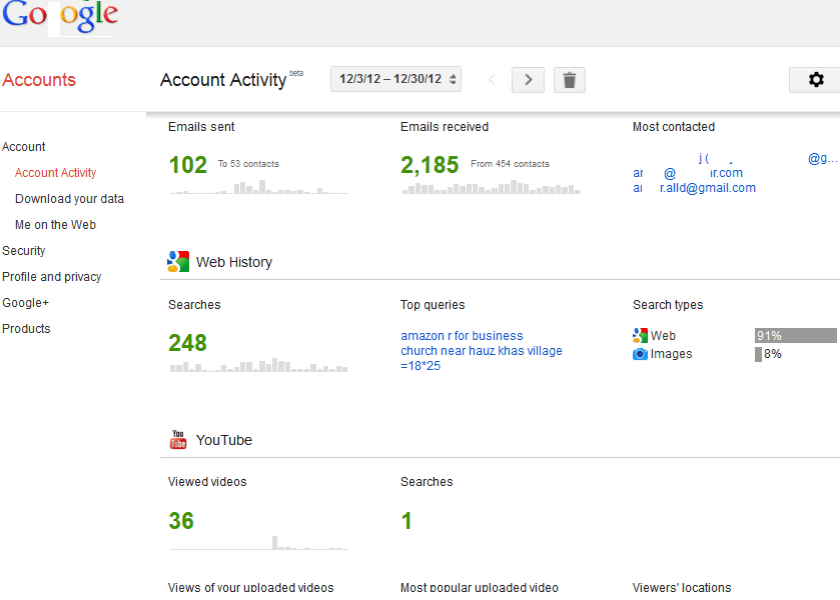

Notice the use of a bigger font for the overall number of emails, as well as the smaller bar plots. I would say they are almost sparklines or spark bar plots, if you will excuse my Tufte.
