I use Windows 7 on my laptop (it came pre-installed) and Ubuntu in VMware Player. What are the advantages of using VMware Player instead of creating a dual-boot system? Well, I can quickly shift from Ubuntu to Windows and back again without restarting my computer every time. This approach lets me use software that runs only on Windows alongside software like Rattle, the R data mining GUI, that is much easier to install on Linux.

However, if your statistical software is on your virtual disk and your data is on your Windows disk, you need a way to move data from Windows to Ubuntu.

The solution to this, as per the forums, is: http://communities.vmware.com/thread/55242

Open My Computer and browse to the folder you want to share. Right-click on the folder and select Properties. Go to the Sharing tab and select the radio button to “Share this Folder”. Change the default generated name if you wish; add a description if you wish. Click the Permissions button to modify the security settings for which users can read from or write to the share.

On the Linux side, it depends on the distro, the shell, and the window manager.

Well, Ubuntu makes it really easy to configure the Linux steps to move data between Windows and Linux partitions.

NEW UPDATE-

VMware makes it easy to share between your Windows (host) and Linux (guest) OS.

Step 1

and step 2

Do this

and

Start the Wizard

When you finish the wizard and share a drive or folder, hey, where do you see your shared ones?

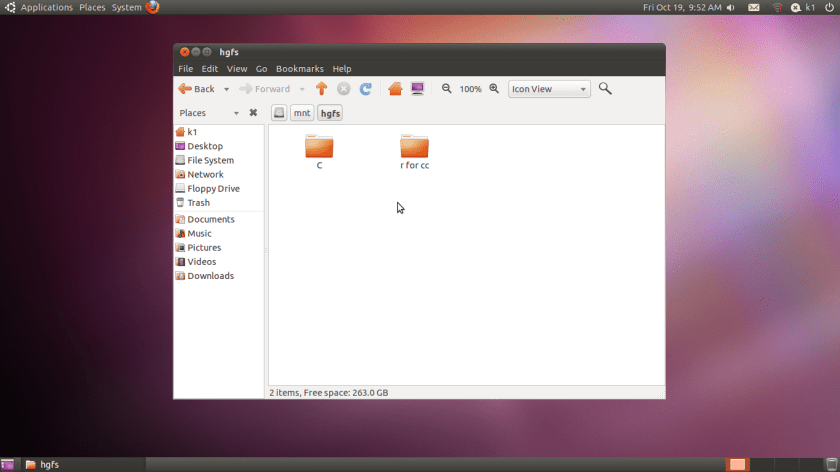

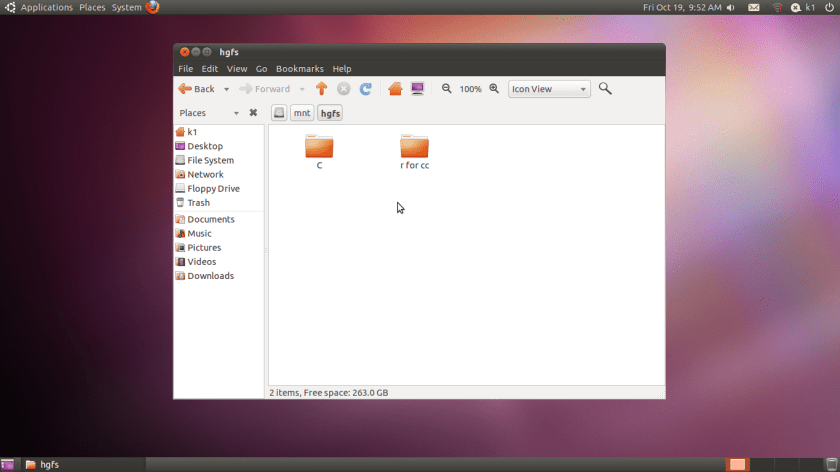

Look in this folder in Linux: /mnt/hgfs (bingo!)
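To check from the terminal that the share really is there, and to pull a file across, something like the following sketch may help. The function names `list_shares` and `copy_from_share` and the `HGFS_ROOT` variable are hypothetical, made up for this example; only the /mnt/hgfs path comes from the steps above.

```shell
#!/bin/sh
# Root where VMware shared folders show up in the guest (path from the post).
# HGFS_ROOT is a hypothetical override, handy if your mount point differs.
HGFS_ROOT="${HGFS_ROOT:-/mnt/hgfs}"

# list_shares: print one shared-folder name per line,
# or fail with a message if the mount point does not exist.
list_shares() {
    if [ ! -d "$HGFS_ROOT" ]; then
        echo "no shared-folder mount at $HGFS_ROOT" >&2
        return 1
    fi
    ls -1 "$HGFS_ROOT"
}

# copy_from_share SHARE FILE DEST_DIR:
# copy one file from a shared folder into a directory on the guest.
copy_from_share() {
    mkdir -p "$3" && cp "$HGFS_ROOT/$1/$2" "$3/"
}
```

For example, `list_shares` followed by `copy_from_share data sales.csv "$HOME/work"` would copy a file named sales.csv from a share named data (both names are placeholders). If the listing comes back empty, one likely cause is that VMware Tools is not installed in the guest.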

Hacker HW 1: Make this folder, /mnt/hgfs, a shortcut in Places at Ubuntu startup
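One simple stand-in for a Places shortcut is a symbolic link in your home directory, which then shows up in the file browser like any other folder. This is a swapped-in technique, not a real Places bookmark, and the function name `link_share_root` and the default link name `~/hgfs` are hypothetical.

```shell
#!/bin/sh
# link_share_root [TARGET] [LINK]:
# create a symlink pointing at the shared-folder root.
link_share_root() {
    target="${1:-/mnt/hgfs}"
    link="${2:-$HOME/hgfs}"
    # Leave an existing file, folder, or link alone rather than overwrite it.
    if [ -e "$link" ] || [ -L "$link" ]; then
        echo "$link already exists" >&2
        return 1
    fi
    ln -s "$target" "$link"
}
```

Running `link_share_root` with no arguments would create ~/hgfs pointing at /mnt/hgfs. Because it is an ordinary file on disk, the link is still there after a restart.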

Hacker HW 2:

- Upload your VM dark data to Ubuntu One using an anon email

- Delete the VM

- Purge using software XX

- Reinstall the VM and bring back the backup

- Note the time it takes to do this

General Sharing in Windows

Just open the Network tab in Ubuntu (see screenshots below).

Windows will now ask your Ubuntu user for a login.

Once you are logged in to Windows from within Ubuntu in VMware, this is what happens:

You see a tab called “users on (your Windows username)-pc” appear on your Ubuntu desktop (see the top right of the screenshot).

If you double-click it, you see your Windows path.

You can now just click and drag data between your Windows and Linux partitions, just the way you do it in Windows.

So based on this, if you want to build decision trees, artificial neural networks, regression models, and even time series models for zero capital expenditure, you can use Ubuntu and R without compromising on your organization's Windows-only IT policy (there is a shortage of Ubuntu-trained IT administrators in the enterprise world).

Revised Installation Procedure for utilizing Ubuntu/R/Rattle data mining on your Windows PC.

Using VMware to build a free data mining system in R, as well as isolate your analytics system (thus using both Linux and Windows without overburdening your machine).

First Time

- Download and install VMware Player: http://downloads.vmware.com/d/info/desktop_end_user_computing/vmware_player/4_0

- Download Ubuntu (download only): http://www.ubuntu.com/download/ubuntu/download

- Create a new virtual image in VMware Player

- Applications > Terminal: sudo apt-get install r-base (to download and install R)

- sudo R (to open R)

- Once R is open, type install.packages("rattle") to install Rattle

- library(rattle) will load Rattle

- rattle() will open the GUI
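The terminal part of the first-time steps can be sketched as one small script. This is only a sketch under some assumptions: the helper names `run` and `setup_rattle` and the `DRY_RUN` switch are hypothetical, the CRAN mirror URL is just one choice among many, and Rattle may need extra system libraries for its GUI that are not covered here.

```shell
#!/bin/sh
# run: execute a command, or only print it when DRY_RUN is set.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# setup_rattle: install R from the Ubuntu repositories, then Rattle from CRAN.
# sudo is used for the R step to match the "sudo R" habit in the steps above.
setup_rattle() {
    run sudo apt-get update &&
    run sudo apt-get install -y r-base &&
    # The mirror URL below is an assumption; any CRAN mirror should do.
    run sudo Rscript -e 'install.packages("rattle", repos = "https://cloud.r-project.org")'
}
```

Calling `DRY_RUN=1 setup_rattle` prints the three commands without running anything, which is a cheap way to see what will happen before you type your password. Plain `setup_rattle` runs them for real.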

Getting Data from Host to Guest VM

Next Time

- Go to VM Player

- Open the VM

- sudo R in terminal to bring up R

- library(rattle) within R

- rattle()
