For automated report delivery I have often used the send-email options in Base SAS. In R, for scheduling tasks and sending myself automated emails when they complete, I have two R options and one Windows OS scheduling option. Note: red font denotes the parameters that should be changed. Anything else should NOT be changed.

Option 1-

Use the mail package, documented at http://cran.r-project.org/web/packages/mail/mail.pdf

> library(mail)

Attaching package: ‘mail’

The following object(s) are masked from ‘package:sendmailR’:

sendmail

>

> sendmail("ohri2007@gmail.com", subject="Notification from R", message="Calculation finished!", password="rmail")

[1] "Message was sent to ohri2007@gmail.com! You have 19 messages left."

Disadvantage- Only 20 email messages per IP address per day (but that's OK!).

Option 2-

Use the sendmailR package, documented at http://cran.r-project.org/web/packages/sendmailR/sendmailR.pdf

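install.packages("sendmailR")

library(sendmailR)

# Sys.info()[4] is this machine's node name, used to build a sender address

from <- sprintf("<sendmailR@%s>", Sys.info()[4])

to <- "<ohri2007@gmail.com>"

subject <- "Hello from R"

# the body is a list: plain text plus the iris data frame as an attachment

body <- list("It works!", mime_part(iris))

sendmail(from, to, subject, body, control=list(smtpServer="ASPMX.L.GOOGLE.COM"))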

BiocInstaller version 1.2.1, ?biocLite for help

> install.packages(“sendmailR”)

Installing package(s) into ‘/home/ubuntu/R/library’

(as ‘lib’ is unspecified)

also installing the dependency ‘base64’

trying URL ‘http://cran.at.r-project.org/src/contrib/base64_1.1.tar.gz’

Content type ‘application/x-gzip’ length 61109 bytes (59 Kb)

opened URL

==================================================

downloaded 59 Kb

trying URL ‘http://cran.at.r-project.org/src/contrib/sendmailR_1.1-1.tar.gz’

Content type ‘application/x-gzip’ length 6399 bytes

opened URL

==================================================

downloaded 6399 bytes

BiocInstaller version 1.2.1, ?biocLite for help

* installing *source* package ‘base64’ ...

** package ‘base64’ successfully unpacked and MD5 sums checked

** libs

gcc -std=gnu99 -I/usr/local/lib64/R/include -I/usr/local/include -fpic -g -O2 -c base64.c -o base64.o

gcc -std=gnu99 -shared -L/usr/local/lib64 -o base64.so base64.o -L/usr/local/lib64/R/lib -lR

installing to /home/ubuntu/R/library/base64/libs

** R

** preparing package for lazy loading

** help

*** installing help indices

** building package indices ...

** testing if installed package can be loaded

BiocInstaller version 1.2.1, ?biocLite for help

* DONE (base64)

BiocInstaller version 1.2.1, ?biocLite for help

* installing *source* package ‘sendmailR’ ...

** package ‘sendmailR’ successfully unpacked and MD5 sums checked

** R

** preparing package for lazy loading

** help

*** installing help indices

** building package indices ...

** testing if installed package can be loaded

BiocInstaller version 1.2.1, ?biocLite for help

* DONE (sendmailR)

The downloaded packages are in

‘/tmp/RtmpsM222s/downloaded_packages’

> library(sendmailR)

Loading required package: base64

> from <- sprintf("<sendmailR@%s>", Sys.info()[4])

> to <- "<ohri2007@gmail.com>"

> subject <- "Hello from R"

> body <- list("It works!", mime_part(iris))

> sendmail(from, to, subject, body,

+ control=list(smtpServer="ASPMX.L.GOOGLE.COM"))

$code

[1] "221"

$msg

[1] "2.0.0 closing connection ff2si17226764qab.40"

Disadvantage- This worked when I ran it on the Amazon cloud using the BioConductor AMI (free for 2 hours) at http://www.bioconductor.org/help/cloud/

It did NOT work when I tried to use it from my Windows 7 Home Premium PC through my Indian ISP (!!).

It gave me this error:

Error in wait_for(250) :

SMTP Error: 5.7.1 [180.215.172.252] The IP you’re using to send mail is not authorized

PAUSE–

ps- Why do this (send email from R)?

Note that you can add either of the two programs above to the end of any code whose completion you want to be notified about automatically (like daily tasks).

This is mostly done for repeated business analytics tasks (like reports and analyses that need to be run at specific intervals).
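As a rough sketch of what adding a notification to the end of your code could look like, the helper below runs a task and then hands a subject and a message to whatever notifier you give it. The names run_and_notify and print_notifier are my own illustration and not part of either package; the notifier here only prints, so it is an assumption that you would swap in one of the sendmail() calls from Option 1 or Option 2 for a real email.

```r
# Hypothetical helper (not from the mail or sendmailR packages):
# run a task, then report success or failure through `notify`.
run_and_notify <- function(task, notify, job_name = "R job") {
  started <- Sys.time()
  # Capture errors so that a failed job still produces a notification
  result <- tryCatch(
    list(ok = TRUE, value = task(), error = NULL),
    error = function(e) list(ok = FALSE, value = NULL,
                             error = conditionMessage(e))
  )
  elapsed <- round(as.numeric(difftime(Sys.time(), started, units = "secs")), 1)
  subject <- sprintf("%s %s", job_name, if (result$ok) "finished" else "FAILED")
  body <- if (result$ok) {
    sprintf("Completed in %s seconds.", elapsed)
  } else {
    sprintf("Stopped after %s seconds with error: %s", elapsed, result$error)
  }
  notify(subject, body)
  invisible(result)
}

# Stand-in notifier that only prints the message it would send.
# For a real email, replace the body with a sendmail() call.
print_notifier <- function(subject, body) {
  cat("Subject: ", subject, "\n", body, "\n", sep = "")
}

# Example: a toy "daily task" that just adds some numbers
res <- run_and_notify(function() sum(1:10), print_notifier,
                      job_name = "Daily report")
```

Catching the error inside the helper means a failed job still sends a "FAILED" message instead of dying silently, which is usually what you want from an unattended run.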

pps- What else can I do with this?

It can be adapted to send SMS messages, tweets, or even blog posts by email by changing the "to" address appropriately.

Option 3- Use Windows Task Scheduler to run R code automatically (either of the above), or just to send an email

Go to Start > All Programs > Accessories > System Tools > Task Scheduler (or by default C:\Windows\system32\taskschd.msc)

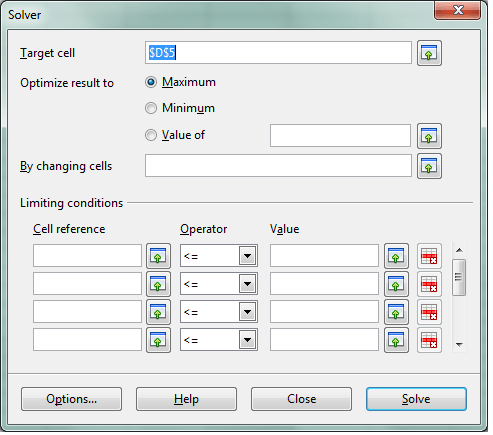

Create a basic task.

Now you can use this to run your daily or otherwise scheduled R code, or to send yourself an email as well.

Then modify the parameters. Note the SMTP server (you can use the Google one from Option 2, ASPMX.L.GOOGLE.COM)

and check that it works!
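If you prefer the command line to the wizard, Windows also ships a schtasks tool that can create the same kind of task. Below is a minimal sketch in R that only builds the command text for you to paste into a Command Prompt; it does not run anything. The function name, the task name, the Rscript.exe location and the script path are all placeholders I made up, so adjust them to your own machine.

```r
# Build (but do not run) a schtasks command that runs an R script every day.
# All names and paths used here are placeholders, not values from this post.
build_schtasks_command <- function(task_name, rscript_exe, script_path,
                                   start_time = "09:00") {
  # schtasks expects the start time as 24-hour HH:MM
  stopifnot(grepl("^([01][0-9]|2[0-3]):[0-5][0-9]$", start_time))
  # /TR holds the command to run; the escaped inner quotes keep paths
  # that contain spaces in one piece
  sprintf('schtasks /Create /SC DAILY /ST %s /TN "%s" /TR "\\"%s\\" \\"%s\\""',
          start_time, task_name, rscript_exe, script_path)
}

# Example with made-up paths: print the command so it can be copied
cmd <- build_schtasks_command(
  task_name   = "DailyReport",
  rscript_exe = "C:\\Program Files\\R\\bin\\Rscript.exe",
  script_path = "C:\\jobs\\report.R",
  start_time  = "06:30"
)
cat(cmd, "\n")
```

I am assuming here that the scheduled script is run with Rscript.exe, which is the usual way to run an R file without opening the console.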

Related

Geeky Things , Bro

–

Configuring IIS on your Windows 7 Home Edition-

Note that the path to do this is-

Control Panel > All Control Panel Items > Programs and Features > Turn Windows features on or off > Internet Information Services

and

http://stackoverflow.com/questions/709635/sending-mail-from-batch-file