Here is a short list of resources and material I put together as starting points for R and cloud computing. It’s a bit messy, but overall it should be quite comprehensive.

Cloud computing is a commonly used expression for a generational change in computing: from desktops and servers to remote, massive, shared computing resources, enabled by high bandwidth across the internet.

As per the National Institute of Standards and Technology (NIST) definition:

Cloud computing is a model for enabling convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.

(Citation: The NIST Definition of Cloud Computing. Authors: Peter Mell and Tim Grance. Version 15, 10-7-09. National Institute of Standards and Technology, Information Technology Laboratory. http://csrc.nist.gov/groups/SNS/cloud-computing/cloud-def-v15.doc)

R is an integrated suite of software facilities for data manipulation, calculation and graphical display.

From http://cran.r-project.org/doc/FAQ/R-FAQ.html#R-Web-Interfaces

R Web Interfaces

Rweb is developed and maintained by Jeff Banfield. The Rweb Home Page provides access to all three versions of Rweb—a simple text entry form that returns output and graphs, a more sophisticated JavaScript version that provides a multiple window environment, and a set of point and click modules that are useful for introductory statistics courses and require no knowledge of the R language. All of the Rweb versions can analyze Web accessible datasets if a URL is provided.

The paper “Rweb: Web-based Statistical Analysis”, providing a detailed explanation of the different versions of Rweb and an overview of how Rweb works, was published in the Journal of Statistical Software (http://www.jstatsoft.org/v04/i01/).

Ulf Bartel has developed R-Online, a simple on-line programming environment for R which intends to make the first steps in statistical programming with R (especially with time series) as easy as possible. There is no need for a local installation since the only requirement for the user is a JavaScript capable browser. See http://osvisions.com/r-online/ for more information.

Rcgi is a CGI WWW interface to R by MJ Ray. It had the ability to use “embedded code”: you could mix user input and code, allowing the HTML author to do anything from load in data sets to enter most of the commands for users without writing CGI scripts. Graphical output was possible in PostScript or GIF formats and the executed code was presented to the user for revision. However, it is not clear if the project is still active.

Currently, a modified version of Rcgi by Mai Zhou (actually, two versions: one with (bitmap) graphics and one without) as well as the original code are available from http://www.ms.uky.edu/~statweb/.

CGI-based web access to R is also provided at http://hermes.sdu.dk/cgi-bin/go/. There are many additional examples of web interfaces to R which basically allow to submit R code to a remote server, see for example the collection of links available from http://biostat.mc.vanderbilt.edu/twiki/bin/view/Main/StatCompCourse.

David Firth has written CGIwithR, an R add-on package available from CRAN. It provides some simple extensions to R to facilitate running R scripts through the CGI interface to a web server, and allows submission of data using both GET and POST methods. It is easily installed using Apache under Linux and in principle should run on any platform that supports R and a web server provided that the installer has the necessary security permissions. David’s paper “CGIwithR: Facilities for Processing Web Forms Using R” was published in the Journal of Statistical Software (http://www.jstatsoft.org/v08/i10/). The package is now maintained by Duncan Temple Lang and has a web page at http://www.omegahat.org/CGIwithR/.

Rpad, developed and actively maintained by Tom Short, provides a sophisticated environment which combines some of the features of the previous approaches with quite a bit of JavaScript, allowing for a GUI-like behavior (with sortable tables, clickable graphics, editable output), etc.

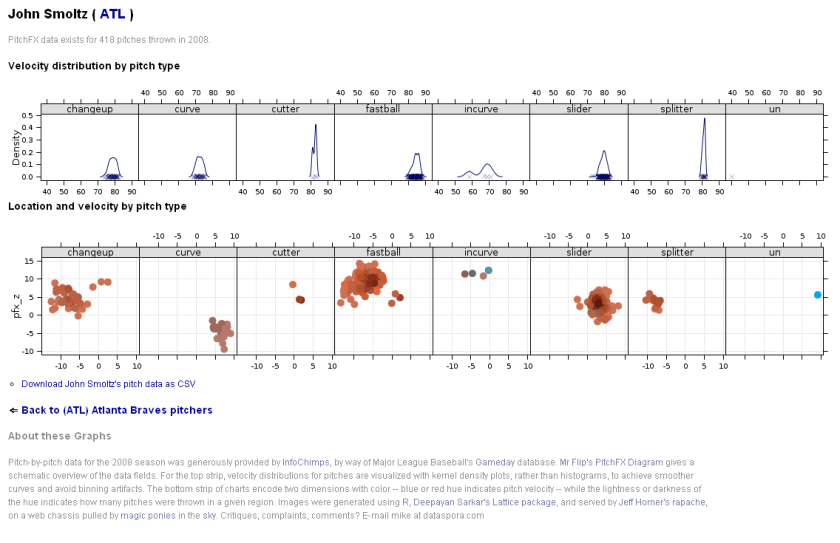

Jeff Horner is working on the R/Apache Integration Project which embeds the R interpreter inside Apache 2 (and beyond). A tutorial and presentation are available from the project web page at http://biostat.mc.vanderbilt.edu/twiki/bin/view/Main/RApacheProject.

Rserve is a project actively developed by Simon Urbanek. It implements a TCP/IP server which allows other programs to use facilities of R. Clients are available from the web site for Java and C++ (and could be written for other languages that support TCP/IP sockets).

OpenStatServer is being developed by a team led by Greg Warnes; it aims “to provide clean access to computational modules defined in a variety of computational environments (R, SAS, Matlab, etc) via a single well-defined client interface” and to turn computational services into web services.

Two projects use PHP to provide a web interface to R. R_PHP_Online by Steve Chen (though it is unclear if this project is still active) is somewhat similar to the above Rcgi and Rweb. R-php is actively developed by Alfredo Pontillo and Angelo Mineo and provides both a web interface to R and a set of pre-specified analyses that need no R code input.

webbioc is “an integrated web interface for doing microarray analysis using several of the Bioconductor packages” and is designed to be installed at local sites as a shared computing resource.

Rwui is a web application to create user-friendly web interfaces for R scripts. All code for the web interface is created automatically. There is no need for the user to do any extra scripting or learn any new scripting techniques. Rwui can also be found at http://rwui.cryst.bbk.ac.uk.

Finally, the R.rsp package by Henrik Bengtsson introduces “R Server Pages”. Analogous to Java Server Pages, an R server page is typically HTML with embedded R code that gets evaluated when the page is requested. The package includes an internal cross-platform HTTP server implemented in Tcl, so provides a good framework for including web-based user interfaces in packages. The approach is similar to the use of the brew package with Rapache with the advantage of cross-platform support and easy installation.
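The server-page idea (markup with embedded code that is evaluated when the page is requested) can be sketched in a few lines. This is a toy illustration in Python, not R, and it is not how R.rsp itself is implemented: the `<%= ... %>` delimiter, the `render` function and the variable `x` are all assumptions made for the example.

```python
import re

# JSP-style output tag, assumed here for illustration: <%= expression %>
TAG = re.compile(r"<%=(.*?)%>", re.DOTALL)


def render(template, variables):
    """Return the page with each embedded expression replaced by its value."""

    def evaluate(match):
        expression = match.group(1).strip()
        # eval() keeps the toy short; never run it on untrusted templates.
        return str(eval(expression, {}, dict(variables)))

    return TAG.sub(evaluate, template)


# Hypothetical page: the expression is only evaluated when render() is called,
# which stands in for "when the page is requested".
page = "<html><body><p>Mean: <%= sum(x) / len(x) %></p></body></html>"

print(render(page, {"x": [1, 2, 3, 4]}))
```

A real server-page engine would also handle code blocks that produce no output, escaping, and errors in the embedded code; the sketch only covers the substitute-on-request step.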

Additional R Cloud Computing Use Cases

http://wwwdev.ebi.ac.uk/Tools/rcloud/

ArrayExpress R/Bioconductor Workbench

Remote access to R/Bioconductor on EBI’s 64-bit Linux Cluster

Start the workbench by downloading the package for your operating system (Macintosh or Windows), or via Java Web Start, and you will get access to an instance of R running on one of EBI’s powerful machines. You can install additional packages, upload your own data, work with graphics and collaborate with colleagues, all as if you are running R locally, but unlimited by your machine’s memory, processor or data storage capacity.


Using R with Google Docs

http://www.omegahat.org/RGoogleDocs/run.pdf

It uses the XML and RCurl packages and illustrates that it is relatively quick and easy to use their primitives to interact with Web services.

Using R with Amazon

Citation

http://rgrossman.com/2009/05/17/running-r-on-amazons-ec2/

Amazon’s EC2 is a type of cloud that provides on-demand computing infrastructure in the form of Amazon Machine Images, or AMIs. In general, these types of cloud provide several benefits:

- Simple and convenient to use. An AMI contains your applications, libraries, data and all associated configuration settings. You simply access it. You don’t need to configure it. This applies not only to applications like R, but also can include any third-party data that you require.

- On-demand availability. AMIs are available over the Internet whenever you need them. You can configure the AMIs yourself without involving the service provider. You don’t need to order any hardware and set it up.

- Elastic access. With elastic access, you can rapidly provision and access the additional resources you need. Again, no human intervention from the service provider is required. This type of elastic capacity can be used to handle surge requirements when you might need many machines for a short time in order to complete a computation.

- Pay per use. The cost of 1 AMI for 100 hours and 100 AMIs for 1 hour is the same. With pay per use pricing, which is sometimes called utility pricing, you simply pay for the resources that you use.
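The pay-per-use point is simple arithmetic: under a flat hourly rate, the bill depends only on the total number of instance-hours. Here is a minimal sketch in Python, where the rate is a made-up placeholder (not an actual Amazon price) and billing is assumed to be strictly per instance-hour, with no minimums or discounts:

```python
def usage_cost_cents(instances, hours, rate_cents_per_hour):
    """Cost under flat utility pricing: instance-hours times the hourly rate."""
    return instances * hours * rate_cents_per_hour


# Hypothetical rate in cents per instance-hour, for illustration only.
RATE_CENTS = 10

one_machine_long = usage_cost_cents(instances=1, hours=100, rate_cents_per_hour=RATE_CENTS)
many_machines_short = usage_cost_cents(instances=100, hours=1, rate_cents_per_hour=RATE_CENTS)

# Both runs use 100 instance-hours, so the cost is identical.
print(one_machine_long, many_machines_short, one_machine_long == many_machines_short)
```

The practical consequence is that a job which parallelizes well can finish in roughly 1 hour on 100 machines for the same cost as 100 hours on one.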

Connecting to R on Amazon EC2: Detailed tutorials

Ubuntu Linux version

https://decisionstats.com/2010/09/25/running-r-on-amazon-ec2/

and Windows R version

https://decisionstats.com/2010/10/02/running-r-on-amazon-ec2-windows/

Connecting R to Data on Google Storage and Computing on Google Prediction API

https://github.com/onertipaday/predictionapirwrapper

R wrapper for working with Google Prediction API

This package consists of a set of functions that allow the user to test the Google Prediction API from R.

It requires the user to have access to both Google Storage for Developers and Google Prediction API:

see http://code.google.com/apis/storage/ and http://code.google.com/apis/predict/ for details.

Example usage:

# This example requires that you have previously created a bucket named data_language in your Google Storage and uploaded a CSV file named language_id.txt (your data) into this bucket – see for details

library(predictionapirwrapper)

Elastic R for Cloud Computing

http://user2010.org/tutorials/Chine.html

Abstract

Elastic-R is a new portal built using the Biocep-R platform. It enables statisticians, computational scientists, financial analysts, educators and students to use cloud resources seamlessly; to work with R engines and use their full capabilities from within simple browsers; to collaborate, share and reuse functions, algorithms, user interfaces, R sessions, servers; and to perform elastic distributed computing with any number of virtual machines to solve computationally intensive problems.

Also see Karim Chine’s http://biocep-distrib.r-forge.r-project.org/

R for Salesforce.com

At the point of writing this, there seem to be zero R based apps on Salesforce.com. This could be a big opportunity for developers, as both Apex and R have similar structures. Developers could write free code in R and charge for their translated version in Apex on Salesforce.com.

Force.com and Salesforce have many (1009) apps at http://sites.force.com/appexchange/home for cloud computing for businesses, but very few forecasting and statistical simulation apps.

An example of a Monte Carlo based app is here:

http://sites.force.com/appexchange/listingDetail?listingId=a0N300000016cT9EAI#

These are like iPhone apps except meant for business purposes (I am unaware if any university is offering Salesforce.com integration, though Google Apps and Amazon related research seems to be on).

Force.com uses a language called Apex; see http://wiki.developerforce.com/index.php/App_Logic and http://wiki.developerforce.com/index.php/An_Introduction_to_Formulas

Apex is similar to R in that it is object-oriented.

SAS Institute has an existing product for taking in Salesforce.com data.

A new SAS data surveyor is available to access data from the Customer Relationship Management (CRM) software vendor Salesforce.com (see http://support.sas.com/documentation/cdl/en/whatsnew/62580/HTML/default/viewer.htm#datasurveyorwhatsnew902.htm).

Personal note: Mentioning SAS in an email to an R list is a big no-no in terms of getting a response and love. The same goes for being careless about which R help list to email (such as R-devel, R-packages or R-help).

For a Python-based cloud, see http://pi-cloud.com