BORN IN THE USA

Here is an interview with Zach Goldberg, product manager of the Google Prediction API, Google’s next generation machine learning service that offers analytics as an API: a cloud-based way to build models from the browser.

Ajay- Describe your journey in science and technology from high school to your current job at Google.

Zach- First, thanks so much for the opportunity to do this interview Ajay! My personal journey started in college where I worked at a startup named Invite Media. From there I transferred to the Associate Product Manager (APM) program at Google. The APM program is a two year rotational program. I did my first year working in display advertising. After that I rotated to work on the Prediction API.

Ajay- How does the Google Prediction API help an average business analytics customer who is already using enterprise software and servers to generate business forecasts? How does the Google Prediction API fit in with or complement other APIs in the Google API suite?

Zach- The Google Prediction API is a cloud based machine learning API. We offer the ability for anybody to sign up and within a few minutes have their data uploaded to the cloud, a model built and an API to make predictions from anywhere. Traditionally the task of implementing predictive analytics inside an application required a fair amount of domain knowledge; you had to know a fair bit about machine learning to make it work. With the Google Prediction API you only need to know how to use an online REST API to get started.

You can learn more about how we help businesses by watching our video and going to our project website.

Ajay- What are the additional use cases of the Google Prediction API that you think traditional enterprise software in business analytics ignores, or is not so strong on? For what use cases would you suggest an enterprise NOT use the Google Prediction API?

Zach- We are living in a world that is changing rapidly thanks to technology. Storing, accessing, and managing information is much easier and more affordable than it was even a few years ago. That creates exciting opportunities for companies, and we hope the Prediction API will help them derive value from their data.

The Prediction API focuses on providing predictive solutions to two types of problems: regression and classification. Businesses facing problems where there is sufficient data to describe an underlying pattern in either of these two areas can expect to derive value from using the Prediction API.
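As a rough illustration of the two problem types (a toy sketch in Python, not code from Google or the Prediction API; the data and function names are made up for this example): regression predicts a number, while classification predicts a label.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x (regression: numeric output)."""
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return intercept, slope

def nearest_label(labelled_points, x):
    """1-nearest-neighbour on one feature (classification: label output)."""
    return min(labelled_points, key=lambda pair: abs(pair[0] - x))[1]

# Hypothetical toy data: sales happen to follow 1 + 2 * ad_spend exactly.
ad_spend = [1.0, 2.0, 3.0, 4.0]
sales = [3.0, 5.0, 7.0, 9.0]
intercept, slope = fit_line(ad_spend, sales)
forecast = intercept + slope * 5.0  # numeric prediction for ad_spend = 5

# Hypothetical labelled examples: (months as a customer, outcome).
labelled = [(0.5, "churn"), (1.0, "churn"), (6.0, "stay"), (7.5, "stay")]
label = nearest_label(labelled, 5.0)  # label of the closest known example
```

A hosted service would pick and tune the model for you; the point here is only the difference in the kind of answer each problem type returns.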

Ajay- What are your separate incentives for teaching Google APIs to academics or researchers in universities globally?

Zach- I’d refer you to our university relations page–

Google thrives on academic curiosity. While we do significant in-house research and engineering, we also maintain strong relations with leading academic institutions world-wide pursuing research in areas of common interest. As part of our mission to build the most advanced and usable methods for information access, we support university research, technological innovation and the teaching and learning experience through a variety of programs.

Ajay- What is the biggest challenge you face while communicating about the Google Prediction API to traditional users of enterprise software?

Zach- Businesses often expect that implementing predictive analytics is going to be very expensive and require a lot of resources. Many have already begun investing heavily in this area. Quite often we’re faced with surprise, and even skepticism, when they see the simplicity of the Google Prediction API. We work really hard to provide a very powerful solution and take care of the complexity of building high quality models behind the scenes so businesses can focus more on building their business and less on machine learning.

Some ways to test and use cloud computing for free for yourself-

The folks at Microsoft Azure announced a 90 day free trial.

What is Cassandra? Why is this relevant to analytics?

It is the next generation database that you want your analytics software to be compatible with. Also, it is quite easy to learn. Did I mention that if you say “I know how to Hadoop/Big Data” on your resume, you just raised your market price by an extra $30K? I mean, there is a big demand for analysts and statisticians who can think about and slice data from a business perspective AND write that Hadoop/Big Data code.

How do I learn more?

http://www.datastax.com/events/cassandrasf2011

What’s in it for you?

Well, I shifted my poetry to https://poemsforkush.wordpress.com/

On Decisionstats.com, this is what I love to write about! I find it cool.

——————————————————–

It’s been almost a year since the first Apache Cassandra Summit in San Francisco. Once again we’ve reserved the beautiful Mission Bay Conference Center. Because the Cassandra community has grown so much in the last year, we’re taking the entire venue. This year’s event will not only include Cassandra, but also Brisk, Apache Hadoop, and more.

We have two rooms set aside for presentations. This year we also have multiple rooms set aside for Birds of a Feather talks, committer meetups, and other small discussions.

We’ve sent out surveys to all the attendees of last year’s conference, as well as a few hundred other members of the community. Below are some of the topics people have requested so far.

If you have topics you’d like to see covered, or you would like to submit a presentation, send a note to lynnbender@datastax.com.

We’ll be providing lunch as well as continuous beverage service — so that you won’t have to take your mind outside the information windtunnel.

We’ll also be hosting a post event party. Details coming shortly.

Submissions and suggestions: If you wish to propose a talk or presentation, or have a suggestion on a topic you’d like to see covered, send a note to Lynn Bender at lynnbender@datastax.com

Sponsorship opportunities: Contact Michael Weir at DataStax: mweir@datastax.com

Apache Cassandra, Cassandra, Apache Hadoop, Hadoop, and Apache are either registered trademarks or trademarks of the Apache Software Foundation in the United States and/or other countries, and are used with permission as of 2011. The Apache Software Foundation has no affiliation with and does not endorse, or review the materials provided at this event, which is managed by DataStax.

The Apache Cassandra Project develops a highly scalable second-generation distributed database, bringing together Dynamo’s fully distributed design and Bigtable’s ColumnFamily-based data model.

Cassandra was open sourced by Facebook in 2008, and is now developed by Apache committers and contributors from many companies.

However, this is what Phil Rack, the reseller, is quoting on http://www.minequest.com/Pricing.html

Windows Desktop Price: $884 on 32-bit Windows and $1,149 on 64-bit Windows.

The Bridge to R is available on the Windows platforms and is available for free to customers who license WPS through MineQuest, LLC. Companies and organizations outside of North America may purchase a license for the Bridge to R which starts at $199 per desktop or $599 per server.

Windows Server Price: $1,903 per logical CPU for 32-bit and $2,474 for 64-bit.

Note that Linux server versions are available but do not yet support the Eclipse IDE and are command line only.

WPS sure seems to be going well, but their pricing is no longer fixed, and on the home website you gotta fill in a form. Ditto for the 30 day free evaluation.

http://www.teamwpc.co.uk/products/wps/modules/core

The table below provides a summary of data formats presently supported by the WPS Core module.

| Data File Format | Uncompressed: Read | Uncompressed: Write | Compressed: Read | Compressed: Write |
|---|---|---|---|---|
| SD2 (SAS version 6 data set) | | | | |
| SAS7BDAT (SAS version 7 data set) | | | | |
| SAS7BDAT (SAS version 8 data set) | | | | |
| SAS7BDAT (SAS version 9 data set) | | | | |
| SASSEQ (SAS version 8/9 sequential file) | | | | |
| V8SEQ (SAS version 8 sequential file) | | | | |
| V9SEQ (SAS version 9 sequential file) | | | | |
| WPD (WPS native data set) | | | | |
| WPDSEQ (WPS native sequential file) | | | | |
| XPORT (transport format) | | | | |

Additional access to EXCEL, SPSS and dBASE files is supported by utilising the WPS Engine for DB Files module.

And they had a new product release on Valentine’s Day 2011 (oh, these Europeans!)

From the press release at http://www.teamwpc.co.uk/press/wps2_5_1_released

WPS Version 2.5.1 Released

New language support, new data engines, larger datasets, improved scalability

LONDON, UK – 14 February 2011 – World Programming today released version 2.5.1 of their WPS software for workstations, servers and mainframes.

WPS is a competitively priced, high performance, highly scalable data processing and analytics software product that allows users to execute programs written in the language of SAS. WPS is supported on a wide variety of hardware and operating system platforms and can connect to and work with many types of data with ease. The WPS user interface (Workbench) is frequently praised for its ease of use and flexibility, with the option to include numerous third-party extensions.

This latest version of the software has the ability to manipulate even greater volumes of data, removing the previous 2^31 (2 billion) limit on number of observations.

Complementing extended data processing capabilities, World Programming has worked hard to boost the performance, scalability and reliability of the WPS software to give users the confidence they need to run heavy workloads whilst delivering maximum value from available computer power.

WPS version 2.5.1 offers additional flexibility with the release of two new data engines for accessing Greenplum and SAND databases. WPS now comes with eleven data engines and can access a huge range of commonly used and industry-standard file-formats and databases.

Support in WPS for the language of SAS continues to expand with more statistical procedures, data step functions, graphing controls and many other language items and options.

WPS version 2.5.1 is available as a free upgrade to all licensed users of WPS.

Summary of Main New Features:

- Supporting Even Larger Datasets

WPS is now able to process very large data sets by lifting completely the previous size limit of 2^31 observations.

- Performance and Scalability Boosted

Performance and scalability improvements across the board combine to ensure even the most demanding large and concurrent workloads are processed efficiently and reliably.

- More Language Support

WPS 2.5.1 continues the expansion of its language support with over 70 new language items, including new Procedures, Data Step functions and many other language items and options.

- Statistical Analysis

The procedure support in WPS Statistics has been expanded to include PROC CLUSTER and PROC TREE.

- Graphical Output

The graphical output from WPS Graphing has been expanded to accommodate more configurable graphics.

- Hash Tables

Support is now provided for hash tables.

- Greenplum®

A new WPS Engine for Greenplum provides dedicated support for accessing the Greenplum database.

- SAND®

A new WPS Engine for SAND provides dedicated support for accessing the SAND database.

- Oracle®

Bulk loading support now available in the WPS Engine for Oracle.

- SQL Server®

To enhance existing SQL Server database access, there is a new SQLSERVR (please note spelling) facility in the ODBC engine.

More Information:

Existing Users should visit www.teamwpc.co.uk/support/wps/release where you can download a readme file containing more information about all the new features and fixes in WPS 2.5.1.

New Users should visit www.teamwpc.co.uk/products/wps where you can explore in more detail all the features available in WPS or request a free evaluation.

and from http://www.teamwpc.co.uk/products/wps/data it seems they are going on the BIG DATA submarine as well-

WPS is now able to handle extremely large data sets now that the previous limit of 2^31 observations has been lifted.
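For a sense of where a number like 2^31 comes from, here is a small Python sketch. It assumes the old limit came from counting observations in a signed 32-bit integer; that is my guess, since the press release does not say how WPS stored the count, and the function name is made up.

```python
import struct

INT32_MAX = 2**31 - 1  # largest value a signed 32-bit integer can hold

def fits_in_int32(n_obs):
    """Return True if n_obs can be packed as a signed 32-bit integer."""
    try:
        struct.pack("<i", n_obs)  # "<i" = little-endian signed 32-bit int
        return True
    except struct.error:
        return False

old_limit = 2**31  # 2,147,483,648: the "2 billion" in the press release
two_billion_ok = fits_in_int32(2_000_000_000)    # still fits
three_billion_ok = fits_in_int32(3_000_000_000)  # does not fit in 32 bits
```

A counter that moves to 64 bits has room for roughly 9.2 quintillion rows, which is why lifting this kind of limit usually means the ceiling stops mattering in practice.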

I recently asked some friends from my Twitter lists for their take on 2011. At least 3 of them responded with an answer, 1 said they were still working on it, and 1 claimed a recent office event.

Anyways- I take note of the view of forecasting from

http://www.uiah.fi/projekti/metodi/190.htm

The most primitive method of forecasting is guessing. The result may be rated acceptable if the person making the guess is an expert in the matter.

Ajay- People will forecast at the end of 2010 for 2011. Many of them will get their forecasts wrong, some very wrong, but by Dec 2011 most of them will be writing forecasts for 2012. Almost no one will get called on it by irate users or readers (hey, you got 4 out of 7 wrong in last year’s forecast!). It just won’t happen. People thrive on hope. So does marketing. In 2011, and before.

and some forecasts from Tom Davenport’s The International Institute for Analytics (IIA) at

http://iianalytics.com/2010/12/2011-predictions-for-the-analytics-industry/

Regulatory and privacy constraints will continue to hamper growth of marketing analytics.

(I wonder how privacy and analytics can coexist in peace forever. One view is that model building can use anonymized data: suppose your IP address was anonymized using a standard secret Coca-Cola formula. Then whatever model does get built would not be of concern to you individually, as your privacy is protected by the anonymization formula.)
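One way to picture that “secret formula” is a keyed hash. The Python sketch below is only an illustration under my own assumptions: the function name and key are made up, and HMAC-SHA256 is one possible choice, not something any vendor mentioned here has said they use. Strictly speaking this is pseudonymization, since whoever holds the key can recompute the mapping.

```python
import hashlib
import hmac

def pseudonymize_ip(ip_address, secret_key):
    """Replace an IP address with a keyed hash (HMAC-SHA256, hex encoded)."""
    return hmac.new(secret_key, ip_address.encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical key; in practice it would be generated randomly and kept private.
secret_key = b"the-secret-formula"

token_a = pseudonymize_ip("192.0.2.10", secret_key)
token_a_again = pseudonymize_ip("192.0.2.10", secret_key)
token_b = pseudonymize_ip("192.0.2.11", secret_key)
```

The same address always maps to the same token, so a model can still group one visitor’s records together, while the raw address never appears in the modelling data.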

Anyway- back to the question I asked-

What are the top 5 events in your industry (events as in things that occurred, not conferences) and what are the top 3 trends in 2011?

I define my industry as being online technology writing- research (with a heavy skew on stat computing)

My top 5 events for 2010 were-

1) Consolidation- The Big 5 software providers in BI and Analytics bought more, sued more, and consolidated more. The valuations rose, and rose, leading to even more smaller players entering. Thus consolidation proved an oxymoron, as the total number of influential AND disruptive players grew.

2) Cloudy Computing- Computing shifted away from the desktop, but more to the mobile and the tablet than to the cloud. iPad front end with Amazon EC2 backend- yup, it happened.

3) Open Source grew louder- Yes, it got more clients, and more revenue. Did it get more market share? That depends on whether you define market share by revenues or by users.

Both Open Source and Closed Source had a good year- the pie grew faster and bigger, so no one minded as long as their slices grew bigger.

4) We didn’t see that coming –

Technology continued to surprise with events (that’s what we love! the surprises)

Revolution Analytics broke through R’s Big Data barrier, Tableau Software created a big buzz, and Wikileaks and Chinese firewalls gave technology an entirely new dimension (though not a universally popular one).

People fought wars on emails and servers and social media. Unfortunately, the ones fighting real wars in 2009 continued to fight them in 2010 too.

5) Money-

SAP, SAS, IBM, Oracle, Google, and Microsoft made more money than ever before. Only Facebook got a movie made about itself. Venture capitalists pumped money into promising startups, really as if in a hurry to park money before tax cuts expired in some countries.

2011 Top Three Forecasts

1) Surprises- Expect to get surprised at least 10% of the time in business events. As the internet grows, the communication cycle shortens, the hype cycle amplifies buzz, and more unstructured data is created (esp. for marketing analytics), leading to enhanced volatility.

2) Growth- Yes, we predict technology will grow faster than the automobile industry. Game changers may happen in the form of Chrome OS (really, it’s Linux, guys) and customer adaptability to new USER INTERFACES. Design will matter much more in technology: on your phone, on your desktop, and on your internet. Packaging sells.

False Top Trend 3) I will write a book on business analytics in 2011. Yes, it is true, and I am working with a publisher. No, it is not really going to be a top 3 event for anyone except me, the publisher, and the lucky guys who read it.

3) Creating technology and technically enabling creativity will converge at an accelerated rate. Use of widgets, GUIs, snippets, and IDEs will ensure creative left brains can code more easily, and right brains can design faster and better, due to a global supply chain of techie and artsy professionals.

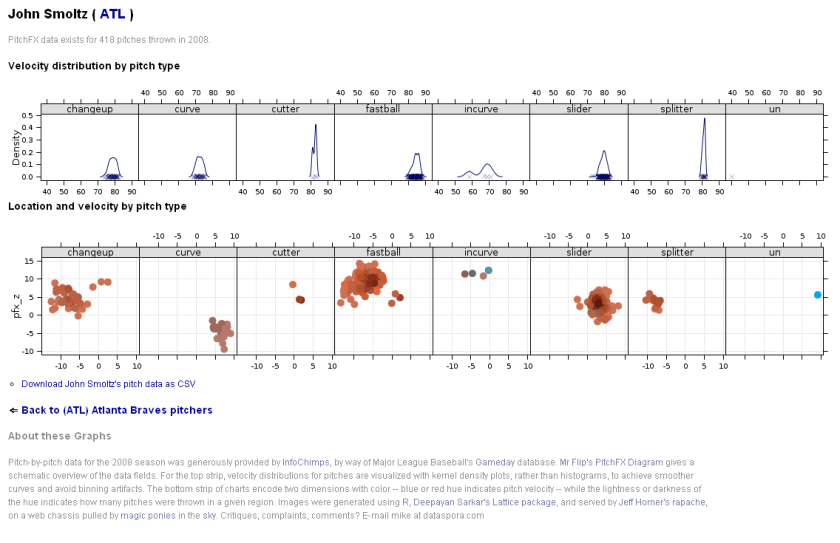

I am currently trying out RApache, one more excellent R product from Vanderbilt’s excellent Dept of Biostatistics and its prodigious coder Jeff Horner.

I really liked the virtual machine idea- you can download a virtual image of Rapache and play with it- .vmx is easy to create and great to share-

Basically using R Apache (with an EC2 on backend) can help you create customized dashboards, BI apps, etc all using R’s graphical and statistical capabilities.

What’s R Apache?

As per

http://biostat.mc.vanderbilt.edu/wiki/Main/RapacheWebServicesReport

Rapache embeds the R interpreter inside the Apache 2 web server. By doing this, Rapache realizes the full potential of R and its facilities over the web. R programmers configure Apache by mapping Uniform Resource Locators (URLs) to either R scripts or R functions. The R code relies on CGI variables to read a client request and R’s input/output facilities to write the response.

One advantage to Rapache’s architecture is robust multi-process management by Apache. In contrast to Rserve and RSOAP, Rapache is a pre-fork server utilizing HTTP as the communications protocol. Another advantage is a clear separation, a loose coupling, of R code from client code. With Rserve and RSOAP, the client must send data and R commands to be executed on the server. With Rapache the only client requirements are the ability to communicate via HTTP. Additionally, Rapache gains significant authentication, authorization, and encryption mechanisms by virtue of being embedded in Apache.
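To make the “map a URL to a function, read the request variables, write a response” idea concrete, here is a toy sketch in Python. It is not R and not rApache’s configuration or API; the route, handler name and URL are all hypothetical, and the real thing runs inside Apache rather than in a plain function call.

```python
from urllib.parse import parse_qs, urlsplit

def summary_handler(params):
    """Hypothetical handler: reads repeated 'x' query values, returns their mean."""
    values = [float(v) for v in params.get("x", [])]
    if not values:
        return "no data"
    return "mean=%.2f" % (sum(values) / len(values))

# URL path -> handler function, playing the role of the server-side mapping.
ROUTES = {"/stats/summary": summary_handler}

def dispatch(url):
    """Look up the handler for a URL and pass it the parsed query variables."""
    parts = urlsplit(url)
    handler = ROUTES.get(parts.path)
    if handler is None:
        return 404, "not found"
    return 200, handler(parse_qs(parts.query))

status, body = dispatch("http://example.org/stats/summary?x=1&x=2&x=6")
```

The client only needs to be able to form that URL; it never sends code to the server, which is the loose coupling the quoted passage describes.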

Existing Demos of Architecture based on R Apache-

3. http://data.vanderbilt.edu/rapache/bbplot For baseball results – a demo of a query based web dashboard system- very good BI feel.

What’s coming next in R Apache?

You can download version 1.1.10 of rApache now. There are only two significant changes and you don’t have to edit your apache config or change any code (just recompile rApache and reinstall):

1) Error reporting should be more informative, both when you accidentally introduce errors in the Apache config and when your code introduces warnings and errors from web requests.

I’ve struggled with this one for awhile, not really knowing what strategy would be best. Basically, rApache hooks into the R I/O layer at such a low level that it’s hard to capture all warnings and errors as they occur and introduce them to the user in a sane manner. In prior releases, when ROutputErrors was in effect (either the apache directive or the R function) one would typically see a bunch of grey boxes with a red outline with a title of RApache Warning/Error!!!. Unfortunately those grey boxes could contain empty lines, one line of error, or a few that relate to the lines in previously displayed boxes. Really a big uninformative mess.

The new approach is to print just one warning box with the title “Oops!!! <b>rApache</b> has something to tell you. View source and read the HTML comments at the end.” and then, as the title implies, you can read the HTML comment located at the end of the file… after the closing html. That way, you’re actually reading how R would present the warnings and errors to you as if you executed the code at the R command prompt. And if you don’t use ROutputErrors, the warning/error messages are printed in the Apache log file, just as they were before, but nicer 😉

2) Code dispatching has changed so please let me know if I’ve introduced any strange behavior.

This was necessary to enhance error reporting. Prior to this release, rApache would use R’s C API exclusively to build up the call to your code that is then passed to R’s evaluation engine. The advantage to this approach is that it’s much more efficient as there is no parsing involved, however all information about parse errors, files which produced errors, etc. was lost. The new approach uses R’s built-in parse function to build up the call and then passes it off to R. A slight overhead, but it should be negligible. So, if you feel that this approach is too slow OR I’ve introduced bugs or strange behavior, please let me know.
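The trade-off described here (call the code object directly and lose source information, or parse source text first and keep it) has a loose analogue in Python. The sketch below is only that analogy, not rApache’s C code or R’s parse function; the helper names and the file name are made up.

```python
def call_directly(func, *args):
    """Invoke an already-built function object: no parsing, no source info."""
    return func(*args)

def call_via_parse(source, filename, env):
    """Parse source text first, so syntax errors carry a file name and line."""
    code = compile(source, filename, "eval")  # raises SyntaxError on bad source
    return eval(code, env)

def add(a, b):
    return a + b

direct_result = call_directly(add, 1, 2)
parsed_result = call_via_parse("add(1, 2)", "handler.R", {"add": add})

# A broken call: the parser reports where the problem is.
try:
    call_via_parse("add(1, ", "handler.R", {"add": add})
    error_location = None
except SyntaxError as err:
    error_location = (err.filename, err.lineno)
```

The parsing step costs a little time on every request, but the error now names the file and line that caused it, which is the gain the release note is after.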

FUTURE PLANS

I’m gaining more experience building Debian/Ubuntu packages each day, so hopefully by some time in 2011 you can rely on binary releases for these distributions and not install rApache from source! Fingers crossed!

Development on the rApache 1.1 branch will be winding down (save bug fix releases) as I transition to the 1.2 branch. This will involve taking out a small chunk of code that defines the rApache development environment (all the CGI variables and the functions such as setHeader, setCookie, etc) and placing it in its own R package… unnamed as of yet. This is to facilitate my development of the ralite R package, a small single user cross-platform web server.

The goal for ralite is to speed up development of R web applications and take out a bit of friction in the development process by not having to run the full rApache server. Plus it would allow users to develop in the rApache environment while on Windows and later deploy on more capable server environments. The secondary goal for ralite is its use in other web server environments (nginx and IIS come to mind) as a persistent per-client process.

And finally, wiki.rapache.net will be the new www.rapache.net once I translate the manual over… any day now.

From –http://biostat.mc.vanderbilt.edu/wiki/Main/JeffreyHorner

Not convinced? Try the demos above.