From http://www.meetup.com/R-Users/calendar/14405407/

The September meeting is at the Oracle campus. (This is next door to the Oracle towers, so there is plenty of free parking.) The featured talk is from Alex Guazzelli (Vice President – Analytics, Zementis Inc.) who will talk about “Predictive analytics with R, PMML and ADAPA”.

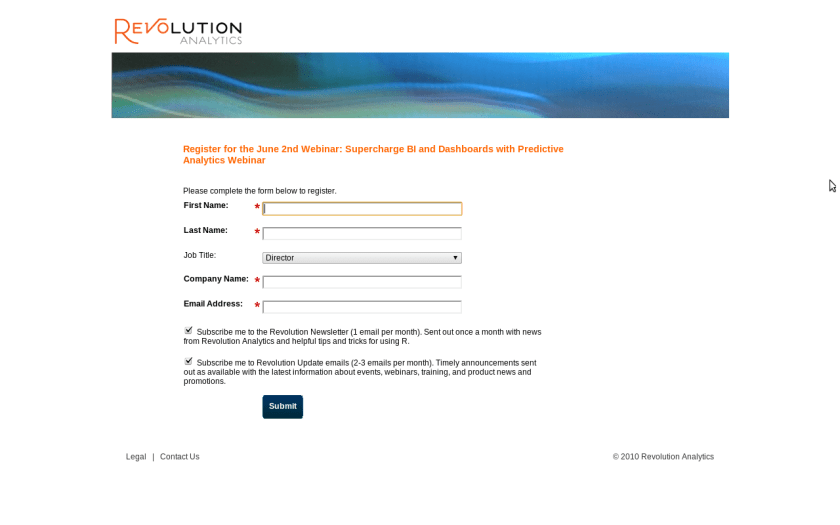

Agenda:

* 6:15 – 7:00 Networking and Pizza (with thanks to Revolution Analytics)

* 7:00 – 8:00 Talk: Predictive analytics with R, PMML and ADAPA

* 8:00 – 8:30 General discussion

Talk overview:

The rule in the past was that whenever a model was built in a particular development environment, it remained in that environment forever, unless it was manually recoded to work somewhere else. This rule has been shattered with the advent of PMML (Predictive Model Markup Language). By providing a uniform, XML-based standard to represent predictive models, PMML allows predictive solutions to be exchanged between different applications and vendors.

Once exported as PMML files, models are readily available for deployment into an execution engine for scoring or classification. ADAPA is one example of such an engine. It takes in models expressed in PMML and exposes them as web services. Models can be executed either remotely through web service calls or via a web console. Users can also use an Excel add-in to score data from inside Excel using models built in R.
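To make the idea of "scoring from a PMML file" concrete, here is a minimal sketch in Python of what any PMML consumer has to do: read the model definition from the XML and apply it to an input record. The PMML fragment is hand-written for illustration (the field names `income`, `age`, `risk` and all coefficients are made up), the `score` helper is hypothetical, and it handles only a plain linear `RegressionTable` with numeric predictors. It is not how ADAPA is implemented.

```python
import xml.etree.ElementTree as ET

# Hand-written toy PMML document (illustrative values, not a real exported model).
PMML_DOC = """
<PMML version="4.1" xmlns="http://www.dmg.org/PMML-4_1">
  <Header description="Toy linear regression"/>
  <DataDictionary numberOfFields="3">
    <DataField name="income" optype="continuous" dataType="double"/>
    <DataField name="age" optype="continuous" dataType="double"/>
    <DataField name="risk" optype="continuous" dataType="double"/>
  </DataDictionary>
  <RegressionModel functionName="regression">
    <MiningSchema>
      <MiningField name="income"/>
      <MiningField name="age"/>
      <MiningField name="risk" usageType="predicted"/>
    </MiningSchema>
    <RegressionTable intercept="0.5">
      <NumericPredictor name="income" coefficient="-0.25"/>
      <NumericPredictor name="age" coefficient="2.0"/>
    </RegressionTable>
  </RegressionModel>
</PMML>
"""

# Namespace used by the PMML 4.1 schema.
NS = {"pmml": "http://www.dmg.org/PMML-4_1"}


def score(pmml_text, record):
    """Score one record against the first RegressionTable in the document.

    Simplification: every NumericPredictor is treated as a first-order term
    (the optional exponent attribute is ignored).
    """
    root = ET.fromstring(pmml_text.strip())
    table = root.find("pmml:RegressionModel/pmml:RegressionTable", NS)
    total = float(table.get("intercept"))
    for predictor in table.findall("pmml:NumericPredictor", NS):
        name = predictor.get("name")
        total += float(predictor.get("coefficient")) * record[name]
    return total


if __name__ == "__main__":
    # 0.5 + (-0.25 * 4.0) + (2.0 * 1.5) = 2.5
    print(score(PMML_DOC, {"income": 4.0, "age": 1.5}))
```

Because the model lives entirely in the XML, the scoring side needs no knowledge of the tool that produced it, which is the portability the talk overview describes.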

R models have been exported to PMML and uploaded to ADAPA for many different purposes. Clients have used the flexibility of R for development and the PMML standard combined with ADAPA for deployment in use cases ranging from financial applications (e.g., risk, compliance, fraud) to energy applications for the smart grid. The ability to easily move solutions developed in R into the operational IT production environment helps overcome the traditional limitations of R, e.g., performance in high-volume or real-time transactional systems and memory constraints associated with large data sets.

Speaker Bio:

Dr. Alex Guazzelli co-authored the first book on PMML, the Predictive Model Markup Language, which is the de facto standard used to represent predictive models. The book, entitled PMML in Action: Unleashing the Power of Open Standards for Data Mining and Predictive Analytics, is available on Amazon.com. As the Vice President of Analytics at Zementis, Inc., Dr. Guazzelli is responsible for developing core technology and analytical solutions under ADAPA, a PMML-based predictive decisioning platform that combines predictive analytics and business rules. ADAPA is the first system of its kind to be offered as a service on the cloud.

Prior to joining Zementis, Dr. Guazzelli was involved in both building and deploying predictive solutions for large financial and telecommunication institutions around the globe. In academia, Dr. Guazzelli worked with data mining, neural networks, expert systems and brain theory. His work in brain theory and computational neuroscience has appeared in many peer-reviewed publications. At Zementis, Dr. Guazzelli and his team have been involved in a myriad of modeling projects for the financial, health-care, gaming, chemical, and manufacturing industries.

Dr. Guazzelli holds a Ph.D. in Computer Science from the University of Southern California and an M.S. and a B.S. in Computer Science from the Federal University of Rio Grande do Sul, Brazil.