Jim Goodnight, grand old man and Godfather of the Cosa Nostra of the BI/database analytics software industry, recently commented on open source in BI. (By the way, R is generally classed as business analytics and NOT business intelligence software, so these remarks apply more to Pentaho and Jaspersoft.)

Asked whether open source BI and data integration software from the likes of Jaspersoft, Pentaho and Talend is a growing threat, [Goodnight] said: “We haven’t noticed that a lot. Most of our companies need industrial strength software that has been tested, put through every possible scenario or failure to make sure everything works correctly.”

The quotes from Jim Goodnight are courtesy of Jason’s story here:

http://www.cbronline.com/news/sas-ceo-says-cep-open-source-and-cloud-bi-have-limited-appeal

And the Pentaho follow-up reaction is here:

http://bi.cbronline.com/news/pentaho-fires-back-across-sas-bows-over-limited-open-source-appeal

While you can rage and screech, here is the reality in terms of market share.

From Merv Adrian’s excellent article on market shares in BI:

The first, labeled BI Platforms, is drawn from Gartner Market Share Analysis: Business Intelligence, Analytics and Performance Management Software, Worldwide, 2009, published May 2010, and Gartner Dataquest Market Share: Business Intelligence, Analytics and Performance Management Software, Worldwide, 2009.

So what is the performance of Talend, Pentaho and Jaspersoft?

From http://www.dbms2.com/category/products-and-vendors/talend/

It seems that Talend’s revenue was somewhat shy of $10 million in 2008.

And Talend itself says:

http://www.talend.com/press/Talend-Announces-Record-2009-and-Continues-Growth-in-the-New-Year.php

Additional 2009 highlights include:

- Achieved record revenue, more than doubling from 2008. The fourth quarter of 2009 was Talend’s tenth consecutive quarter of growth.

- Grew customer base by 140% to over 1,000 customers, up from 420 at the end of 2008. Of these new customers, over 50% are Fortune 1000 companies.

- Total downloads reached seven million, with over 300,000 users of the open source products.

- Talend doubled its staff, increasing to 200 global employees. Continuing this trend, Talend has already hired 15 people in 2010 to support its rapid growth.
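
As a quick sanity check on the press-release figures above, the quoted 140% growth from 420 customers can be reproduced with simple arithmetic. This is only an illustrative sketch; the helper name `apply_growth` is hypothetical and does not come from Talend or from the sources quoted here.

```python
def apply_growth(base: int, pct_increase: int) -> float:
    """Return the value of `base` after growing by `pct_increase` percent."""
    # Multiply before dividing so the intermediate values stay exact integers.
    return base * (100 + pct_increase) / 100


customers_end_2008 = 420   # "up from 420 at the end of 2008"
growth_pct = 140           # "Grew customer base by 140%"

customers_end_2009 = apply_growth(customers_end_2008, growth_pct)
print(f"Implied customers at end of 2009: {customers_end_2009:.0f}")
```

The result, 1,008, is consistent with the "over 1,000 customers" claim, so at least the customer numbers hang together.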

Now for the Jaspersoft numbers:

http://www.dbms2.com/2008/09/14/jaspersoft-numbers/

Highlights include:

- Revenue run rate in the double-digit millions.

- 40% sequential growth most recent quarter. (I didn’t ask whether there was any reason to suspect seasonality.)

- 130% annual revenue growth run rate.

- “Not quite” profitable.

- Several hundred commercial subscribers, at an average of $25K annually per, including >100 in Europe.

- 9,000 paying customers of some kind.

- 100,000+ total deployments, “very conservatively,” counting OEMs as one deployment each and not double-counting for OEMs’ customers. (Nick said Business Objects quotes 45,000 deployments by the same standards.)

- 70% of revenue from the mid-market, defined as $100 million – $1 billion revenue. 30% from bigger enterprises. (Hmm. That begs a couple of questions, such as where OEM revenue comes in, and whether <$100 million enterprises were truly a negligible part of revenue.)
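
To get a feel for what "several hundred commercial subscribers, at an average of $25K annually" could mean in revenue terms, here is a rough back-of-the-envelope sketch. The subscriber counts (300, 500, 900) are my own assumptions about what "several hundred" might cover, not figures from the source, and the calculation covers subscription revenue only.

```python
AVG_SUBSCRIPTION_USD = 25_000  # "an average of $25K annually per" subscriber


def subscription_revenue(subscribers: int, avg_usd: int = AVG_SUBSCRIPTION_USD) -> int:
    """Annual subscription revenue for a given number of subscribers."""
    return subscribers * avg_usd


# ASSUMPTION: "several hundred" is read as somewhere between 300 and 900.
for subscribers in (300, 500, 900):
    revenue = subscription_revenue(subscribers)
    print(f"{subscribers} subscribers -> ${revenue:,} per year")
```

Under these assumptions, subscriptions alone would come to somewhere between $7.5 million and $22.5 million a year, which is at least in the same neighborhood as a run rate "in the double-digit millions".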

And for the Pentaho numbers:

http://www.dbms2.com/2009/01/27/introduction-to-pentaho/

and http://www.monash.com/uploads/Pentaho-January-2009.pdf

suggest they are far, far away from the top 5-6 vendors in BI.

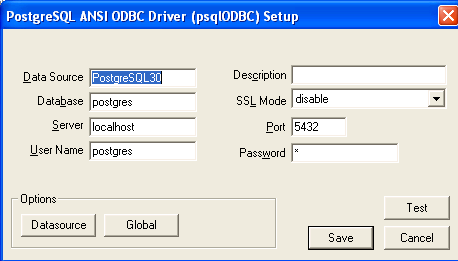

And a special mention for PostgreSQL, which is a non-profit project but is seriously denting Oracle/MySQL:

http://www.postgresql.org/about/

| Limit | Value |
|---|---|
| Maximum Database Size | Unlimited |
| Maximum Table Size | 32 TB |
| Maximum Row Size | 1.6 TB |
| Maximum Field Size | 1 GB |
| Maximum Rows per Table | Unlimited |
| Maximum Columns per Table | 250 – 1600 depending on column types |
| Maximum Indexes per Table | Unlimited |

The leading vendor is EnterpriseDB, which again is both an IBM partner and IBM-funded.

http://www.sramanamitra.com/2009/05/18/enterprise-db/

and

http://www.enterprisedb.com/company/news_events/press_releases/2010_21.do

suggest it is still in early stages.

————————————————————–

So what do we conclude?

1) There is a complete lack of transparency in open source BI market shares as almost all these companies are privately held and do not disclose revenues.

2) What may look like a pure-play open source company may actually be funded by a big BI vendor: Revolution Analytics is funded by, among others, Intel and Microsoft, and EnterpriseDB has IBM as an investor. MySQL and Sun, of course, were bought by Oracle.

The degree of control that proprietary vendors have over open source vendors is still not disclosed, nor is whether they hold their stakes for strategic reasons or otherwise.

3) None of the open source vendors is even close to $1 billion in revenue.

Jim Goodnight is pointing out market reality when he says he has not seen much impact (in terms of market share). As for the rest of his remarks, well, he has a job to do as CEO, and that is to talk up his company and trash the competition, which he has been doing for three decades and is unlikely to change now unless there is a severe market share impact. You can hardly expect him to notice companies that are less than 5% of his size in revenue.
