A cool-sounding piece of software, yet again from the guys in California: this one lets you compress and decompress Big Data much, much faster.

http://news.ycombinator.com/item?id=2356735

and

https://code.google.com/p/snappy/

Snappy is a compression/decompression library. It does not aim for maximum compression, or compatibility with any other compression library; instead, it aims for very high speeds and reasonable compression. For instance, compared to the fastest mode of zlib, Snappy is an order of magnitude faster for most inputs, but the resulting compressed files are anywhere from 20% to 100% bigger. On a single core of a Core i7 processor in 64-bit mode, Snappy compresses at about 250 MB/sec or more and decompresses at about 500 MB/sec or more.

Snappy is widely used inside Google, in everything from BigTable and MapReduce to our internal RPC systems. (Snappy has previously been referred to as “Zippy” in some presentations and the like.)

For more information, please see the README. Benchmarks against a few other compression libraries (zlib, LZO, LZF, FastLZ, and QuickLZ) are included in the source code distribution.

Introduction
============

Snappy is a compression/decompression library. It does not aim for maximum compression, or compatibility with any other compression library; instead, it aims for very high speeds and reasonable compression. For instance, compared to the fastest mode of zlib, Snappy is an order of magnitude faster for most inputs, but the resulting compressed files are anywhere from 20% to 100% bigger. (For more information, see “Performance”, below.)

Snappy has the following properties:

* Fast: Compression speeds at 250 MB/sec and beyond, with no assembler code. See “Performance” below.
* Stable: Over the last few years, Snappy has compressed and decompressed petabytes of data in Google’s production environment. The Snappy bitstream format is stable and will not change between versions.
* Robust: The Snappy decompressor is designed not to crash in the face of corrupted or malicious input.
* Free and open source software: Snappy is licensed under the Apache license, version 2.0. For more information, see the included COPYING file.

Snappy has previously been called “Zippy” in some Google presentations and the like.

Performance
===========

Snappy is intended to be fast. On a single core of a Core i7 processor in 64-bit mode, it compresses at about 250 MB/sec or more and decompresses at about 500 MB/sec or more. (These numbers are for the slowest inputs in our benchmark suite; others are much faster.) In our tests, Snappy usually is faster than algorithms in the same class (e.g. LZO, LZF, FastLZ, QuickLZ, etc.) while achieving comparable compression ratios.

Typical compression ratios (based on the benchmark suite) are about 1.5-1.7x for plain text, about 2-4x for HTML, and of course 1.0x for JPEGs, PNGs and other already-compressed data. Similar numbers for zlib in its fastest mode are 2.6-2.8x, 3-7x and 1.0x, respectively. More sophisticated algorithms are capable of achieving yet higher compression rates, although usually at the expense of speed. Of course, compression ratio will vary significantly with the input.
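To connect these ratios with the "20% to 100% bigger" figure from the introduction, the arithmetic can be sketched as below. The helper names `RelativeOutputSize` and `PrintPlainTextComparison` are hypothetical and not part of Snappy. The ratios plugged in are the README's plain-text figures. Pairing the ends of the two ranges with each other is an assumption, since the README does not say which inputs produced which ratio.

```cpp
#include <cassert>
#include <cstdio>

// Hypothetical helper (not part of Snappy): how many times larger codec A's
// output is than codec B's for the same input.
// A compression ratio r means compressed_size = original_size / r, so
//   size_a / size_b = (original / ratio_a) / (original / ratio_b)
//                   = ratio_b / ratio_a.
double RelativeOutputSize(double ratio_a, double ratio_b) {
  assert(ratio_a > 0.0 && ratio_b > 0.0);
  return ratio_b / ratio_a;
}

// Prints how much bigger Snappy's output would be than zlib's on plain text,
// using the README's ranges: Snappy 1.5-1.7x, zlib (fastest mode) 2.6-2.8x.
void PrintPlainTextComparison() {
  // Assumption: the closest pairing is Snappy's best ratio vs zlib's worst,
  // and the widest pairing is Snappy's worst ratio vs zlib's best.
  const double closest = RelativeOutputSize(1.7, 2.6);
  const double widest = RelativeOutputSize(1.5, 2.8);
  std::printf("Snappy output is about %.0f%% to %.0f%% bigger than zlib's\n",
              (closest - 1.0) * 100.0, (widest - 1.0) * 100.0);
}
```

Under those assumptions the plain-text case works out to roughly 53% to 87% bigger, which sits inside the quoted 20% to 100% range.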

Although Snappy should be fairly portable, it is primarily optimized for 64-bit x86-compatible processors, and may run slower in other environments. In particular:

- Snappy uses 64-bit operations in several places to process more data at once than would otherwise be possible.
- Snappy assumes unaligned 32- and 64-bit loads and stores are cheap. On some platforms, these must be emulated with single-byte loads and stores, which is much slower.
- Snappy assumes little-endian throughout, and needs to byte-swap data in several places if running on a big-endian platform.
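The last two points can be illustrated with a minimal sketch of portable load helpers. These functions are hypothetical and written only for illustration; they are not Snappy's actual internals. The first does a load from an arbitrary address via `std::memcpy`, which compilers typically turn into a single instruction on x86-64. The second shows the byte-by-byte fallback that also gives a little-endian result on a big-endian host.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Hypothetical helper: reads 4 bytes from any address, aligned or not, in the
// host's native byte order. memcpy avoids the undefined behavior of
// dereferencing a misaligned uint32_t pointer.
inline std::uint32_t UnalignedLoad32(const void* p) {
  assert(p != nullptr);
  std::uint32_t value;
  std::memcpy(&value, p, sizeof(value));
  return value;
}

// Hypothetical helper: interprets 4 bytes as a little-endian integer on any
// host by assembling the value from single-byte loads. This is the slow path
// the README refers to: correct everywhere, but more work per load.
inline std::uint32_t LoadLittleEndian32(const void* p) {
  assert(p != nullptr);
  const unsigned char* b = static_cast<const unsigned char*>(p);
  return static_cast<std::uint32_t>(b[0]) |
         (static_cast<std::uint32_t>(b[1]) << 8) |
         (static_cast<std::uint32_t>(b[2]) << 16) |
         (static_cast<std::uint32_t>(b[3]) << 24);
}
```

On a little-endian machine both helpers return the same value for the same bytes; on a big-endian machine only `LoadLittleEndian32` yields the little-endian interpretation.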

Experience has shown that even heavily tuned code can be improved. Performance optimizations, whether for 64-bit x86 or other platforms, are of course most welcome; see “Contact”, below.

Usage
=====

Note that Snappy, both the implementation and the interface, is written in C++.

To use Snappy from your own program, include the file “snappy.h” from your calling file, and link against the compiled library.

There are many ways to call Snappy, but the simplest possible is

    snappy::Compress(input.data(), input.size(), &output);

and similarly

    snappy::Uncompress(input.data(), input.size(), &output);

where “input” and “output” are both instances of std::string.


2)

2)

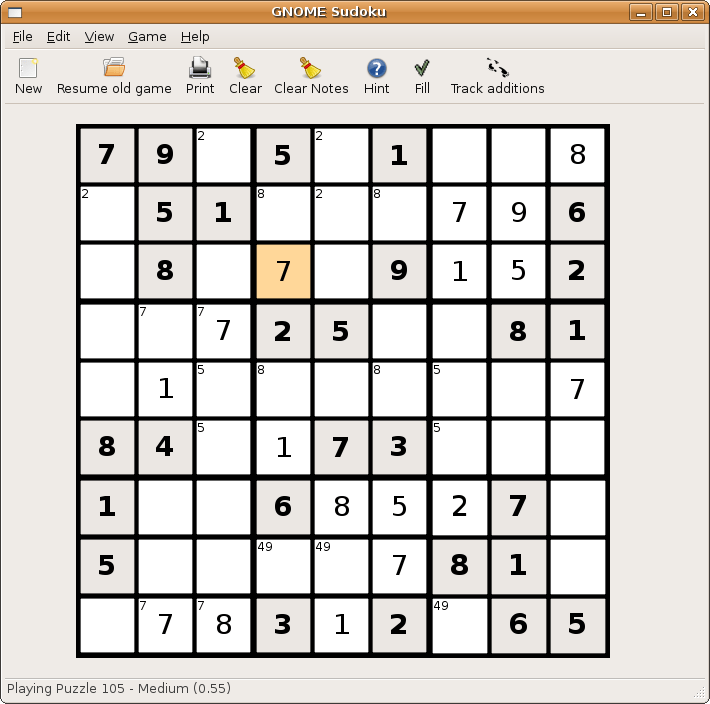

5) Pacman/Njam- Clone of the original classic game. Downloadable from

5) Pacman/Njam- Clone of the original classic game. Downloadable from  6)

6)

8) Card Games- KPatience has almost 14 card games including solitaire, and free cell.

8) Card Games- KPatience has almost 14 card games including solitaire, and free cell.  9) Sauerbraten -First person shooter with good network play, edit maps capabilities. You can read more here-

9) Sauerbraten -First person shooter with good network play, edit maps capabilities. You can read more here-  10) Tetris-KBlocks Tetris is the classic game. If you like classic slow games- Tetris is the best. and I like the toughest Tetris game -Bastet

10) Tetris-KBlocks Tetris is the classic game. If you like classic slow games- Tetris is the best. and I like the toughest Tetris game -Bastet  Even an xkcd toon for it

Even an xkcd toon for it