Here are some cool games I like to play on Ubuntu 10.10. I think they run on most other versions of Linux as well.

1) OpenArena - First-person shooter. This is like Quake III Arena: very nice graphics, and good for a couple of hours of play while taking a break. It is available at http://openarena.ws/smfnews.php. If you have a bunch of gaming friends, playing on a local network or over the internet is mind-blowing entertainment. And it's free!

2) Armagetron - This is based on the light cycle game from TRON. It is available at http://www.armagetronad.net/ or you can use the Synaptic package manager, which works for all the games mentioned here.

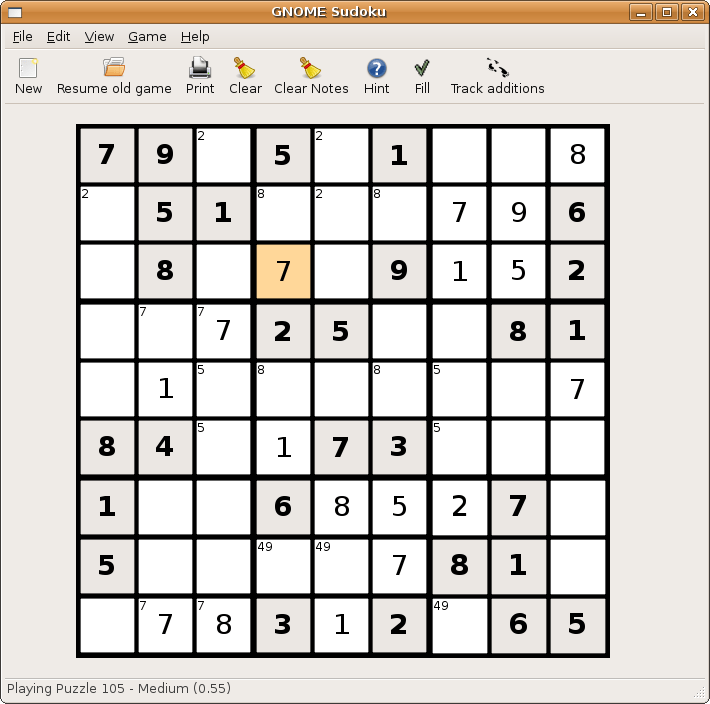

3) Sudoku

If violence or cars are not your thing and you like puzzles like Sudoku, just install the Sudoku application from http://gnome-sudoku.sourceforge.net/. It is also recommended for people of all ages, as it has multiple difficulty levels.

4) Pinball

If you ever liked pinball, play the open source version, available for download at http://pinball.sourceforge.net/. Alternatively, you can go to Ubuntu Software Centre > Games > Arcade > Emilia Pinball. You can also build your own pinball table if you like the game well enough.

5) Pacman/Njam - A clone of the original classic game. Downloadable from http://www.linuxcompatible.org/news/story/pacman_for_linux.html

6) Gweled - This is a free clone of Bejeweled. It now has a new website at http://gweled.org/ and can also be downloaded from http://linux.softpedia.com/progDownload/Gweled-Download-3449.html

Gweled is a GNOME version of a popular PalmOS/Windows/Java game called "Bejeweled" or "Diamond Mine". The aim of the game is to align 3 or more gems, either vertically or horizontally, by swapping adjacent gems. The game ends when there are no possible moves left. Here are some key features of Gweled:

- exact same gameplay as the commercial versions

- original SVG graphics

7) Hearts - For this classic card game, you can use Ubuntu Software Centre to install the package or go to http://linuxappfinder.com/package/gnome-hearts

8) Card Games - KPatience has about 14 card games, including Solitaire and FreeCell.

9) Sauerbraten - First-person shooter with good network play and map-editing capabilities. You can read more at http://sauerbraten.org/

10) Tetris/KBlocks - Tetris is the classic game. If you like classic slow games, Tetris is the best, and the toughest Tetris game I like is Bastet: http://fph.altervista.org/prog/bastet.html

There is even an xkcd cartoon about it.
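If you would rather use the terminal than Synaptic or the Software Centre, here is a rough sketch of how the whole list could be installed in one go. The package names are my assumptions based on the Ubuntu/Debian archives (they are not taken from the sites linked above) and may differ on your release, so the script only prints the command for you to review.

```shell
#!/bin/sh
# Package names are assumptions taken from the Ubuntu/Debian archives;
# check each one with "apt-cache search <name>" before installing.
GAMES="openarena armagetronad gnome-sudoku pinball njam gweled gnome-hearts kpat sauerbraten kblocks bastet"

# Build the install command and print it for review instead of running it.
INSTALL_CMD="sudo apt-get install $GAMES"
echo "$INSTALL_CMD"
```

Copy the printed line into a terminal to run it, and drop any name that apt-get reports as missing.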

That's all for the holiday season, folks. This top 10 list is based on almost three decades of gaming experience, but beauty is in the eye of the beholder, so happy gaming for free.

