Clients, friends and industry colleagues often ask me about the suitability or unsuitability of particular software for their analytical needs. My answer is mostly:

It depends on:

1) The cost of a Type 1 error in the purchase decision versus the cost of a Type 2 error. (Forgive me if I mix up Type 1 and Type 2 errors; I do have some weird childhood learning disabilities which crop up now and then.)

Here I define a Type 1 error as paying more for software when equivalent functionality was available at a lower price, or buying components you do not need, like SPSS Trends (when only SPSS Base is required) or SAS/ETS (when SAS/STAT alone would do).

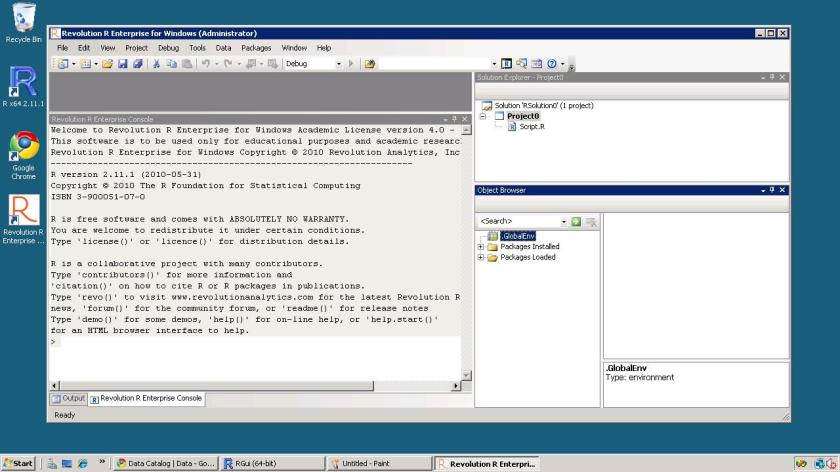

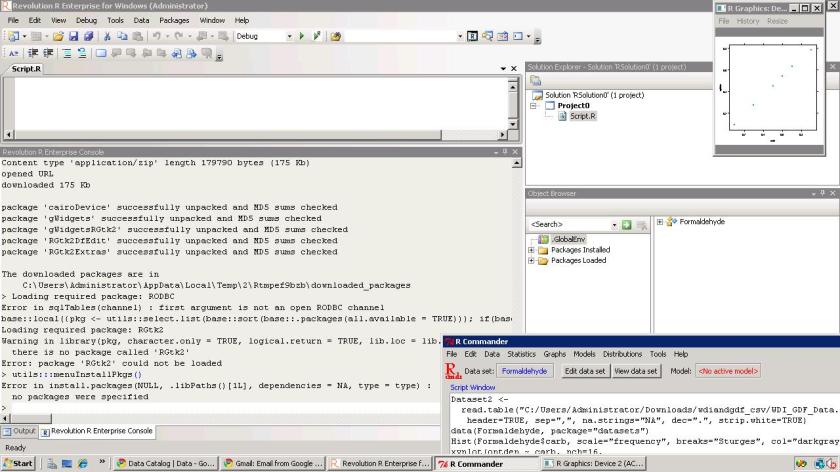

The first kind is of course due to the presence of free tools like R, with GUIs such as R Commander and Deducer (Rattle does have a $500 commercial version).

The emergence of software vendors like WPS (for SAS language aficionados), which offer functionality similar to Base SAS, along with the increasing convergence of business analytics (read: predictive analytics) and business intelligence (read: reporting), has led to a kind of brand clutter in which every product promises to do everything, at very different prices, though each has specific strengths and weaknesses. To add to this, there are comparatively fewer independent business analytics analysts than, say, independent business intelligence analysts.

2) The Type 2 error: in this case, the cost is the opportunity cost of delayed projects and business models, or of lower accuracy, all consequences of buying lower-priced software with less functionality than you required.

To compound the magnitude of a Type 2 error, you are probably in some kind of vendor lock-in, your software budget is exhausted because you bought too much or inappropriate software and hardware, and you could still do with some added help in business analytics. The fear of making a business-critical error is a substantial reason why open source software has to work harder to prove itself competent: writing great software is not enough; we need great marketing to sell it and great customer support to sustain it.
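To make the trade-off between the two errors concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the function names, the option labels, and all the prices and probabilities are made up, and the model is deliberately crude (price paid plus the probability-weighted opportunity cost of a shortfall). It is meant as a way of thinking about the two errors, not as a pricing tool.

```python
def overpayment(price_paid: float, cheapest_adequate_price: float) -> float:
    """Type 1 cost: money spent above the cheapest option that would have sufficed."""
    return max(0.0, price_paid - cheapest_adequate_price)


def expected_total_cost(price: float, p_shortfall: float, shortfall_cost: float) -> float:
    """Price paid plus the expected Type 2 cost.

    p_shortfall    -- assumed probability the tool turns out to lack needed functionality
    shortfall_cost -- assumed opportunity cost (delays, lower accuracy) if it does
    """
    return price + p_shortfall * shortfall_cost


def best_option(options: dict) -> str:
    """Return the label of the option with the lowest expected total cost."""
    return min(options, key=lambda name: expected_total_cost(*options[name]))


# Hypothetical options: (price, p_shortfall, shortfall_cost). All numbers are invented.
options = {
    "premium bundle": (50_000.0, 0.05, 200_000.0),
    "low-cost tool": (5_000.0, 0.40, 200_000.0),
}

for name, params in options.items():
    print(name, expected_total_cost(*params))
print("lowest expected cost:", best_option(options))
```

With these invented numbers the expensive bundle comes out ahead, because the assumed cost of a shortfall is large. Shrink the shortfall cost or its probability and the answer flips, which is exactly why the answer is "it depends".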

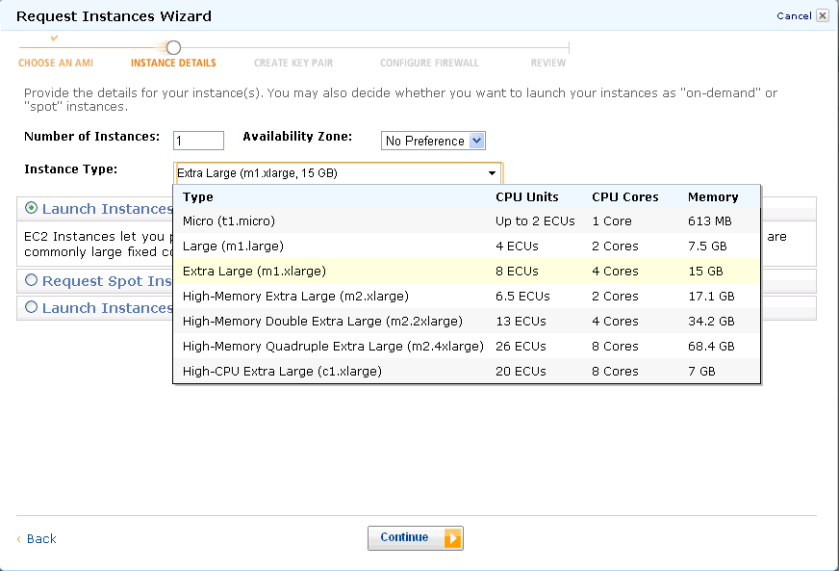

Since business decisions are made under constraints of time, information and money, I will try to create a software purchase matrix based on my knowledge of known software (and its unknown strengths and weaknesses), pricing (versus budgets), and ranges of data handling. I will essentially lay out an optimum approach based on known constraints, and add flexibility for unknown operational constraints.

I will restrict this matrix to analytics software, though you could certainly extend it to other classes of enterprise software, including big data databases, infrastructure and computing.
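As a rough idea of what such a matrix could look like in code, here is a small Python sketch. The `Candidate` fields, the `shortlist` function and the three placeholder tools ("Tool A", "Tool B", "Tool C") are all hypothetical; the costs and data ranges are invented and do not describe any real product. The sketch only filters on budget, data volume and required strengths, then sorts by cost.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Candidate:
    name: str              # placeholder label, not a real product
    annual_cost: float     # assumed total cost of ownership per year
    max_rows: int          # assumed largest dataset handled comfortably
    strengths: frozenset   # functionality the tool is assumed to be good at


def shortlist(candidates: Iterable[Candidate], budget: float,
              rows_needed: int, must_have: Iterable[str]) -> List[Candidate]:
    """Keep candidates that fit the budget, data size and required strengths,
    ordered from cheapest to most expensive."""
    required = set(must_have)
    fits = [
        c for c in candidates
        if c.annual_cost <= budget
        and c.max_rows >= rows_needed
        and required <= c.strengths
    ]
    return sorted(fits, key=lambda c: c.annual_cost)


# Invented rows of the matrix, for illustration only.
candidates = [
    Candidate("Tool A", 0.0, 500_000, frozenset({"regression", "clustering"})),
    Candidate("Tool B", 8_000.0, 5_000_000, frozenset({"regression", "time series"})),
    Candidate("Tool C", 40_000.0, 100_000_000,
              frozenset({"regression", "clustering", "time series"})),
]

for c in shortlist(candidates, budget=10_000.0, rows_needed=1_000_000,
                   must_have={"regression"}):
    print(c.name, c.annual_cost)
```

A hard filter like this is the simplest possible design; a fuller matrix would probably weight strengths and leave headroom for the unknown operational constraints rather than cutting candidates outright.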

Noted assumptions:

1) I am vendor neutral and do not suffer from subjective bias or affection for particular software (based on conferences, books, relationships, consulting, etc.).

2) All software has bugs, so all of it needs customer support.

3) All software has particular strengths and weaknesses in terms of functionality.

4) Cost includes total cost of ownership and the opportunity cost of the business-analytics-enabled decision.

5) All software marketing people will praise their own software, sometimes over-selling and mis-selling product bundles.

The software compared is SPSS, KXEN, R, SAS, WPS, Revolution R and SQL Server, along with the various flavors and sub-components within each. The optimized approach will also account for parallel programming, cloud computing, hardware costs, and dependent software costs.

To be continued.
