I sometimes get a chat message on Twitter/Facebook asking for help on some specific data issue. More often than not, it is something like: how do I get started in BI/BA/data stuff? So here is a list of certifications which I think are quite nice as starting points or even CV multipliers.


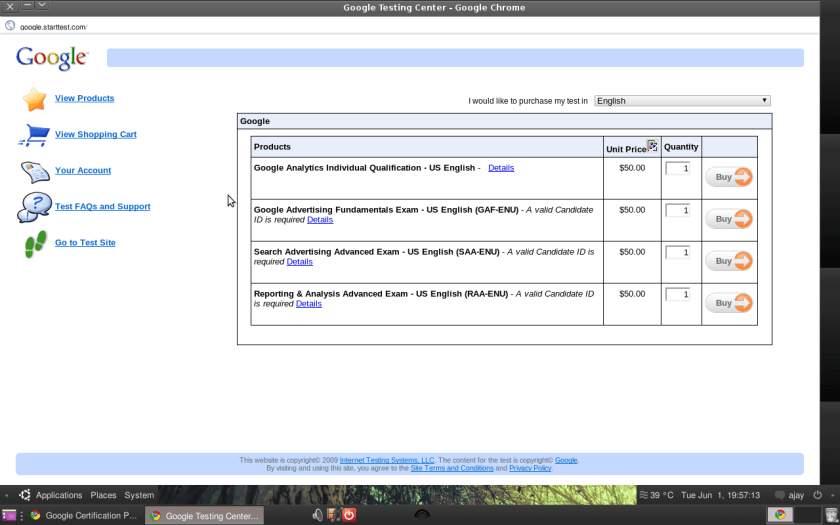

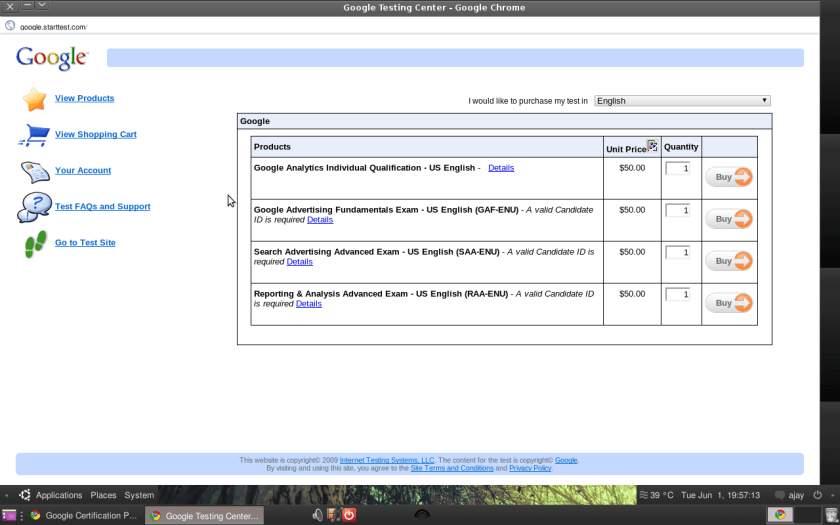

1) Google’s Certifications

http://www.google.com/intl/en/adwords/professionals/

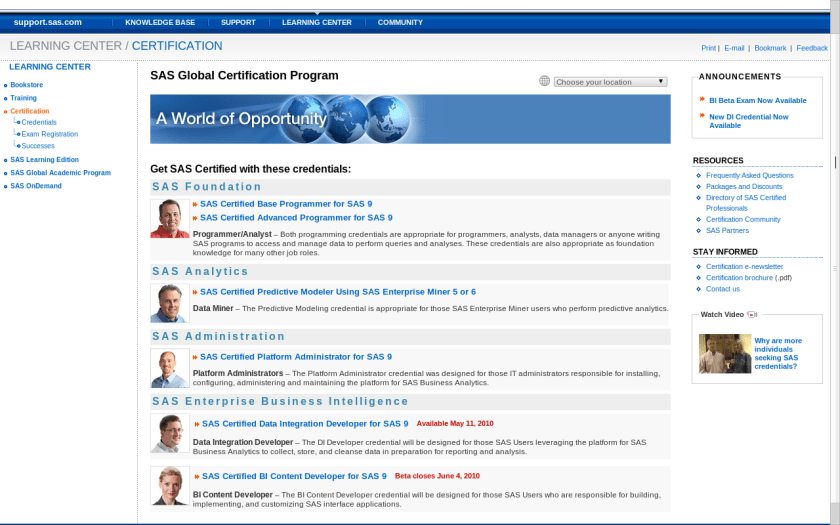

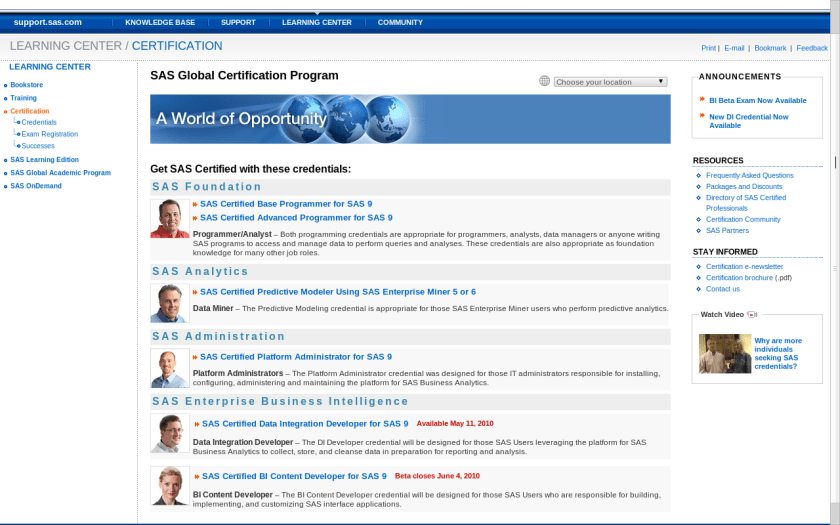

2) SAS Certifications

Quite well established and easily one of the best structured certification programs in the industry.

http://support.sas.com/certify/index.html

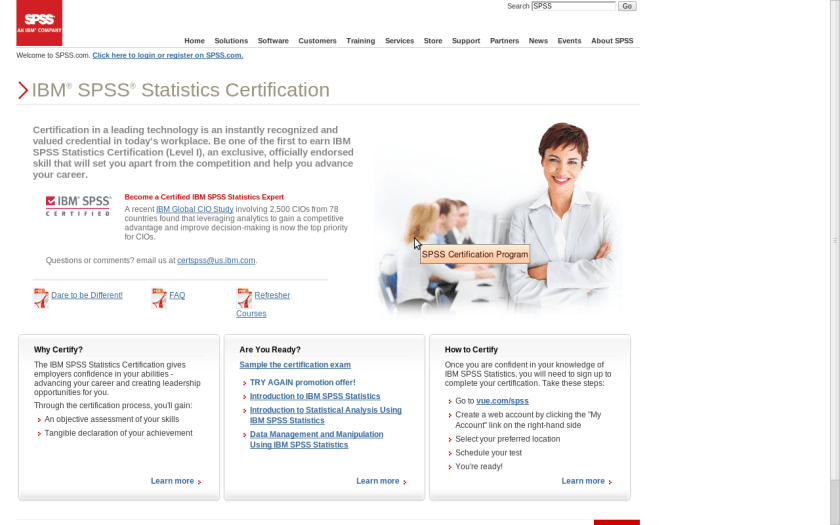

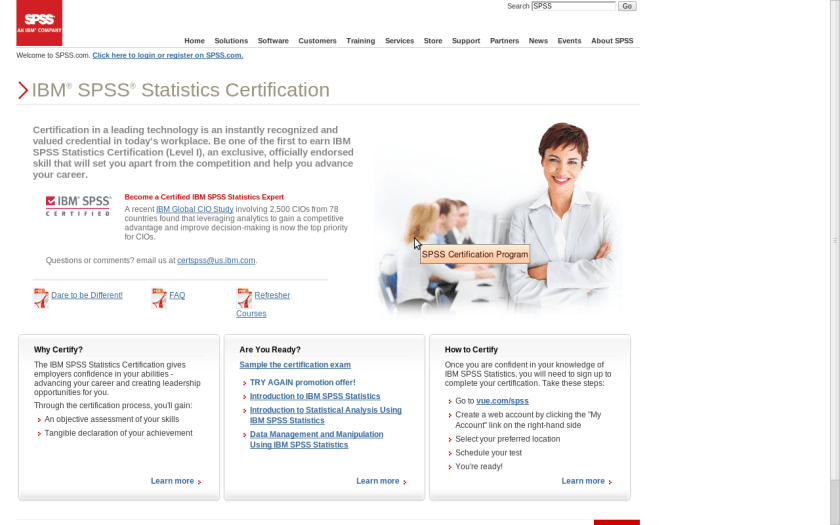

3) SPSS

The SPSS certification began last year, and it provides a valuable skill set for both your practice and your resume. It is also useful to have a second skill set in statistical software apart from SAS.

http://www.spss.com/certification/

At this point I would like you to pause and think about whether the above certifications are useful or cost-effective for you, as they are broadly general qualifications in statistical platforms and in applying them to web analytics (a key area for business analytics).

For more specialized certifications, here are some more:

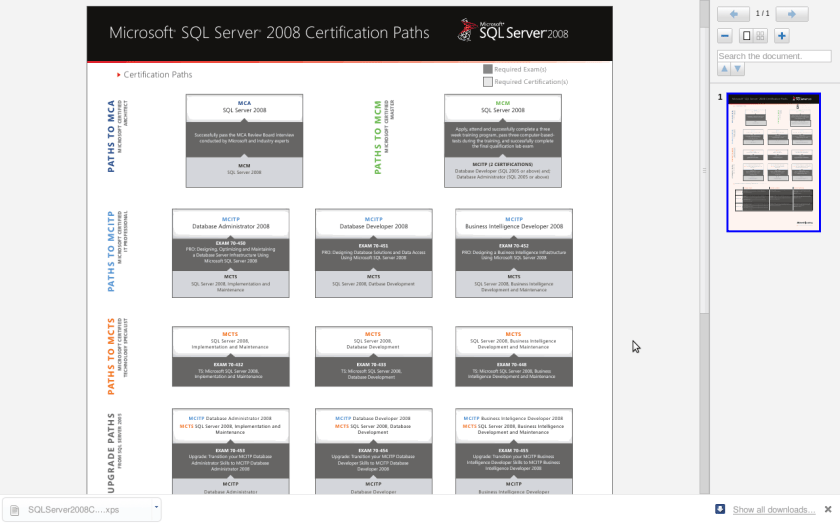

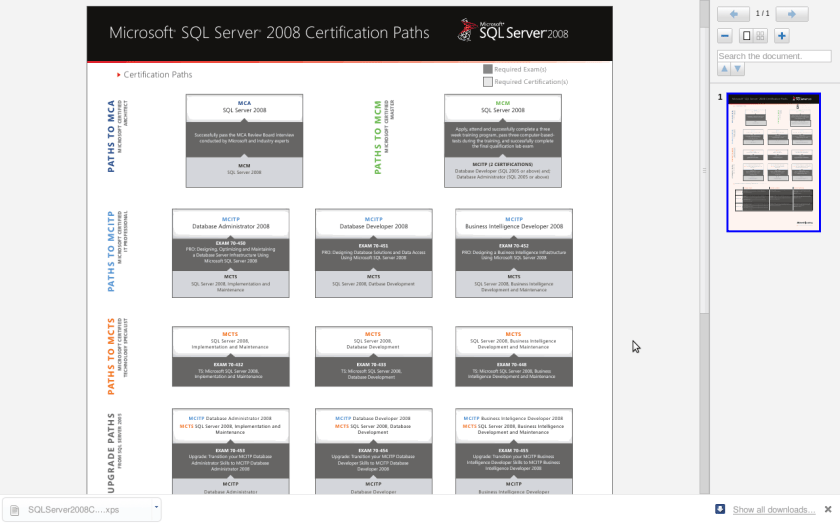

1) Microsoft SQL Server

http://www.microsoft.com/learning/en/us/certification/cert-sql-server.aspx

2) TDWI Certification

http://tdwi.org/pages/certification/index.aspx

3) IBM

Not sure how up to date these are, so caveat emptor!

http://www.redbooks.ibm.com/abstracts/sg245747.html

This one is for you if you are knowledgeable about IBM’s Business Intelligence solutions and the fundamental concepts of DB2 Universal Database, and you are capable of performing the intermediate and advanced skills required to design, develop, and support Business Intelligence applications.

Also IBM Cognos Certifications

http://www-01.ibm.com/software/data/education/cognos-cert.html

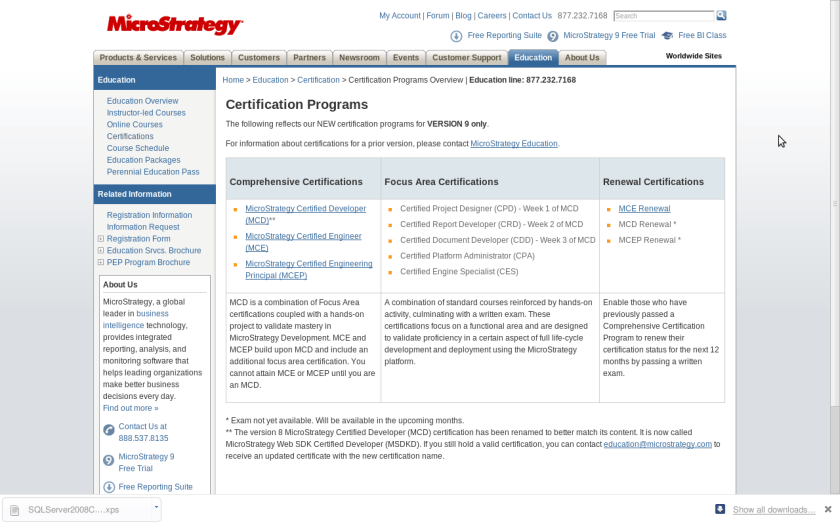

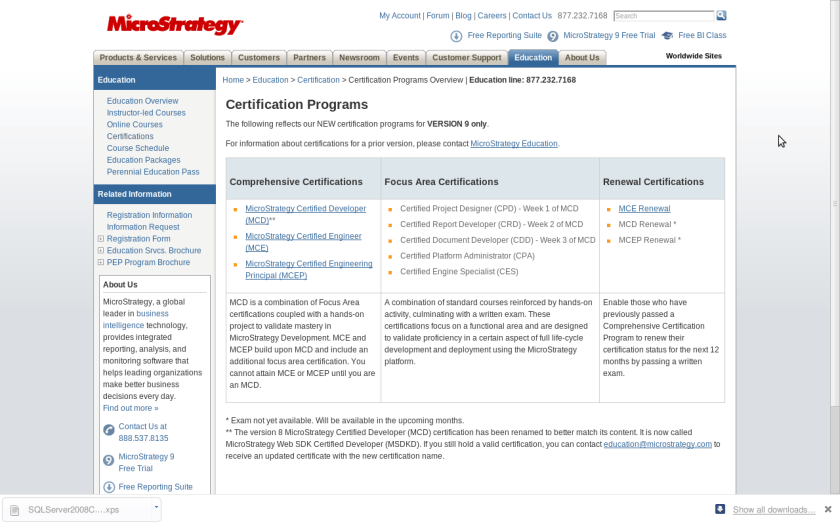

4) MicroStrategy

http://www.microstrategy.com/education/Certification/

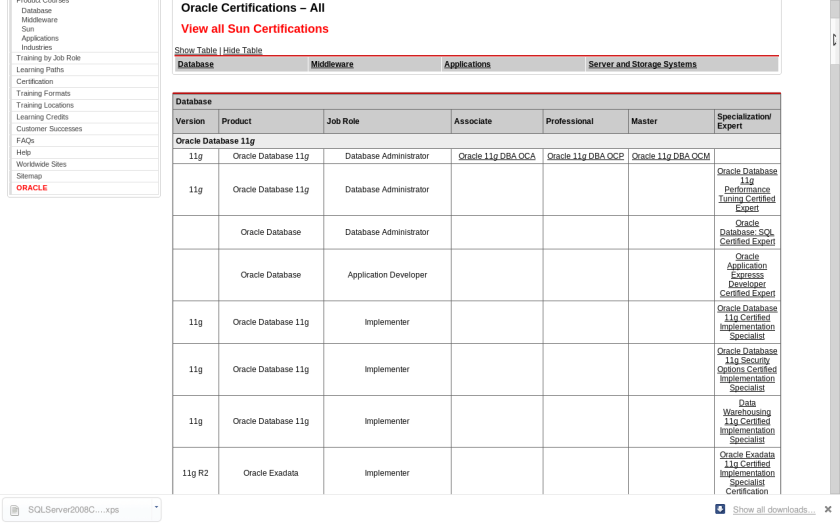

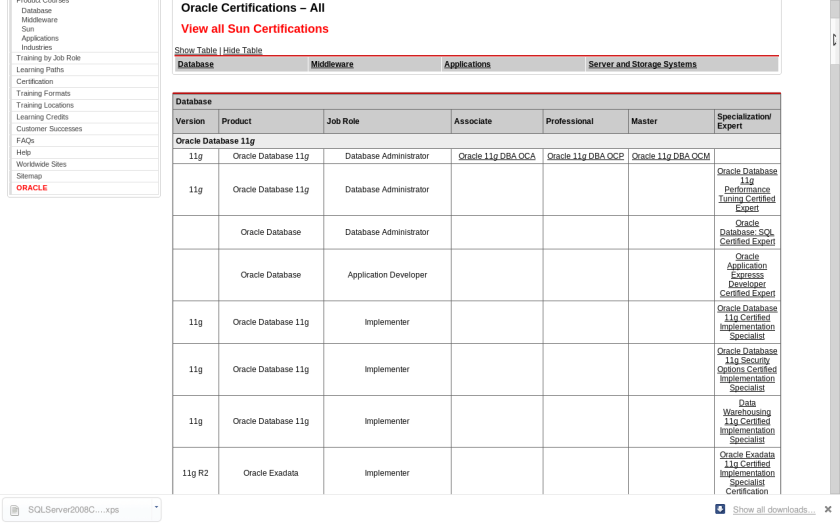

5) Oracle

This includes the all-new Sun certifications as well.

http://certification.oracle.com/

and http://blogs.oracle.com/certification/

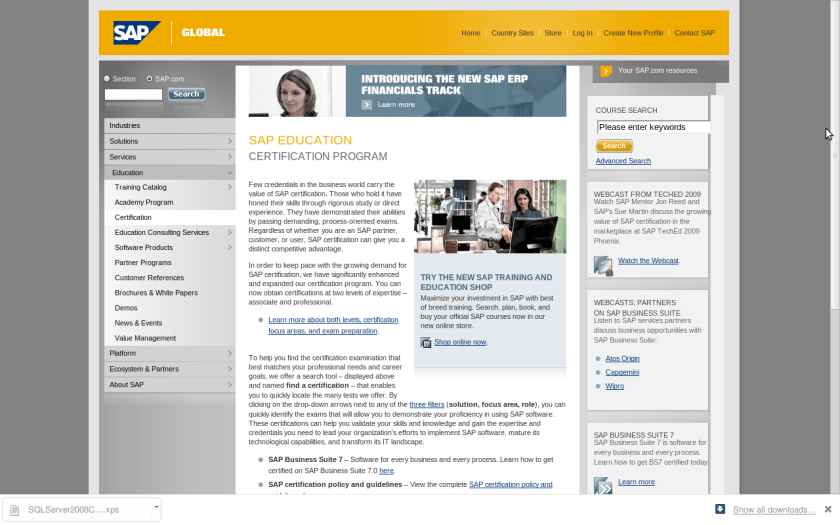

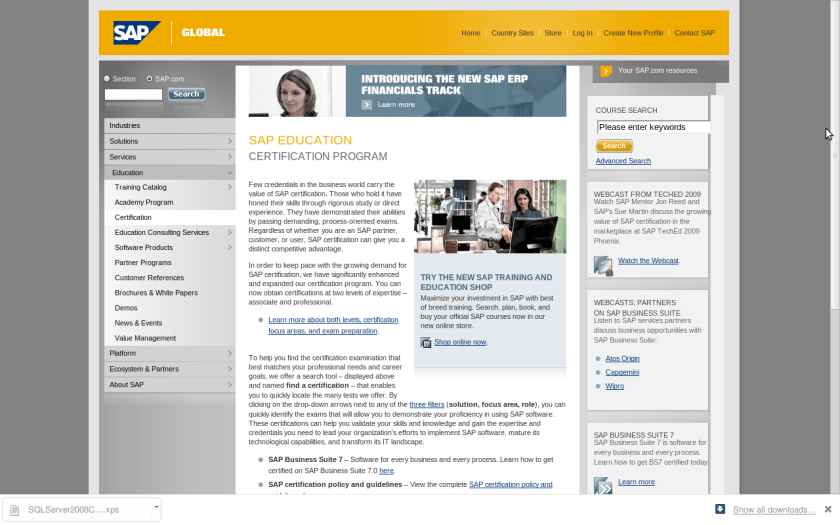

6) SAP Certifications

http://www.sap.com/services/education/certification/index.epx

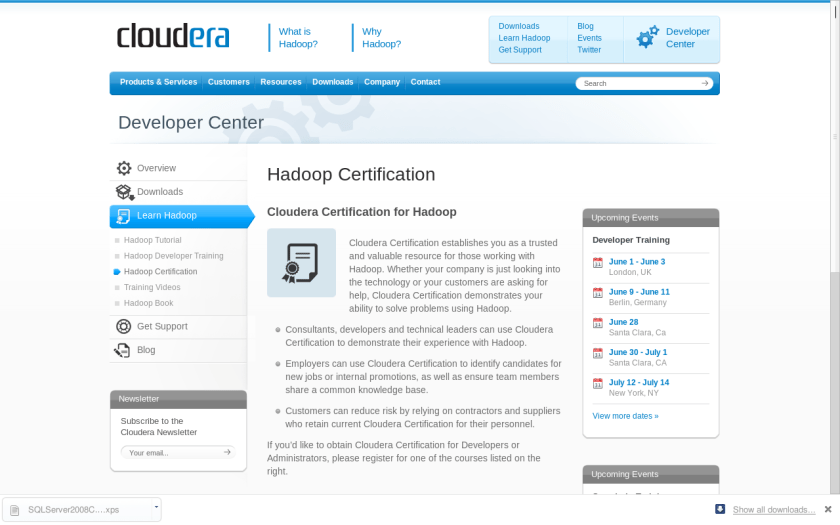

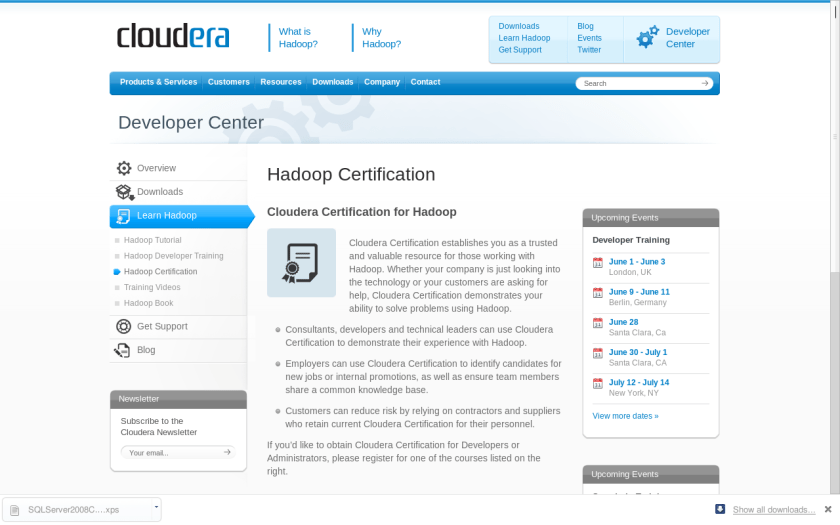

7) Cloudera’s Hadoop Certification

http://www.cloudera.com/developers/learn-hadoop/hadoop-certification/

These are some Business Intelligence and Business Analytics related certifications that I have assembled into a list. Many other programs were either too specific to software development or did not offer a certification for general usage (like many R trainings or company-tool-specific trainings). Please feel free to add any suggestions.
