This is a featured post by our sponsors

Using ggplot in Python #python #dataviz

Based on the open source project at http://ggplot.yhathq.com/ here is a small training PPT created by one of our wonderful summer interns, Sarah.

Hat Tip to http://www.amazon.com/Grammar-Graphics-Statistics-Computing/dp/0387245448

Leland Wilkinson, the inventor of the Grammar of Graphics, now works for Tableau Software.

Get your app on Droid

1 Download and Install IntelliJ IDEA

https://www.jetbrains.com/idea/help/basics-and-installation.html#d1847332e131

2 To choose which version of Java to use

$ sudo update-alternatives --config java

3 Download and Install Android Studio

http://developer.android.com/sdk/installing/index.html?pkg=studio

4 Learn about basic app building from MIT App Inventor (it's a GUI, so relax)

http://ai2.appinventor.mit.edu/

5 Give up building it yourself and post a request for a developer for your Android app at http://www.appfutura.com/

Sources-

http://askubuntu.com/questions/64329/how-to-replace-openjdk-6-with-openjdk-7

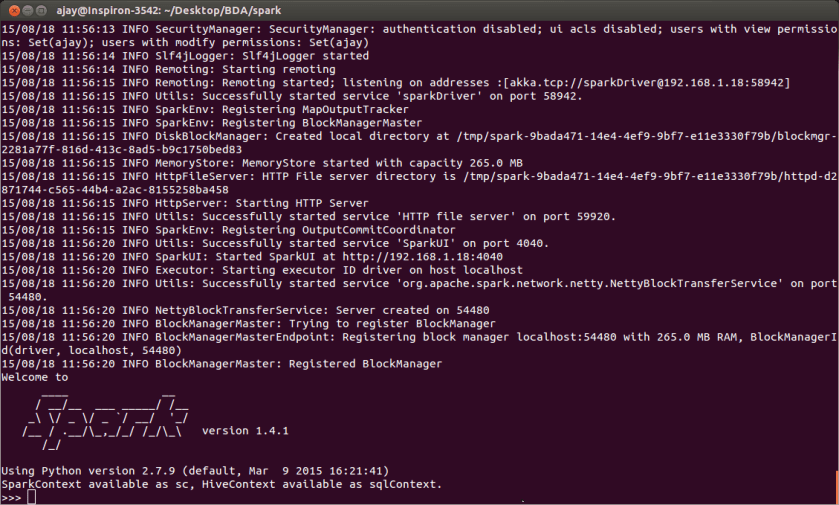

Installing and Using Spark easily with Python or R on Ubuntu #python #rstats

- Download Spark from https://spark.apache.org/downloads.html (say, to /home/ajay/Desktop/BDA)

- Change to that directory from the terminal

cd /home/ajay/Desktop/BDA

- Unzip the file

tar -xvf spark-1.4.1-bin-hadoop2.6.tgz

- Change to the directory created (say you unzipped the Spark file above and renamed it spark)

ajay@Inspiron-3542:~/Desktop/BDA$ cd spark

- Run the command ./bin/pyspark

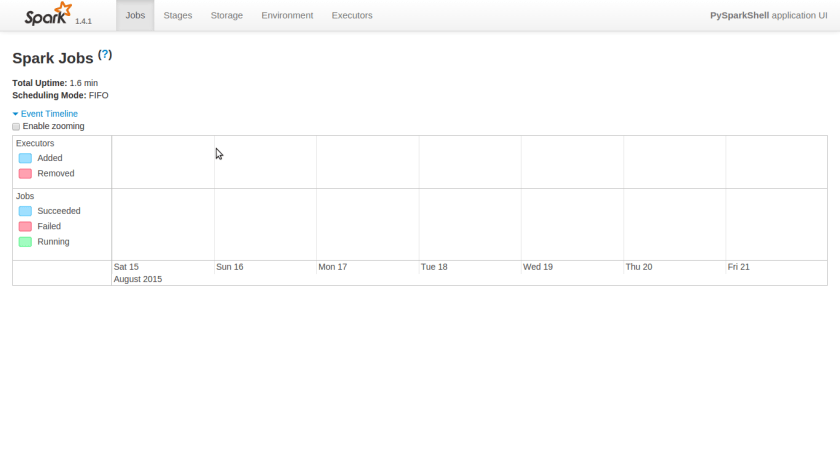

- To look at local jobs see http://192.168.1.18:4040/jobs/ (or whichever address your terminal prints after you run the command in the previous step)

To do this with R, just use ./bin/sparkR
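The unpack-and-rename steps above can also be scripted. Here is a minimal sketch using only Python's standard library. The archive name and folder come from the steps above; the helper name `unpack_spark` and the target folder name `spark` are illustrative choices, not part of Spark.

```python
import tarfile
from pathlib import Path


def unpack_spark(archive, dest_dir, new_name="spark"):
    """Extract a Spark .tgz into dest_dir and rename the top-level folder.

    Hypothetical helper: mirrors `tar -xvf` followed by a manual rename.
    Only use it on archives you trust (e.g. downloaded from spark.apache.org).
    """
    archive = Path(archive)
    dest_dir = Path(dest_dir)
    with tarfile.open(archive) as tar:
        # The first path component of the first member is the top-level folder.
        top_level = tar.getnames()[0].split("/")[0]
        tar.extractall(dest_dir)
    target = dest_dir / new_name
    (dest_dir / top_level).rename(target)
    return target


if __name__ == "__main__":
    home = unpack_spark(
        "/home/ajay/Desktop/BDA/spark-1.4.1-bin-hadoop2.6.tgz",
        "/home/ajay/Desktop/BDA",
    )
    print("Now run:", home / "bin" / "pyspark")
```

The rename is optional; it only saves typing the versioned folder name each time.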

Sources- http://stackoverflow.com/questions/30483409/installing-apache-spark-on-ubuntu-14-04

Traps to avoid if you are a grey hat hacker

- Bait and Switch – used to plant embedded malware or logging systems. https://en.wikipedia.org/wiki/Bait-and-switch describes it as the action (generally illegal) of advertising goods which are an apparent bargain, with the intention of substituting inferior or more expensive goods. It can be avoided by periodically changing your hardware and software, relying on open source and the open market, and of course by avoiding things that are too good to be true.

- The Honey Trap – https://en.wikipedia.org/wiki/Honey_trapping a stratagem in which an attractive person entices another person into revealing information or doing something unwise. This one got Julian Assange.

- The Honey Pot – https://en.wikipedia.org/wiki/Honeypot_(computing) A honeypot is a trap set to detect, deflect, or, in some manner, counteract attempts at unauthorized use of information systems. Generally, a honeypot consists of a computer, data, or a network site that appears to be part of a network, but is actually isolated and monitored, and which seems to contain information or a resource of value to attackers. This is similar to the police baiting a criminal and then conducting undercover surveillance. This one got Sabu.

- The Tax Trap – This one got Al Capone. Since there is no evidence of your cyber activities, they put you in tax court based on the mismatch between your income and expenditure. It can be avoided by creating appropriate legal mechanisms, including corporations.

- The Informer Trap – God can protect you against your enemies but not your friends. This can be avoided by separating the personal, private, and professional sides of your activities into different compartments, hardware, and virtual machines, including your own personality and brain. Reveal your true identity to boast and you will end up a Reservoir Dog.

Is R going to be better than Python for Big Data Analytics and Data Science? #rstats #python

My last article seems to have touched a nerve or two, judging by the 2000 views I got in a single day on a Sunday (which was also India’s national Independence Day and V-J Day). Here I am simply reproducing the unedited and very interesting comments I got, along with an interesting R package.

On Google Plus, there is a vibrant community for R and Statistics. Yes, Google Plus still exists 😉 The following excellent comment makes you think.

This is pretty much a ho-hum topic with me. I don’t find this article very convincing. If you like Python, fine! Use Python. The problem I have with Python is that it is an interpreted language. Anything written in pure Python is going to take a long time to run on a big data set. Sure, there are Python packages for data analysis that run quickly, but you either have to depend on what someone else provides or develop your own package in compiled code.

I’ve found most software apps written specifically for “big data” to be very limited: a lot of them begin and end at N/N (pretty old hat now and inferior to a number of other methods for many analyses). If you can’t look under the hood and see what goes on in an analysis package, well, then good luck to you if you use it, but don’t expect me to.

So far I’ve found that R works well for the large data sets I work with. (I’ll leave aside the issue of graphics for now; I have yet to see anything else that can hold a candle to R in that regard.) If the base packages that come with R can’t do a particular task I’ll first search among the over 5,000 packages currently available on CRAN. If that doesn’t work I’ll send a request to the R help list server. If that doesn’t work I’ll write my own routine in C or C# (I prefer the latter). BTW, if you are in the data analysis game you need to know enough to be able to do your own numerical analysis programming, say at the level of Numerical Recipes. Otherwise you are going to be overly dependent on someone else to provide software for you.

I’m not writing this to persuade anyone to pick one over the other. It’s just that there are a lot of possible choices out there — it’s not just R vs Python. And I’m just tired of these endless debates that go nowhere. As we say in the software engineering world: don’t try to convince the other person that your text editor/IDE/programming language is better than theirs.

Download Spark and build it as follows:

cd <spark root>

build/mvn -Pyarn -Phadoop-2.4 -Dhadoop.version=2.4.0 -DskipTests -Phive -Phive-thriftserver clean package

Then start the Thrift service.

sbin/start-thriftserver.sh

install.packages(c("RJDBC", "dplyr", "DBI", "devtools"))

devtools::install_github("hadley/purrr")

Indirectly RJDBC needs rJava. Make sure that you have rJava working with:

library(rJava)

.jinit()

install.packages("devtools")

library(devtools)

install_url("https://github.com/RevolutionAnalytics/dplyr-spark/releases/download/0.2.2/dplyr.spark_0.2.2.tar.gz")

library(dplyr)

library(dplyr.spark)

spark.src = src_SparkSQL("localhost", "10000")
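If the connection call fails, a first thing to rule out is that the Thrift server is not listening yet. Here is a small sketch in Python (standard library only) that checks whether a TCP port accepts connections. The helper name `port_is_open` is made up for illustration; the host and port are the ones used in the call above.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to (host, port) succeeds."""
    try:
        with socket.create_connection((host, int(port)), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Host and port as used in the src_SparkSQL call above.
    print("Thrift server reachable:", port_is_open("localhost", "10000"))
```

This only confirms that something is listening on the port, not that it is a working Spark SQL endpoint.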

Is Python going to be better than R for Big Data Analytics and Data Science? #rstats #python

Until now, the R ecosystem of package developers has mostly shrugged off the Big Data question. In a fascinating insight, Hadley Wickham said this in a recent interview; shockingly, it mimics the FUD that you-know-who has been accused of (source: https://peadarcoyle.wordpress.com/2015/08/02/interview-with-a-data-scientist-hadley-wickham/).

I think there are two particularly important transition points:

* From in-memory to disk. If your data fits in memory, it’s small data. And these days you can get 1 TB of ram, so even small data is big!

* From one computer to many computers.

R is a fantastic environment for the rapid exploration of in-memory data, but there’s no elegant way to scale it to much larger datasets. Hadoop works well when you have thousands of computers, but is incredibly slow on just one machine. Fortunately, I don’t think one system needs to solve all big data problems.

To me there are three main classes of problem:

1. Big data problems that are actually small data problems, once you have the right subset/sample/summary.

2. Big data problems that are actually lots and lots of small data problems

3. Finally, there are irretrievably big problems where you do need all the data, perhaps because you are fitting a complex model. An example of this type of problem is recommender systems.
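The first class above (big data that becomes small data once you have the right sample) can be illustrated with reservoir sampling, which draws a uniform random sample from a stream of unknown length in a single pass. This is a generic sketch, not something taken from the interview; the function name and parameters are illustrative.

```python
import random


def reservoir_sample(stream, k, seed=None):
    """Return k items chosen uniformly at random from an iterable (Algorithm R).

    Only k items are held in memory, so the stream can be larger than RAM.
    """
    rng = random.Random(seed)
    sample = []
    for i, item in enumerate(stream):
        if i < k:
            sample.append(item)
        else:
            # Item i replaces a slot with probability k / (i + 1).
            j = rng.randint(0, i)
            if j < k:
                sample[j] = item
    return sample


if __name__ == "__main__":
    rows = (n * n for n in range(1_000_000))  # stands in for a large file
    print(reservoir_sample(rows, k=5, seed=42))
```

Once the sample fits in memory, the usual in-memory tools in R or Python apply.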

Ajay- One of the reasons for the lack of development of R Big Data packages is that it takes money. The private sector in the R ecosystem is a duopoly: Revolution Analytics (acquired by Microsoft) and RStudio (created by Microsoft alum JJ Allaire). Since RStudio as a company actively tries NOT to step into areas Revolution Analytics works in, it has not ventured into Big Data, in my opinion for strategic reasons.

The Revolution Analytics project on RHadoop actually has just one consultant working on it (https://github.com/RevolutionAnalytics/RHadoop), and it has not been updated in six months.

We interviewed the creator of RHadoop here: https://decisionstats.com/2014/07/10/interview-antonio-piccolboni-big-data-analytics-rhadoop-rstats/

However, Python developers have been actively developing systems for Big Data. The Hadoop ecosystem and the Python ecosystem are much more FOSS friendly, even in enterprise solutions.

This is where Python is innovating over R in Big Data:

http://blaze.pydata.org/en/latest/

- Blaze: Translates NumPy/Pandas-like syntax to systems like databases.

Blaze presents a pleasant and familiar interface to us regardless of what computational solution or database we use. It mediates our interaction with files, data structures, and databases, optimizing and translating our query as appropriate to provide a smooth and interactive session.

- Odo: Migrates data between formats.

Odo moves data between formats (CSV, JSON, databases) and locations (local, remote, HDFS) efficiently and robustly with a dead-simple interface by leveraging a sophisticated and extensible network of conversions. http://odo.pydata.org/en/latest/perf.html

odo takes two arguments, a source and a target for a data transfer.

>>> from odo import odo

>>> odo(source, target)  # load source into target

- Dask.array: Multi-core / on-disk NumPy arrays

Dask.arrays provide blocked algorithms on top of NumPy to handle larger-than-memory arrays and to leverage multiple cores. They are a drop-in replacement for a commonly used subset of NumPy algorithms.

- DyND: In-memory dynamic arrays

DyND is a dynamic ND-array library like NumPy. It supports variable length strings, ragged arrays, and GPUs. It is a standalone C++ codebase with Python bindings. Generally it is more extensible than NumPy but also less mature. https://github.com/libdynd/libdynd

The core DyND developer team consists of Mark Wiebe and Irwin Zaid. Much of the funding that made this project possible came through Continuum Analytics and DARPA-BAA-12-38, part of XDATA.

LibDyND, a component of the Blaze project, is a C++ library for dynamic, multidimensional arrays. It is inspired by NumPy, the Python array programming library at the core of the scientific Python stack, but tries to address a number of obstacles encountered by some of its users. Examples of this are support for variable-sized string and ragged array types. The library is in a preview development state, and can be thought of as a sandbox where features are being tried and tweaked to gain experience with them.

C++ is a first-class target of the library; the intent is that all its features should be easily usable in the language. This has many benefits, such as that development within LibDyND using its own components is more natural than in a library designed primarily for embedding in another language.

This library is being actively developed together with its Python bindings.

http://dask.pydata.org/en/latest/

On a single machine dask increases the scale of comfortable data from fits-in-memory to fits-on-disk by intelligently streaming data from disk and by leveraging all the cores of a modern CPU.

Users interact with dask either by making graphs directly or through the dask collections which provide larger-than-memory counterparts to existing popular libraries:

- dask.array = numpy + threading

- dask.bag = map, filter, toolz + multiprocessing

- dask.dataframe = pandas + threading

Dask primarily targets parallel computations that run on a single machine. It integrates nicely with the existing PyData ecosystem and is trivial to set up and use:

conda install dask

or

pip install dask
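The "blocked algorithm" idea behind dask.array can be sketched without dask itself: process the data one chunk at a time and combine small per-chunk results. The following is a pure-Python illustration of that idea only, not dask's API; `chunked` and `blocked_mean` are hypothetical names.

```python
from itertools import islice


def chunked(iterable, size):
    """Yield successive lists of at most `size` items from an iterable."""
    it = iter(iterable)
    while True:
        block = list(islice(it, size))
        if not block:
            return
        yield block


def blocked_mean(values, block_size=100_000):
    """Mean of a (possibly huge) stream, holding one block in memory at a time."""
    total = 0.0
    count = 0
    for block in chunked(values, block_size):
        # Per-block partial results are tiny; only they are kept.
        total += sum(block)
        count += len(block)
    if count == 0:
        raise ValueError("mean of empty input")
    return total / count


if __name__ == "__main__":
    print(blocked_mean(range(1_000_000)))
```

Dask goes further than this sketch by building a task graph over the blocks and scheduling the per-block work across cores.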

https://github.com/cloudera/ibis

When open source fights, closed source wins. When the Jedi fight, the Sith Lords will win.

So will R people rise to the Big Data challenge, or will they bury their heads in the sand like an ostrich or a kiwi? Will Python people learn from R design philosophies and try to incorporate more of them without reinventing the wheel?

Converting code from one language to another automatically?

How I wish there was some kind of automated conversion tool that would convert a CRAN R package into a standard Python package which is pip-installable.

Machine learning for more machine learning anyone?

The lack of activity on rmr2 reflects the maturity of the package and a shift away from Hadoop MapReduce toward Spark. Please check the dplyr.spark package on GitHub. It’s the easiest way to run Spark bar none, including Python, in its author’s very biased opinion. Example: find the best and worst flight by arrival delay on each day:

group_by(flights, year, month, day) %>%

select(flight, arr_delay) %>%

filter(arr_delay == min(arr_delay) || arr_delay == max(arr_delay))

Runs on spark, scales to whatever your cluster can store. Please show me the equivalent in any other language, python included. I am waiting.
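For comparison, the same query logic (best and worst flight by arrival delay for each day) can be written in plain Python. This sketch runs in memory on a list of dicts rather than on Spark, so it illustrates the logic only, not the scalability; the sample rows are made up.

```python
from collections import defaultdict


def best_and_worst_by_day(flights):
    """For each (year, month, day), keep flights with min or max arr_delay."""
    by_day = defaultdict(list)
    for row in flights:
        by_day[(row["year"], row["month"], row["day"])].append(row)

    result = {}
    for day, rows in by_day.items():
        delays = [r["arr_delay"] for r in rows]
        lo, hi = min(delays), max(delays)
        result[day] = [
            (r["flight"], r["arr_delay"])
            for r in rows
            if r["arr_delay"] in (lo, hi)
        ]
    return result


# Made-up sample rows, for illustration only.
flights = [
    {"year": 2013, "month": 1, "day": 1, "flight": 1545, "arr_delay": 11},
    {"year": 2013, "month": 1, "day": 1, "flight": 1714, "arr_delay": 20},
    {"year": 2013, "month": 1, "day": 1, "flight": 1141, "arr_delay": -18},
    {"year": 2013, "month": 1, "day": 2, "flight": 725, "arr_delay": 33},
    {"year": 2013, "month": 1, "day": 2, "flight": 461, "arr_delay": -25},
]

if __name__ == "__main__":
    for day, picks in best_and_worst_by_day(flights).items():
        print(day, picks)
```

It is longer than the dplyr pipeline, which is a fair point in favor of the comment above.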