I came across this nice post from someone who is both knowledgeable and experienced in data. I totally agree that data visualization, user interfaces and unstructured data mining are the trends of the future.

What caught my attention were the words from http://www.thejuliagroup.com/blog/?p=433

However, for me personally and for most users, both individual and organizational, the much greater cost of software is the time it takes to install it, maintain it, learn it and document it. On that, R is an epic fail. It does NOT fit with the way the vast majority of people in the world use computers. The vast majority of people are NOT programmers. They are used to looking at things and clicking on things.

Let me analyze this scientifically and dispassionately.

R Documentation

I believe that the SAS Online Doc and the SPSS Documentation are both good examples of structured documentation. I do believe that, despite the many corporate R products floating around, R documentation is very extensive and perhaps too big to be put in one neat document. Something like "The Little R Book" or "R Online Doc" would really help.

Entering ? or ?? to search for documentation apparently seems too difficult and complex for corporate users. However, that the documentation for R is not really of enterprise software quality is a valid enough point.
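For readers who have not used them, here is a minimal sketch of what the ? and ?? lookups look like at the R console. The topics searched for (`lm`, "linear model", "mean") are just illustrative choices of mine, not anything from the post being discussed.

```r
# Open the help page for a function whose name you already know
?lm

# Search all installed documentation when you only know a keyword
??"linear model"

# Same search, written out as an ordinary function call
help.search("linear model")

# List objects on the search path whose names contain "mean"
matches <- apropos("mean")
print(head(matches))
```

The first form needs the exact function name; the second and third only need a phrase, which is usually what a new user has.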

Maintaining R

It takes a single line of code or even a single click to update and maintain R.
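As a rough sketch of that single line: the call below updates installed packages from CRAN. Note that it updates packages, not the R interpreter itself, and it assumes network access and write permission to the library folder. The mirror URL and the `interactive()` guard are my own choices for illustration.

```r
# See which packages (and versions) are currently installed; works offline
installed <- installed.packages()[, c("Package", "Version"), drop = FALSE]
print(head(installed))

# The one-liner: update every installed package without prompting.
# Guarded so it only runs in an interactive session with network access.
if (interactive()) {
  update.packages(ask = FALSE, repos = "https://cloud.r-project.org")
}
```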

Apparently the author of the aforementioned post thinks that existing corporate users are too STUPID OR LAZY to do this.

I like to think most corporate users of statistical software are actually way smarter (one hint: they earn money doing that stuff).

Installing R

Anyone who mentions installation costs as a reason for higher software costs and then mentions R is either biased against R or has not worked with R. Or both.

Learn R

I think no one can learn all R packages, just as you cannot learn all the modules of SAS (like ETS, Stat, etc.).

R does take more time to learn than Base SAS, and this is a valid enough point.

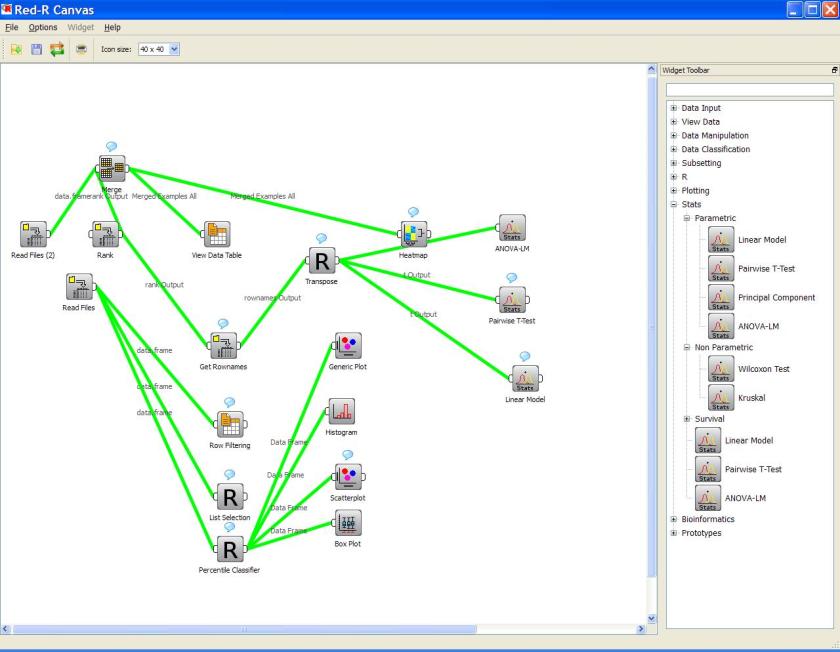

However, two R GUIs, Rattle and R Commander, can help cut down this learning time.

And increasingly R is taught in universities, which is where the battle for future developers and users of platforms like SAS, SPSS, Stata or R will ultimately be decided. While the short-term monetization of other software dazzles people, R has too many passionate developers and users to allow it to fail.

However,

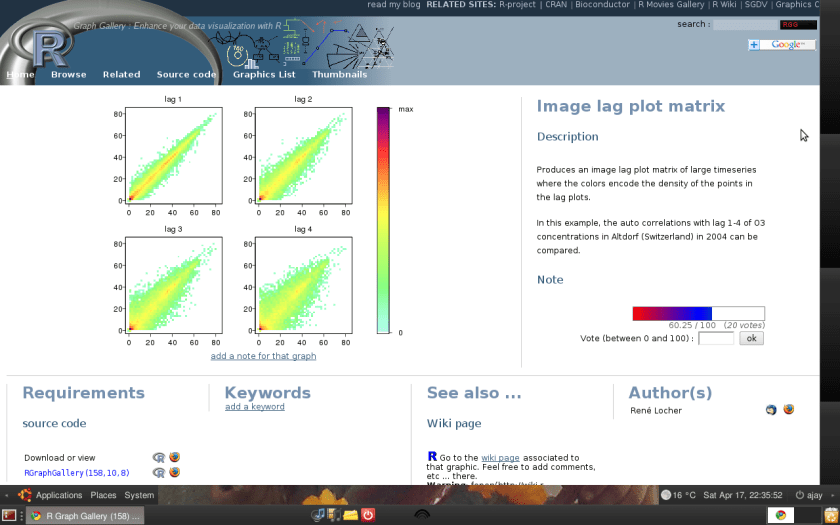

R is not perfect. It does need a better corporate version than is currently offered, especially for people who are simple users and not developers, and it could also do well to improve its marketability and visibility.

Regarding software costs, ironically it is easier to estimate how much SAS will cost you in terms of licenses and training time. A similar comparative document between R and SAS, covering license costs and estimated training costs, should settle this debate more rationally and more dispassionately than is currently the norm when comparing software.