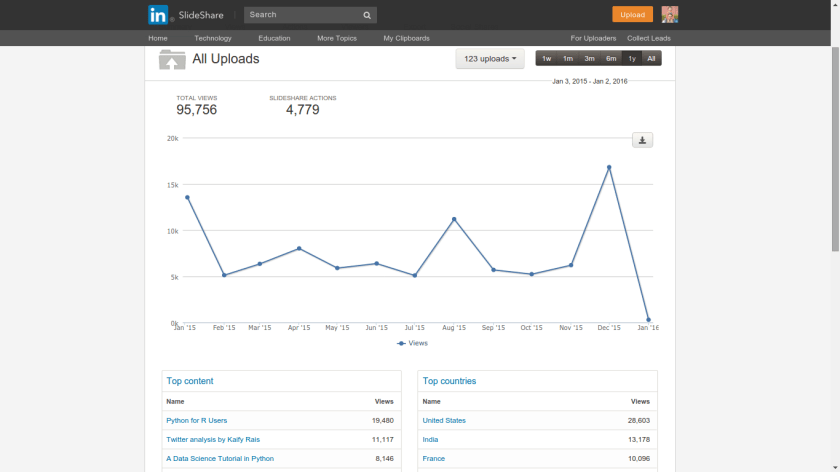

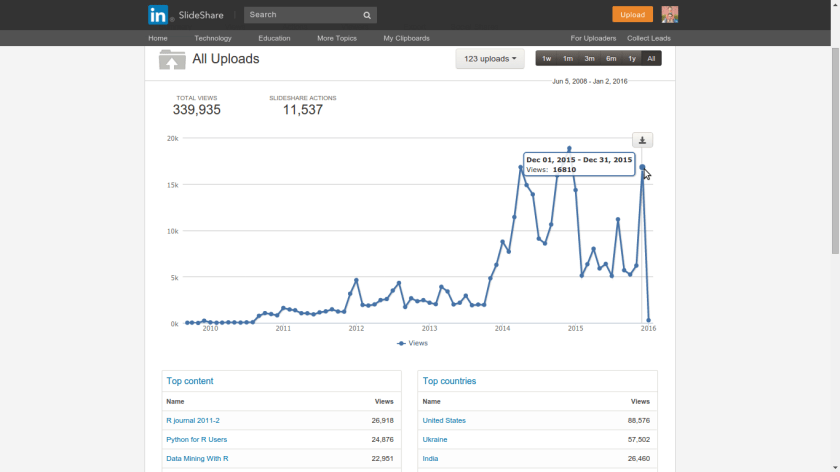

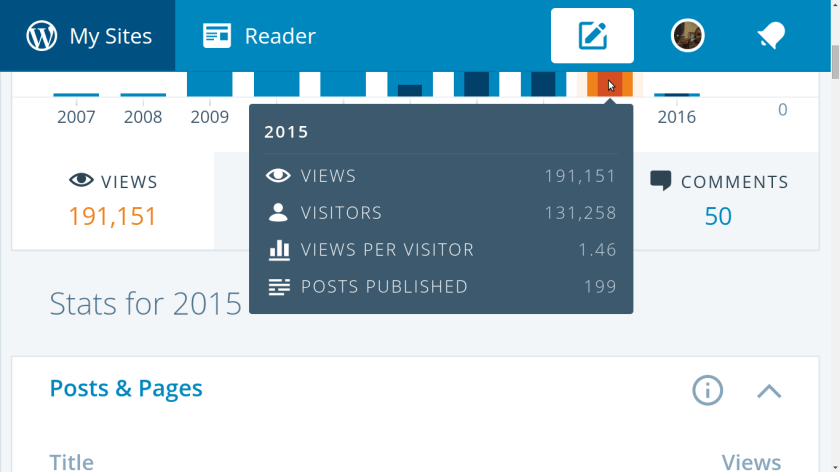

Does the NSA track Git requests? I mean, couldn't terrorists just talk to each other using visual cryptography of Arabic text hidden in Git repo requests?

Basically, that would increase the cost of decryption.

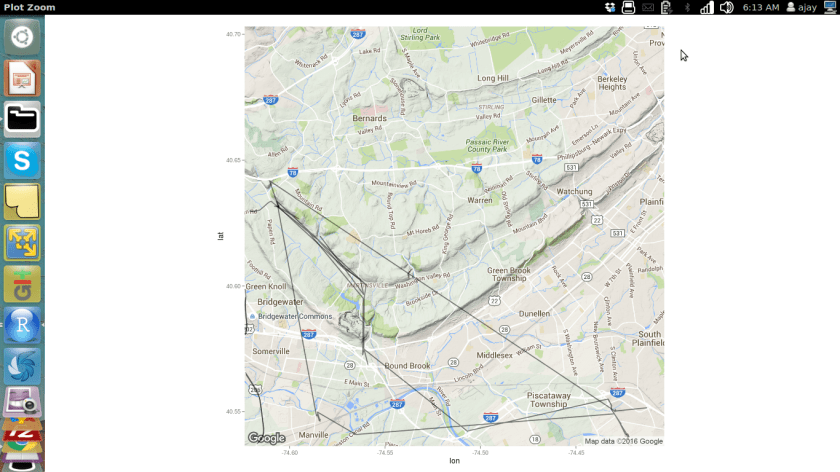

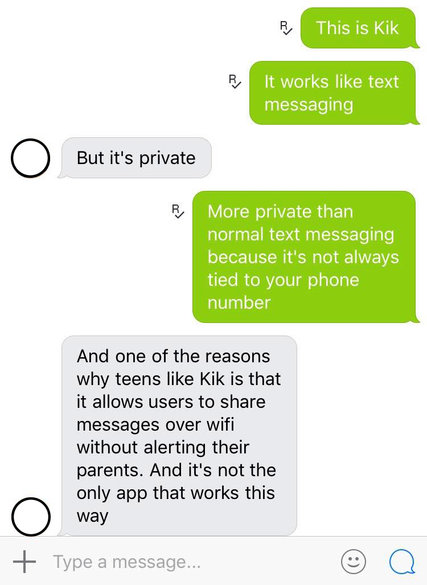

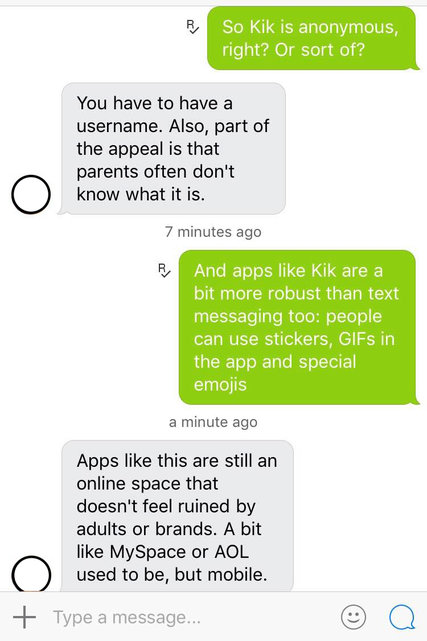

This is visual cryptography. Now imagine using a one-time pad codebook made of nothing but emojis, and talking through mobile and Kik.
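To make the idea concrete, here is a minimal sketch of the classic two-share visual cryptography scheme, assuming a tiny black-and-white image given as rows of 0/1 values. The function names (`make_shares`, `stack`, `decode`) are made up for illustration. Each share on its own is just random noise; only overlaying both reveals the picture.

```python
import secrets

# Each secret pixel becomes two subpixels per share (1 = black, 0 = transparent).
PATTERNS = ((0, 1), (1, 0))


def make_shares(secret):
    """Split a black-and-white image (rows of 0/1, 1 = black) into two shares."""
    share_a, share_b = [], []
    for row in secret:
        row_a, row_b = [], []
        for pixel in row:
            pattern = secrets.choice(PATTERNS)  # fresh random choice per pixel
            complement = tuple(1 - s for s in pattern)
            row_a.extend(pattern)
            # White pixel: identical patterns. Black pixel: complementary ones.
            row_b.extend(complement if pixel else pattern)
        share_a.append(row_a)
        share_b.append(row_b)
    return share_a, share_b


def stack(share_a, share_b):
    """Overlay two transparencies: a subpixel is black if either share is black."""
    return [[a | b for a, b in zip(ra, rb)] for ra, rb in zip(share_a, share_b)]


def decode(stacked):
    """Read subpixel pairs: two black subpixels mean a black secret pixel."""
    return [
        [1 if row[i] + row[i + 1] == 2 else 0 for i in range(0, len(row), 2)]
        for row in stacked
    ]


secret = [
    [1, 0, 1],
    [0, 1, 0],
]
share_a, share_b = make_shares(secret)
recovered = decode(stack(share_a, share_b))
```

The random pattern plays the role of a one-time pad: it has to be fresh for every pixel and never reused, which is the same rule an emoji codebook would have to follow.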

Etherpad is a highly customizable open source online editor providing collaborative editing in really real-time.

Think of it as Kik for laptops.

Unfortunately, the NSA killed “WASTE again”.

What is “WASTE again”?

“WASTE again” enables you to create a decentralized and secure private mesh network over an insecure network, such as the internet. Once the public encryption keys are exchanged, sending messages, creating group chats and transferring files is easy and secure.

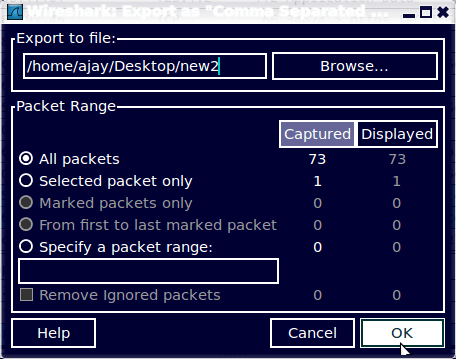

Creating a mesh

To create a mesh you need at least two computers with “WASTE again” installed. During installation, a unique pair of public and private keys is generated for each computer. Before the first connection can be established, you need to exchange these public keys. These keys enable “WASTE again” to authenticate every connection to other “WASTE again” clients.

After exchanging the keys, you simply type in the IP address of the computer you want to connect to. If that computer is located behind a firewall or a NAT router, you have to create a port mapping first to enable incoming connections.

At least one computer in your mesh has to be able to accept incoming connections, making it a “public node”. If no direct connection between two firewalled computers can be made, “WASTE again” automatically routes your traffic through one or more of the available public nodes.
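The relaying rule described above can be sketched roughly like this. It is only an illustration, not the actual “WASTE again” routing code: it assumes every node already trusts every other node, and that a direct link works whenever at least one side is a public node. The names `can_link` and `find_route` are hypothetical.

```python
from collections import deque


def can_link(a, b, public):
    """A direct connection needs at least one side that accepts incoming connections."""
    return a in public or b in public


def find_route(source, target, nodes, public):
    """Breadth-first search for the shortest chain of direct links."""
    previous = {source: None}
    queue = deque([source])
    while queue:
        current = queue.popleft()
        if current == target:
            # Walk back through the predecessors to rebuild the path.
            route = []
            while current is not None:
                route.append(current)
                current = previous[current]
            return route[::-1]
        for candidate in nodes:
            if candidate not in previous and can_link(current, candidate, public):
                previous[candidate] = current
                queue.append(candidate)
    return None  # no public node can bridge the two computers


nodes = ["alice", "bob", "relay"]  # alice and bob are both firewalled
route = find_route("alice", "bob", nodes, public={"relay"})
```

With no public node at all, `find_route` returns `None`, which matches the requirement that at least one computer in the mesh must accept incoming connections.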

Every new node simply has to exchange keys with one of the connected nodes and then connect to it. All the other nodes will exchange their keys automatically over the mesh.
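How keys might spread over the mesh can be pictured with a simple flooding loop. This is a sketch under assumptions, not the real “WASTE again” protocol: keys are plain strings, links are pairs of node names, and `propagate_keys` is a hypothetical helper.

```python
def propagate_keys(links, keys):
    """Flood public keys across connected nodes until nothing new is learned."""
    # Every node starts out knowing only its own public key.
    known = {node: {node: key} for node, key in keys.items()}
    changed = True
    while changed:
        changed = False
        for a, b in links:
            # Two connected nodes share everything they know with each other.
            merged = {**known[a], **known[b]}
            for node in (a, b):
                if known[node] != merged:
                    known[node] = dict(merged)
                    changed = True
    return known


keys = {"alice": "pk-alice", "bob": "pk-bob", "carol": "pk-carol"}
# carol is the new node: she exchanged keys with bob only, then connected to him.
links = [("alice", "bob"), ("carol", "bob")]
known = propagate_keys(links, keys)
```

After the loop settles, alice holds carol's key even though the two never exchanged keys by hand.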

Then there is the question of how safe Google Drive is from government as well as corporate espionage.

Post-Snowden, we know that the company cofounded by a Russian Jewish immigrant went back on those very core values and helped create a benign version of the KGB. So you run away from Russia because, hey, the State is monitoring everything, and then you refuse to stop your company from helping the new country's government do exactly the same. Google's post-Snowden loss of credibility should make businesses consider whether Microsoft Exchange servers are more secure than Google's. At least Microsoft offers no “Don't be evil” hypocrisy.

Chinese hackers are just a Google bogey. Pakistani intelligence (the ISI) helping ISIS with ideas and consulting is the real threat. Too bad we were too busy creating algorithms to get more ad clicks.

The Tor (onion routing) Project is more like The Onion, the comedy site, thanks to its proliferation of NSA nodes.

The Tree of Liberty needs to be watered with the blood of patriotic cryptographers who do not accept easy money but stay true to the ideal of a public free from government espionage, with Fourth Amendment rights secured digitally as well.